AI Chatbots Prescribing Medication: Promise, Peril, and Guardrails

You want faster access to refills and clear answers about psychiatric meds, yet you worry about safety when an AI chatbot that prescribes medication sits between you and a clinician. That tension is real right now. Health systems and startups are testing bots that draft refill approvals in minutes, but The Verge's reporting on an AI chatbot offering to refill psychiatric drugs without a clinician in the loop shows how fragile the guardrails are. Regulators are already watching. The stakes are high: one bad refill can trigger side effects, drug interactions, or liability fights. What should you look for before trusting the chat window? And how should providers and vendors build this tech without sleepwalking into risk?

Rapid Signals Worth Your Attention

- Some AI chatbots already propose psychiatric refills, revealing gaps in clinician oversight.

- Safety nets hinge on tight EHR integration, audit trails, and explicit escalation paths.

- Clear FDA and DEA rules on automated prescribing are still emerging.

- Patients need transparent disclosures about when a human reviews the decision.

- Vendors must prove bias controls and contraindication checks actually work.

Why AI Chatbot Prescribing Took Off

Refills are a perfect test bed: structured data, repeatable logic, and frazzled clinics with backlogs. Providers see a chance to cut admin time and respond faster to anxious patients. But drug management is more like piloting a plane than sending a calendar invite. Miss a warning, and someone gets hurt.

Speed without guardrails is a liability, not a feature.

Think of it like automated traffic lights. When the timing is right and sensors work, traffic flows. When the sensors glitch, you get gridlock or crashes. Psychiatric meds demand even stricter timing and context.

How Safe AI Chatbot Prescribing Should Work

- Identity and consent checks: Validate the patient, confirm consent, and log every step.

- Clinical criteria: Dose limits, recent vitals, labs, and symptom screens must gate any refill recommendation.

- Contraindication scanning: Cross-check current meds, allergies, and black box warnings before proposing anything.

- Escalation defaults: When data is missing or symptoms change, hand off to a human clinician automatically.

- Auditability: Store rationale, model version, and prompt history so QA teams can replay decisions.

Skip one of these and you invite risk. Honest question: would you trust a pilot who says the checklist is optional?
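To make the checklist concrete, here is a minimal Python sketch of a refill gate that escalates by default. Every name in it (`RefillRequest`, `gate_refill`, the individual fields) is hypothetical and invented for illustration; a real system would pull these values from the EHR and apply clinically validated criteria rather than the toy rules shown here.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class RefillRequest:
    # Hypothetical fields; a real system would read these from the EHR.
    identity_verified: bool
    consent_on_file: bool
    requested_daily_dose_mg: Optional[float]  # None means the dose is missing
    max_daily_dose_mg: float
    symptoms_changed: Optional[bool]          # None means the screen was not completed
    interacting_meds: list[str] = field(default_factory=list)


@dataclass
class Decision:
    action: str          # "draft_for_clinician_review" or "escalate"
    reasons: list[str]


def gate_refill(req: RefillRequest) -> Decision:
    """Run every check; any failure or missing datum escalates to a human."""
    reasons = []
    if not req.identity_verified:
        reasons.append("identity not verified")
    if not req.consent_on_file:
        reasons.append("consent missing")
    if req.requested_daily_dose_mg is None:
        reasons.append("dose missing")
    elif req.requested_daily_dose_mg > req.max_daily_dose_mg:
        reasons.append("dose exceeds limit")
    if req.symptoms_changed is None:
        reasons.append("symptom screen incomplete")
    elif req.symptoms_changed:
        reasons.append("symptoms changed")
    if req.interacting_meds:
        reasons.append("possible interaction: " + ", ".join(req.interacting_meds))

    if reasons:
        return Decision("escalate", reasons)
    # Even a clean pass only produces a draft; a clinician still signs off.
    return Decision("draft_for_clinician_review", ["all checks passed"])
```

The design choice worth noting is that missing data is treated the same as a failed check. The gate never guesses, and the returned reasons double as the start of an audit record.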

Regulatory Ground Truths

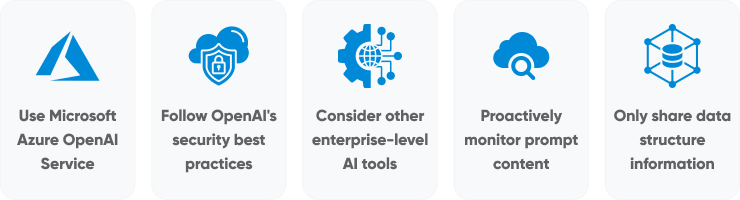

FDA guidance on clinical decision support draws a line: tools that inform are treated differently from tools that direct prescribing. DEA rules on controlled substances add another hurdle. Vendors must document that a licensed clinician reviews or signs off on controlled-substance refills. Anything else is walking into regulatory crossfire.

Privacy is another landmine. Bots need access to charts to be useful, but every API call is a potential HIPAA exposure. Encrypt data in transit, restrict scopes, and log every access. It is boring plumbing, yet it keeps you out of headlines.
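As a rough illustration of "restrict scopes, and log every access," the sketch below checks a requested scope against an allowlist and records every attempt, allowed or not. The scope names and the `request_chart_access` function are assumptions made up for this example; they do not correspond to any particular EHR's API, and encryption in transit is left to the transport layer.

```python
import json
import time

# Hypothetical scope names; a real deployment would use whatever the
# EHR's authorization server defines.
ALLOWED_SCOPES = {"medications.read", "allergies.read"}


def request_chart_access(scope: str, patient_id: str, access_log: list) -> bool:
    """Log the access attempt, then allow it only if the scope is permitted."""
    allowed = scope in ALLOWED_SCOPES
    access_log.append(json.dumps({
        "ts": time.time(),
        "patient_id": patient_id,
        "scope": scope,
        "allowed": allowed,
    }))
    return allowed
```

Logging denied attempts as well as granted ones is deliberate: a bot that keeps asking for data it should not see is itself a signal worth reviewing.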

Bias and Equity Checks

Psychiatric care already suffers from unequal access. If training data underrepresents certain groups, the bot may deny refills too often or miss adverse effects. Borrow a play from nutrition labels: publish model evaluation results by demographic slice. And refresh those tests as formularies and populations shift.
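One simple way to produce a per-slice result is to compute the refill denial rate for each demographic group and compare them. The sketch below assumes a hypothetical input of `(group_label, was_denied)` pairs; the sample data is synthetic and exists only to show the calculation.

```python
from collections import defaultdict


def denial_rate_by_group(records):
    """records: iterable of (group_label, was_denied) pairs."""
    totals = defaultdict(int)
    denials = defaultdict(int)
    for group, was_denied in records:
        totals[group] += 1
        if was_denied:
            denials[group] += 1
    return {g: denials[g] / totals[g] for g in totals}


# Synthetic example data, not real results.
sample = [("A", True), ("A", False), ("A", False), ("A", False),
          ("B", True), ("B", True), ("B", False), ("B", False)]
rates = denial_rate_by_group(sample)
# rates == {"A": 0.25, "B": 0.5}
```

A gap like the one between groups A and B here does not prove bias on its own, but it is the kind of number worth publishing and investigating.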

Practical QA Steps

- Red-team prompts for edge cases like pregnancy, substance use, and polypharmacy.

- Simulate pharmacy stock-outs to see how the bot reroutes options.

- Measure false approvals versus false denials, then tune for patient safety, not mere throughput.

Think of it as tuning a guitar. Tighten one string too far, and the whole song sounds off.
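The false-approval versus false-denial trade-off can be sketched as a weighted threshold search. This assumes, hypothetically, that the bot emits a confidence score per request and that each test case carries a clinician's ground-truth label; the 10-to-1 weighting is an illustrative placeholder, not a recommended value.

```python
def count_errors(cases, threshold):
    """cases: (model_score, clinician_would_approve) pairs.
    The bot proposes approval when score >= threshold."""
    false_approvals = false_denials = 0
    for score, should_approve in cases:
        approved = score >= threshold
        if approved and not should_approve:
            false_approvals += 1
        elif not approved and should_approve:
            false_denials += 1
    return false_approvals, false_denials


def pick_threshold(cases, candidates, fa_weight=10.0, fd_weight=1.0):
    """Choose the candidate threshold with the lowest weighted error cost."""
    def cost(t):
        fa, fd = count_errors(cases, t)
        return fa_weight * fa + fd_weight * fd
    return min(candidates, key=cost)


# Synthetic cases for illustration only.
cases = [(0.9, True), (0.8, True), (0.7, False), (0.6, True), (0.3, False)]
safest = pick_threshold(cases, [0.5, 0.65, 0.75])  # picks 0.75
```

With false approvals weighted ten times heavier, the search accepts one extra false denial to eliminate the unsafe approval. Weight the two errors equally and it would pick 0.5 instead, which is the throughput-first tuning the list above warns against.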

Building Trust With Patients

Patients should see who or what is making the call. Clear UI labels, explicit handoffs to humans, and easy ways to request a clinician matter. Use plain language in the chat: “An AI drafted this, and a clinician will review before sending.” That line alone can defuse anxiety.

Support teams need scripts for when the bot errs. Own the mistake, fix it fast, and show the fix. Trust grows slowly and breaks fast.

What Providers Can Do Now

- Run pilots with narrow scopes, like SSRIs for stable patients, before expanding.

- Attach every AI recommendation to a human-in-the-loop workflow.

- Set up incident response drills that include pharmacy partners.

- Demand vendor attestations on safety tests and update cadence.

- Track patient satisfaction and clinical outcomes, not just ticket close time.

Look, no one gets a free pass because the tech feels shiny. Patients expect real care, not shortcuts.

Vendor Checklist Before Shipping

Ship with an API that supports reversible decisions, not just one-way approvals. Offer admins the power to halt the model when metrics go sideways. Provide a sandbox with synthetic data so health systems can test without patient risk. And bake in explainability: show why the model suggested a refill, not just that it did.
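A minimal sketch of what reversible decisions, an admin halt, and a stored rationale might look like together is below. `ProposalService` and `RefillProposal` are hypothetical names invented for this example, and the in-memory dictionary stands in for whatever durable store a real product would use.

```python
from dataclasses import dataclass


@dataclass
class RefillProposal:
    proposal_id: str
    rationale: str           # why the model suggested the refill
    status: str = "proposed"


class ProposalService:
    def __init__(self):
        self.halted = False
        self.proposals = {}

    def halt(self):
        """Admin kill switch: stop issuing new proposals."""
        self.halted = True

    def propose(self, proposal_id, rationale):
        if self.halted:
            raise RuntimeError("model halted by admin")
        proposal = RefillProposal(proposal_id, rationale)
        self.proposals[proposal_id] = proposal
        return proposal

    def revoke(self, proposal_id):
        """Reverse an earlier decision; the record is kept, not deleted."""
        self.proposals[proposal_id].status = "revoked"
```

Revoking flips a status instead of deleting the record, so the rationale stays available for later review even after a decision is reversed.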

Where This Goes Next

The line between helpful assistant and unauthorized prescriber is thin and shifting. If teams get the guardrails right, chatbots can clear backlogs and free clinicians for tougher cases. If they rush, expect lawsuits and regulatory blowback. Which path will your org choose?