Machine Learning

David Silver on Reinforcement Learning’s Next AI Bet

Most AI coverage right now fixates on chatbots, larger context windows, and the race to ship new model features. But that misses a harder question.

AI-Powered Table Tennis Robot Marks a Robotics Breakthrough

The AI-powered table tennis robot is more than a party trick. It is a hard test of perception, timing, and control, and this new milestone…

The Complete Guide to AI Model Quantization in 2026

Running a 72-billion-parameter model requires about 144 GB of GPU memory for the weights alone at 16-bit precision. Most practitioners do not have that hardware. LLM quantization…
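The 144 GB figure is plain arithmetic: 72 billion parameters at 2 bytes each (16-bit weights). A minimal sketch of that calculation at several bit widths follows, assuming decimal gigabytes (1 GB = 10^9 bytes) and counting weights only. It ignores the KV cache, activations, and per-group scale factors that real quantization formats store, so actual memory use is higher. The function name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate weight-only memory in decimal GB (1 GB = 1e9 bytes).

    Assumption: ignores KV cache, activations, and quantization metadata
    such as per-group scales and zero-points.
    """
    bytes_total = num_params * bits_per_param / 8  # bits -> bytes
    return bytes_total / 1e9


if __name__ == "__main__":
    params = 72e9  # 72 billion parameters
    for label, bits in [("FP32", 32), ("FP16/BF16", 16), ("INT8", 8), ("INT4", 4)]:
        print(f"{label:>9}: {weight_memory_gb(params, bits):.0f} GB")
```

Halving the bit width halves the weight footprint, which is why 4-bit quantization brings a 72B model from 144 GB down to roughly 36 GB of weights.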

How to Reduce LLM Hallucinations: 7 Techniques That Work in Production

LLMs generate confident, fluent text that is sometimes completely wrong. This tendency to hallucinate is the single biggest barrier…

Meta Releases Llama 4 Fine-Tuning Toolkit: What’s New

Meta shipped a major update to its Llama 4 fine-tuning toolkit in April 2026. The release includes a unified CLI tool that handles LoRA, QLoRA, and…

AI Chip Wars: AMD MI400 vs NVIDIA Blackwell vs Intel Gaudi 3

The 2026 AI chip landscape has three serious contenders for data center AI workloads: AMD’s MI400, NVIDIA’s Blackwell B200, and Intel’s Gaudi 3.

RAG Is Not Dead: How Retrieval-Augmented Generation Evolved in 2026

When GPT-5.4 launched with a 1-million-token context window, a common reaction was: “RAG is dead. Just stuff everything in the context…”

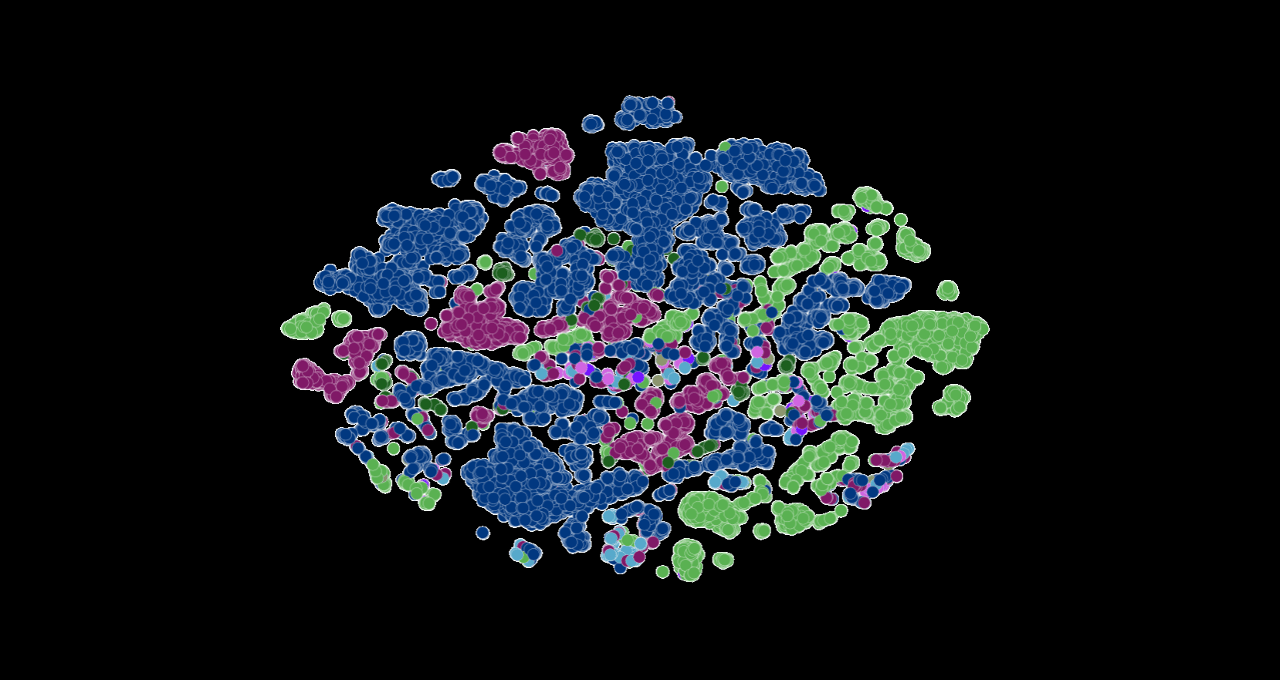

Large Genome Model: Open Weights for Trillions of Bases

Labs keep hitting the same wall: translating raw sequencing data into answers without burning through budgets or months. An open Large Genome Model…

Mixture of Experts in 2026: How Modern LLMs Route Tokens Efficiently

The largest language models in 2026 share a common architectural pattern: mixture of experts (MoE). GPT-5.4, Qwen 3.5, DeepSeek-V4, …

Why AI Stumbles on Certain Games and How to Fix It

Developers want game agents that win, learn, and entertain, yet AI game-playing limitations keep showing up in titles that reward improvisation or…