AI Facial Recognition Oversight Is Falling Behind

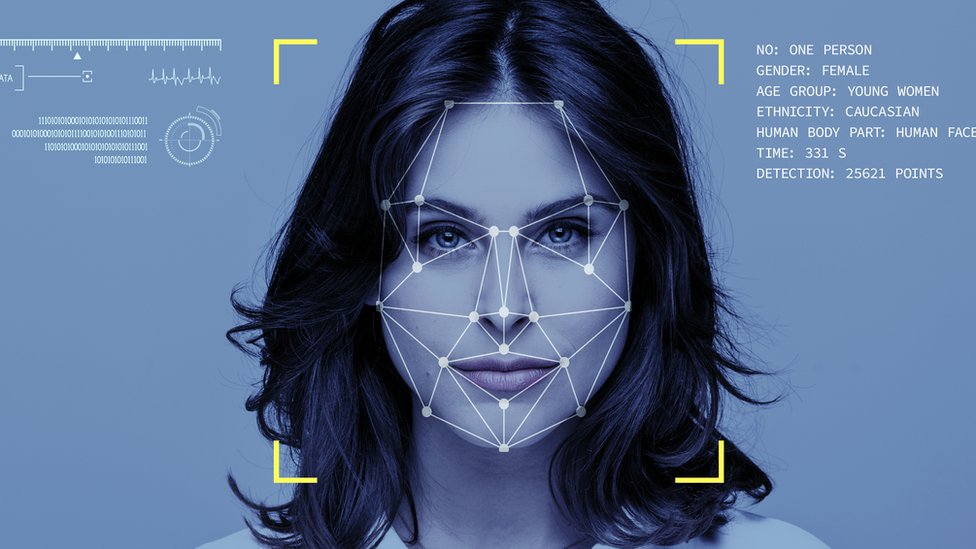

You are seeing AI facial recognition oversight fail the basic timing test. The systems are spreading through policing, borders, retail, and public spaces faster than rules can catch up. That matters now because once these tools become routine, weak safeguards harden into normal practice. And then bad decisions get baked in.

A recent Guardian report points to a blunt warning from watchdogs. Oversight is lagging far behind the technology itself. That gap raises familiar problems, but they are not abstract. People can be misidentified, tracked without real consent, or judged by systems they cannot inspect or challenge. If a tool can scan a crowd in seconds, who is checking whether it should be there at all?

What stands out

- Deployment is moving faster than regulation, especially in public surveillance and law enforcement use.

- Accountability is still thin: when facial recognition produces errors or reflects hidden bias, it is often unclear who answers for the outcome.

- Procurement rules often focus on performance claims, not civil rights impact or public transparency.

- Watchdogs are warning about a structural gap, not a one-off policy delay.

Why AI facial recognition oversight is such a mess

Look, this is not a new pattern in tech policy. Companies and agencies can buy systems quickly. Legislatures and regulators move slowly. But facial recognition makes the delay more dangerous because the stakes are personal. Your face is not a password you can reset.

The core issue is simple. Oversight frameworks were built for older forms of data processing, while modern computer vision tools can identify people, infer things about them, and track them at scale. That mismatch leaves regulators trying to police a Formula 1 car with parking rules.

Watchdogs are not saying the technology appeared overnight. They are saying the controls around it remain too weak for the power these systems now hold.

And that weak control shows up in a few predictable places.

Public sector use moves first

Police, border agencies, and local authorities often adopt facial recognition because the pitch sounds efficient. Faster identification. Better security. Fewer staff hours. Those claims can be attractive in budget-tight environments.

But public sector use carries a higher burden. State power changes the equation. A mistaken match in a shop is bad. A mistaken match in a policing context can alter someone’s life.

Testing does not answer the hardest questions

Vendors usually point to accuracy metrics. Those numbers matter, but they do not settle the real dispute. Accuracy in a controlled benchmark is not the same as legitimacy in a messy public setting. Lighting changes. Camera angles drift. Reference databases vary in size and image quality. Human reviewers may trust the machine too much.

That last part gets missed. People tend to treat algorithmic output as neutral, even when it is shaky.
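A rough arithmetic sketch shows why a strong benchmark score does not settle the matter. The Python below uses entirely hypothetical numbers (crowd size, watchlisted people present, match rates) and a made-up helper, `expected_alerts`. None of it comes from the Guardian report or any vendor. It only illustrates how a small false match rate, applied to a large crowd, can still produce more wrong alerts than right ones.

```python
def expected_alerts(crowd_size, watchlisted_present, true_match_rate, false_match_rate):
    """Estimate alerts for one scanning session.

    All inputs are illustrative assumptions, not measured values.
    Returns (true_alerts, false_alerts, precision), where precision is
    the share of alerts that point at someone actually on the watchlist.
    """
    not_on_watchlist = crowd_size - watchlisted_present
    true_alerts = watchlisted_present * true_match_rate
    false_alerts = not_on_watchlist * false_match_rate
    total_alerts = true_alerts + false_alerts
    precision = true_alerts / total_alerts if total_alerts else 0.0
    return true_alerts, false_alerts, precision


# Hypothetical scenario: a busy public space scanned against a watchlist.
true_alerts, false_alerts, precision = expected_alerts(
    crowd_size=50_000,         # faces scanned (assumed)
    watchlisted_present=10,    # watchlisted people actually in the crowd (assumed)
    true_match_rate=0.9,       # chance a watchlisted person is flagged (assumed)
    false_match_rate=0.001,    # chance anyone else is wrongly flagged (assumed)
)

print(f"true alerts:  {true_alerts:.1f}")
print(f"false alerts: {false_alerts:.1f}")
print(f"share of alerts that are correct: {precision:.0%}")
```

Under these assumed figures, about 50 of the people flagged are not on the watchlist, against 9 who are, so only around 15% of alerts are correct. Change the assumptions and the result moves, which is why operational conditions matter more than a lab figure.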

Transparency remains patchy

In many deployments, the public still does not know enough about where systems are active, what data they use, how long records are kept, or how false matches are handled. That is a governance failure, full stop.

Where the real risks sit in AI facial recognition oversight

The headline risk is not just bias, though bias remains central. It is the combination of scale, opacity, and official power. Put those together and you get a tool that can quietly shift the balance between citizens and institutions.

- Misidentification. False positives can trigger stops, investigations, or denied access.

- Function creep. A system bought for one purpose can slowly expand into others.

- Mass surveillance. Continuous scanning changes public life, even before an arrest or sanction happens.

- Weak redress. People often struggle to challenge automated or semi-automated decisions.

- Procurement blind spots. Agencies may buy tools before serious impact review takes place.

Honestly, function creep may be the biggest sleeper issue. A narrow pilot can become standard operating practice with very little public debate. That is how temporary measures turn permanent.

One sentence matters here.

Rules that arrive after mass deployment usually protect institutions more than citizens.

What better AI facial recognition oversight would look like

If governments are serious, they need to stop treating oversight as paperwork added after purchase. It has to shape whether a system is used in the first place. Think of building codes. You do not inspect the foundation after the tower is open.

Start with use-case limits

Not every deployment deserves approval. Some uses should face strict limits, and some should be off the table. Live facial recognition in public spaces, especially for routine surveillance, deserves the toughest scrutiny.

That means asking basic questions before any rollout:

- Is the use necessary, or just convenient?

- Is there a less intrusive alternative?

- What is the error cost if the system gets it wrong?

- Who can audit the model, data, and outcomes?

- How can an affected person challenge the result?

Require independent audits

Internal testing is not enough. Agencies and vendors have incentives to present systems in the best light. Independent audits should review accuracy across demographic groups, operational conditions, retention practices, and human review procedures.

And yes, those audits should be public where possible (with narrow security exceptions). Public trust cannot rest on secret validation.
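One piece of such an audit can be sketched in a few lines. The Python below is a minimal illustration, not a real audit methodology: the `Comparison` record, the group labels, and the toy data are all hypothetical. It computes the false match rate separately for each demographic group, then the ratio between the worst and best group, which is the kind of gap an independent reviewer would want published.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Comparison:
    """One audited face comparison (hypothetical record layout)."""
    group: str            # demographic label assigned by the auditor
    same_person: bool     # ground truth: do the two images show the same person?
    system_matched: bool  # what the system decided


def false_match_rate_by_group(comparisons):
    """Share of different-person comparisons the system wrongly matched, per group."""
    impostor_trials = defaultdict(int)
    false_matches = defaultdict(int)
    for c in comparisons:
        if c.same_person:
            continue  # genuine pairs do not count toward the false match rate
        impostor_trials[c.group] += 1
        if c.system_matched:
            false_matches[c.group] += 1
    return {group: false_matches[group] / n for group, n in impostor_trials.items()}


def disparity_ratio(rates):
    """Highest group rate divided by lowest group rate."""
    if not rates:
        raise ValueError("no different-person comparisons to audit")
    lowest, highest = min(rates.values()), max(rates.values())
    if highest == 0:
        return 1.0  # no false matches in any group
    return float("inf") if lowest == 0 else highest / lowest


# Toy data, invented purely for illustration.
sample = [
    Comparison("group_a", False, True),
    Comparison("group_a", False, False),
    Comparison("group_a", False, False),
    Comparison("group_a", False, False),
    Comparison("group_a", True, True),
    Comparison("group_b", False, True),
    Comparison("group_b", False, True),
    Comparison("group_b", False, False),
    Comparison("group_b", False, False),
    Comparison("group_b", True, True),
]

rates = false_match_rate_by_group(sample)
print(rates)
print(disparity_ratio(rates))
```

In this toy data the second group is wrongly matched twice as often as the first. A real audit would need far larger samples, field conditions rather than lab images, and checks on retention and human review that no short script can cover.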

Build in reporting and redress

Any agency using facial recognition should publish regular reports on where it is used, how often it returns matches (hit rates), how many of those matches were false, complaint volumes, and policy changes. Without reporting, oversight turns into theater.

People also need a clear and fast path to appeal. If someone is flagged by a system, they should be able to learn what happened and contest it without entering a bureaucratic maze.

What businesses should learn from the watchdog warnings

Private companies should not read this as a government-only problem. Retail, events, property management, and employers face many of the same trust and compliance questions. A legal green light today can become a reputational mess tomorrow.

Smart operators should treat facial recognition as a high-risk system, not a default convenience feature. That means stricter vendor review, tighter data minimization, and board-level attention. If your team cannot explain why facial recognition is necessary, that is your answer: do not deploy it.

The best governance move may be refusing deployment until the case for necessity is stronger than the case for speed.

The bigger policy fight is still ahead

The deeper argument is about democratic control. How much automated identification should public institutions have in ordinary civic life? That is not a technical question dressed up as law. It is a political choice.

Some policymakers still talk as if better accuracy will solve the core concern. It will not. Even a highly accurate system can be used in ways that the public rejects. Precision does not equal permission.

But this can still change. Legislators can set narrower legal boundaries. Regulators can demand tougher impact assessments. Courts can force clearer standards for proportionality and due process. None of that is flashy. It is just overdue.

What happens next

You should expect the fight over AI facial recognition oversight to sharpen, not fade. The technology will keep improving. The public case for restraint will get stronger at the same time. That tension is the story.

My view is plain. Until oversight catches up in a serious way, broad facial recognition deployment deserves skepticism by default. If governments and companies want trust, they need to earn it with limits, audits, and public accountability. Otherwise, why should anyone accept being scanned first and informed later?