AI Risk Assessment: What Matters Now

You hear constant noise about AI risk, yet the real issue is sorting hype from hazards fast. AI risk assessment sits at the center of that tension: you want progress without lighting a match near a dry forest. The stakes grew this year as models scaled, regulators stirred, and businesses shipped AI features into customer workflows. You need a clear path that balances lab safety, market reality, and governance that can keep up. Skip the drama; focus on the levers you can pull today.

Quick Signals to Watch

- Alignment drift shows up when fine-tuned models behave unpredictably outside benchmarks.

- Concentration risk grows when a few labs control frontier model access and updates.

- Model evaluation gaps appear when red-team coverage ignores real user contexts.

- Supply chain exposure spans data provenance, model hosting, and dependency sprawl.

Why AI Risk Assessment Beats Blanket Fear

AI risk assessment forces you to sort credible threats from cinematic doom. It frames concrete scenarios: model misuse, cascading automation errors, and overreliance on opaque systems in critical infrastructure. Think of it like defensive driving on black ice; you respect the conditions and adjust speed, but you still need to reach your destination.

Safety without delivery is academic theater. Delivery without safety is venture roulette.

This tension drives smarter policy and product choices.

Building Guardrails That Survive Reality

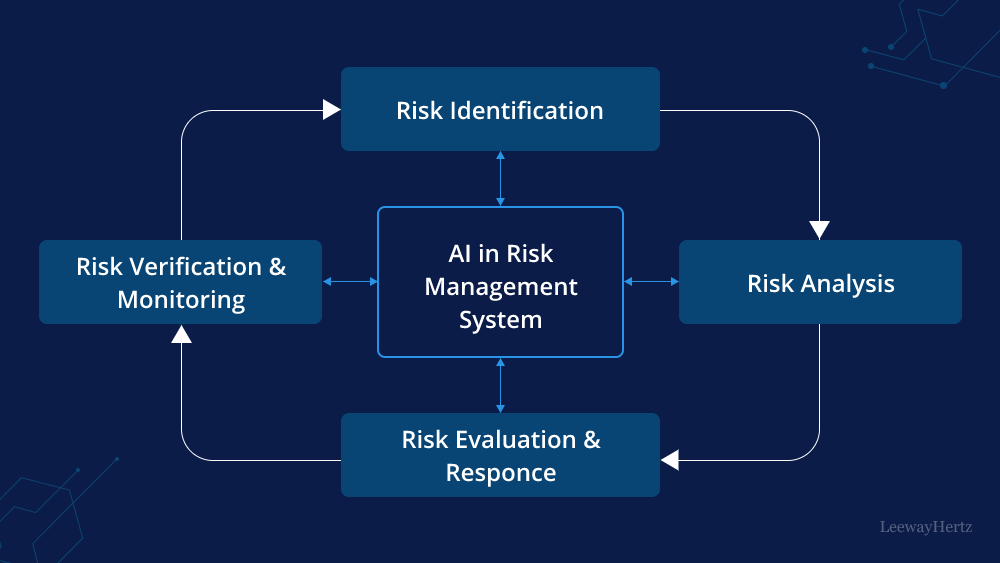

Here is the thing: policies on paper crumble if they ignore deployment friction. Use layered defenses that combine technical controls, operational checks, and governance.

- Technical filters: strong input/output moderation, circuit breakers for high-risk actions, and continuous evals that match live traffic patterns.

- Operational discipline: incident runbooks, rollback plans, and cross-team drills that treat misuse like a production outage.

- Governance fit: a board-level risk committee that understands AI risk assessment and approves model classes, not individual prompts.
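
To make "circuit breakers for high-risk actions" concrete, here is a minimal sketch in Python. It assumes some upstream moderation layer already flags risky actions; the class name, thresholds, and cooldown are all hypothetical and would need tuning against your own traffic.

```python
import time
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class CircuitBreaker:
    """Blocks high-risk actions after too many flags inside a time window.

    All default values are illustrative assumptions, not recommendations.
    """
    max_flags: int = 3
    window_seconds: float = 60.0
    cooldown_seconds: float = 300.0
    _flag_times: List[float] = field(default_factory=list, init=False)
    _opened_at: Optional[float] = field(default=None, init=False)

    def allow(self, now: Optional[float] = None) -> bool:
        """Return True if high-risk actions may proceed right now."""
        now = time.monotonic() if now is None else now
        if self._opened_at is not None:
            if now - self._opened_at < self.cooldown_seconds:
                return False  # breaker is open: keep blocking
            # Cooldown elapsed: close the breaker and start fresh.
            self._opened_at = None
            self._flag_times.clear()
        return True

    def record_flag(self, now: Optional[float] = None) -> None:
        """Record one flagged action and trip the breaker if needed."""
        now = time.monotonic() if now is None else now
        # Keep only flags that still fall inside the sliding window.
        self._flag_times = [
            t for t in self._flag_times if now - t <= self.window_seconds
        ]
        self._flag_times.append(now)
        if len(self._flag_times) >= self.max_flags:
            self._opened_at = now
```

In practice you would call `allow()` before executing a risky action and `record_flag()` whenever moderation flags one. Pairing the tripped state with an incident runbook is what ties the technical layer to the operational one.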

Measuring Risk Without Freezing Innovation

Why assume safety freezes progress when measured guardrails can accelerate shipping? When teams embed evaluators in CI and run post-deploy audits, they find issues earlier and cut rework. But do you have the courage to pause a feature if red-teamers flag an exploit?

Look, I have covered enough tech cycles to know incentives matter. Tie launch approvals to evidence: scenario-based tests, adversarial probes, and third-party audits where stakes justify the cost. Rotate test data to avoid stale benchmarks. Avoid checklist theater; chase real failure modes instead.
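
One way to tie launch approvals to evidence is a small gate that runs in CI. The sketch below is an illustration only: the suite names, pass-rate thresholds, and the `launch_decision` function are assumptions, not a standard.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class EvalResult:
    suite: str   # e.g. "scenario" or "adversarial"
    passed: int
    total: int

    @property
    def pass_rate(self) -> float:
        return self.passed / self.total if self.total else 0.0


# Hypothetical minimum pass rates per required evidence type.
REQUIRED_SUITES = {"scenario": 0.95, "adversarial": 0.90}


def launch_decision(
    results: Iterable[EvalResult],
    high_stakes: bool = False,
    external_audit_done: bool = False,
) -> Tuple[bool, List[str]]:
    """Return (approved, reasons_for_blocking)."""
    reasons: List[str] = []
    by_suite = {r.suite: r for r in results}
    for suite, min_rate in REQUIRED_SUITES.items():
        result = by_suite.get(suite)
        if result is None:
            reasons.append(f"missing evidence: {suite}")
        elif result.pass_rate < min_rate:
            reasons.append(
                f"{suite} pass rate {result.pass_rate:.2f} "
                f"below {min_rate:.2f}"
            )
    # Third-party audits apply only where the stakes justify the cost.
    if high_stakes and not external_audit_done:
        reasons.append("third-party audit required for high-stakes launch")
    return (not reasons, reasons)
```

A gate like this blocks on missing evidence as well as failing evidence, which is the difference between a real check and checklist theater. Rotating the test data behind each suite remains a separate job the gate does not cover.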

Public Risk, Private Incentives

Frontier labs hold immense power. Treat them like chemical plants: licensed, inspected, and accountable for spills. Public risk deserves public oversight, not just self-regulation by platform executives. And yet, bans and blanket moratoria rarely age well. You need targeted rules that hit high-risk systems while keeping low-risk experimentation open.

An analogy helps: building a skyscraper requires permits, inspections, and resilient materials, but no one outlaws bricks. AI should follow the same playbook.

What Good Policy Looks Like

Strong policy starts narrow. Define thresholds for model capability, compute scale, or dual-use potential, then bind them to disclosure, eval requirements, and incident reporting. Demand transparency on training data provenance and third-party dependencies. Encourage open reporting channels for researchers who find exploits, with protections that mirror security vulnerability disclosure.
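
The threshold idea can be sketched as a simple rule: cross any trigger and the obligations attach; stay below all of them and experimentation stays open. Every number and field name here is a placeholder assumption, not a figure drawn from any regulation.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class ModelProfile:
    name: str
    training_flops: float    # total training compute
    capability_score: float  # 0..1 on a hypothetical internal benchmark
    dual_use: bool           # flagged for dual-use potential


# Illustrative thresholds only; a real policy would set and justify these.
COMPUTE_THRESHOLD_FLOPS = 1e25
CAPABILITY_THRESHOLD = 0.8

OBLIGATIONS = ("disclosure", "required_evals", "incident_reporting")


def triggered_thresholds(profile: ModelProfile) -> Tuple[str, ...]:
    """List which thresholds this model crosses."""
    triggers = []
    if profile.training_flops >= COMPUTE_THRESHOLD_FLOPS:
        triggers.append("compute_scale")
    if profile.capability_score >= CAPABILITY_THRESHOLD:
        triggers.append("capability")
    if profile.dual_use:
        triggers.append("dual_use")
    return tuple(triggers)


def obligations(profile: ModelProfile) -> Tuple[str, ...]:
    """Bind obligations to a model only if it crosses some threshold."""
    return OBLIGATIONS if triggered_thresholds(profile) else ()
```

Keeping the triggers separate from the obligations makes it easier to audit why a given model landed in scope.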

Enforcement matters. Set penalties that hurt, and fund regulators with technical staff who can read logs, not just legal briefs.

Signals to Track in the Next Year

Watch for three shifts: standardized eval suites that include social harm scenarios, insurance markets pricing AI liability, and open-weight models gaining safety tooling parity with closed systems. If two of these land, governance moves from theory to practice.

Where This Leaves You

AI risk assessment is not a spectator sport; your org either builds muscle now or inherits someone else’s mess. Start with scoped model classes, live-traffic evals, and clear escalation when outputs go sideways. Could the next model release push you past your current controls?

Next Moves That Count

- Classify models by capability and bind them to specific guardrails.

- Run red-team drills with product owners in the room.

- Budget for external audits on frontier-adjacent deployments.

- Publish a plain-language safety memo to users and investors.

Looking Ahead

AI will keep sprinting. You should too, with brakes that work and a map that updates. The real win is progress that the public can trust.