AI Terms Explained: Hallucinations, LLMs, and More

AI coverage moves fast, and the jargon can turn a useful story into noise. If you keep seeing terms like hallucinations, large language models, agents, and inference, you are not alone. An AI terms guide matters right now because these words shape how companies sell products, how lawmakers talk about risk, and how regular people judge what these systems can actually do.

Look, a lot of AI language is used loosely. That creates confusion, and sometimes it hides weak products behind polished marketing. This guide cuts through that. It explains the most common AI terms in plain English, adds context on where the labels help, and points out where the hype tends to creep in. Think of it as a field manual for reading AI news without rolling your eyes.

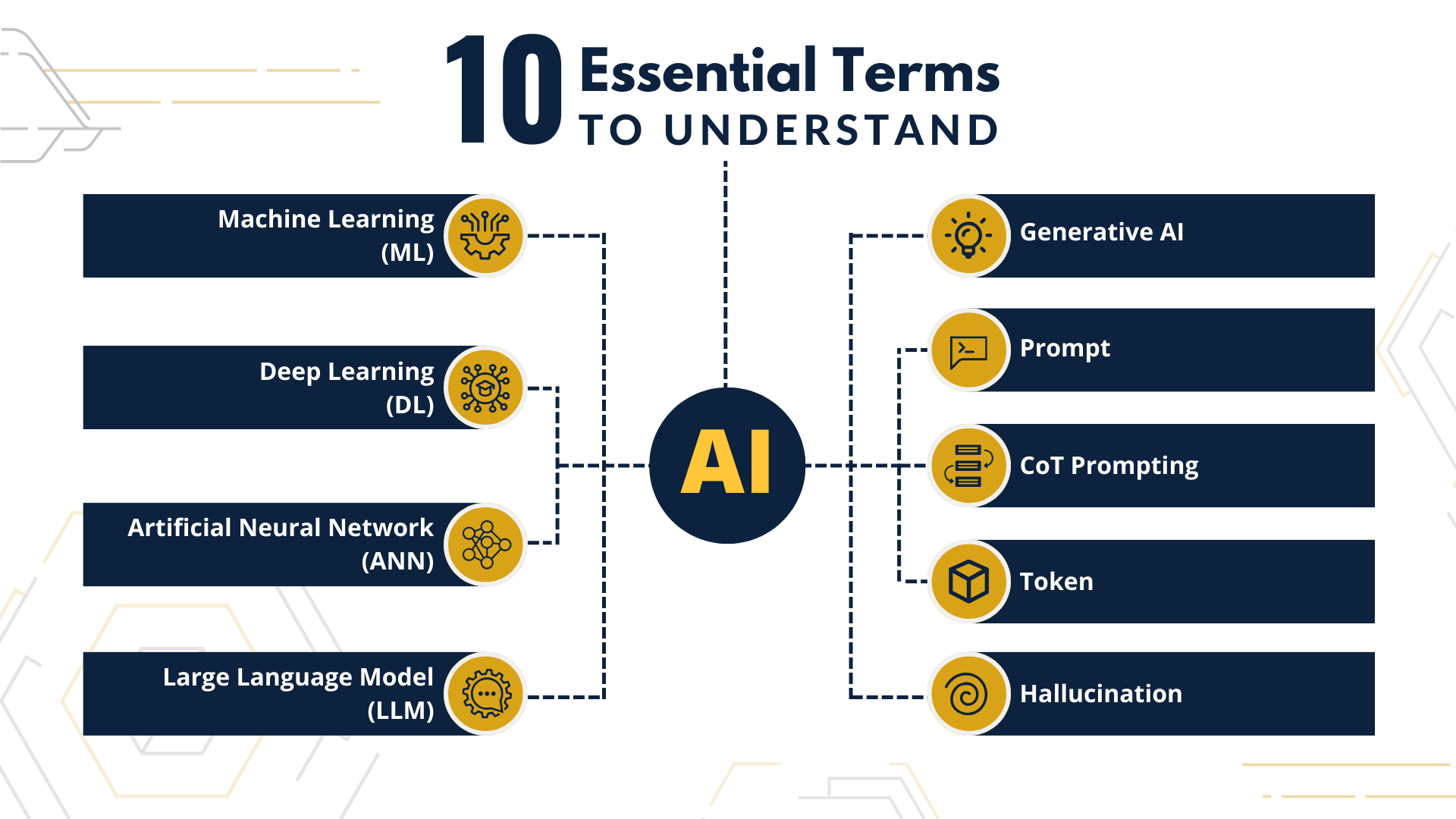

What to know first

- Large language model usually means a system trained to predict the next token in a sequence, often producing human-like text.

- Hallucination is a confident false output, which remains one of the biggest practical problems in generative AI.

- AI agent is an especially slippery term, and companies often use it far more broadly than they should.

- Inference is the stage where a trained model generates an answer, image, or decision from new input.

- Training data shapes what a model knows, what it misses, and where bias can show up.

AI terms guide: What is a large language model?

A large language model, or LLM, is a machine learning model trained on huge amounts of text to predict which token (a word or piece of a word) comes next. That sounds simple, but the result can look startlingly fluent. Tools like ChatGPT, Claude, and Gemini sit in this category.
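To make "predict what comes next" concrete, here is a deliberately tiny sketch. It is not how production LLMs work (they use neural networks with billions of weights, not lookup counts), and the corpus and function names are invented for illustration. The core idea is the same, though: look at what came before, then pick a likely continuation.

```python
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count which token follows which in the training sequence."""
    follows = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, token):
    """Return the most frequent follower seen in training, or None."""
    if token not in follows:
        return None
    return follows[token].most_common(1)[0][0]

# Hypothetical toy corpus; whole words stand in for tokens here.
corpus = "the cat sat on the mat and the cat slept".split()
model = train_bigram(corpus)

print(predict_next(model, "the"))  # cat ("cat" follows "the" twice, "mat" once)
print(predict_next(model, "dog"))  # None (never seen in training)
```

Notice that the toy model has no idea what a cat is. It only knows that "cat" tended to follow "the" in the text it saw.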

Here is the catch. Fluency is not the same thing as understanding. A model can produce clean prose, answer coding questions, and summarize documents, yet still fail basic reasoning tasks or invent facts. Why does that matter? Because polished wording often fools people into trusting an answer more than they should.

LLMs are best understood as prediction engines with impressive pattern recall, not as reliable sources of truth.

That distinction is non-negotiable if you use these tools for research, customer support, or internal business work.

AI terms guide: What are hallucinations?

Hallucinations happen when an AI system generates false information and presents it as if it were true. A chatbot might cite a paper that does not exist, invent a legal case, or claim a product has features it does not have. And yes, this still happens in top-tier models.

The term is popular, but it can be a little too soft. It makes an error sound quirky when the real issue is reliability. In some settings, the better description is simpler: the model is wrong.

TechCrunch’s glossary points to hallucinations as one of the most common AI concepts the public now runs into. That makes sense. Hallucinations are where flashy demos meet the brick wall of real-world use.

Why hallucinations happen

- The model predicts likely sequences, not verified facts.

- Prompt wording can steer it into overconfident guesses.

- Weak or outdated training data leaves gaps.

- Even retrieval systems can pass through bad source material.

It is a bit like a sports commentator who never stops talking. Sometimes they know the play. Sometimes they fill dead air with nonsense, but they still sound sure of themselves.
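The first and third causes in the list above can be shown with a toy example. This is a sketch under heavy assumptions: the training sentences, the `TRUSTED_CAPITALS` table, and all function names are hypothetical, and a word-count model stands in for a real LLM. The point is that a system picking the likeliest next word will happily complete a sentence about something it never saw, and only an outside check catches the error.

```python
from collections import Counter, defaultdict

# Hypothetical training sentences. Note that Berlin never appears.
TRAINING = [
    "paris is the capital of france",
    "the flag of france is blue white and red",
    "rome is the capital of italy",
]

# Hypothetical trusted reference, used only to check the output.
TRUSTED_CAPITALS = {"paris": "france", "rome": "italy", "berlin": "germany"}

def train(sentences):
    """Count which word follows which, sentence by sentence."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows

def complete(follows, prompt):
    """Append the single most likely next word to the prompt."""
    last = prompt.split()[-1]
    if last not in follows:
        return prompt
    guess = follows[last].most_common(1)[0][0]
    return f"{prompt} {guess}"

def is_verified(statement):
    """Check a '<city> is the capital of <country>' claim against the table."""
    words = statement.split()
    return TRUSTED_CAPITALS.get(words[0]) == words[-1]

model = train(TRAINING)
answer = complete(model, "berlin is the capital of")
print(answer)               # berlin is the capital of france
print(is_verified(answer))  # False
```

The completion reads fine and is wrong. Nothing inside the model flags it; the check has to come from somewhere else.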

What people mean by training, fine-tuning, and inference

Training is the process of teaching a model from massive datasets. The system adjusts internal weights to get better at predictions. This stage is expensive and usually handled by large labs or well-funded companies.

Fine-tuning comes later. It means taking an existing model and adjusting it for a narrower purpose, such as legal drafting, coding, or customer service. This can improve tone, domain fit, or safety behavior.

Inference is what happens when you type a prompt and the model responds. The training is done. Now the model is applying what it learned to new input in real time, or close to it.

One short way to remember it: training builds the engine, fine-tuning tweaks it, and inference is the drive.

That is the practical split.
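The same three stages can be sketched with a model that has exactly one weight. Everything here is a simplifying assumption (the datasets, the learning rate, the function names), and real training involves billions of weights and far more machinery. Still, the shape of the process carries over: training adjusts weights, fine-tuning starts from those weights and adjusts them on narrower data, and inference applies them without changing anything.

```python
def train(weight, examples, learning_rate, epochs):
    """Adjust a single weight with gradient descent on squared error."""
    for _ in range(epochs):
        for x, y in examples:
            error = weight * x - y
            weight -= learning_rate * 2 * error * x
    return weight

def infer(weight, x):
    """Inference: apply the learned weight to new input, with no updates."""
    return weight * x

# Training: start from scratch on "general" data where y = 2 * x.
general_data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
base_weight = train(0.0, general_data, learning_rate=0.01, epochs=200)

# Fine-tuning: start from the trained weight and use a small niche
# dataset where y = 2.5 * x.
niche_data = [(1.0, 2.5), (2.0, 5.0)]
tuned_weight = train(base_weight, niche_data, learning_rate=0.01, epochs=200)

print(round(infer(base_weight, 10.0), 2))   # 20.0
print(round(infer(tuned_weight, 10.0), 2))  # 25.0
```

The fine-tuned weight gives a different answer to the same input, which is the whole point of fine-tuning. Calling `infer` as many times as you like never changes either weight.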

What is generative AI, really?

Generative AI refers to systems that create new content, including text, images, audio, video, and code. Chatbots, image generators, music tools, and code assistants all fall under this umbrella.

But the label gets stretched too far. Some companies slap “generative AI” onto basic automation or search features because the term sells. Honestly, that should make you skeptical. If a product cannot explain what model it uses, what content it generates, and where human review fits, the branding is probably doing too much work.

What is an AI agent, and why is that term so messy?

An AI agent usually means a system that can take actions on your behalf, not just answer a question. It might read email, book meetings, query databases, write code, or chain together steps across apps.

That is the theory. In practice, “agent” has become one of the loosest terms in AI marketing. A workflow with a few if-then rules may be called an agent. So might a chatbot with tool access. So might a full autonomous system. Those are not the same thing.

If you are evaluating a product, ask three things:

- What actions can it take without approval?

- What tools or systems can it access?

- What happens when it fails?

(If the answer to the third question is vague, slow down.)
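Those three questions map onto code fairly directly. The sketch below is hypothetical from top to bottom (the `Tool` class, the tool names, and the simulated mail failure are all invented for illustration) and it leaves out the model that would decide which action to request. It only shows the guardrails: a list of what the system can touch, a flag for what needs sign-off, and an explicit path for failure.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Tool:
    name: str
    run: Callable[[str], str]
    needs_approval: bool  # Question 1: can it act without approval?

def run_action(tools, tool_name, argument, approved=False):
    """Run one requested action with access, approval, and failure checks."""
    tool = tools.get(tool_name)
    if tool is None:
        # Question 2: anything not registered is out of reach.
        return f"refused: no access to '{tool_name}'"
    if tool.needs_approval and not approved:
        return f"pending: '{tool_name}' needs human approval"
    try:
        return tool.run(argument)
    except Exception as exc:
        # Question 3: failures are reported, not hidden.
        return f"failed: {tool_name} raised {exc!r}"

def search_calendar(query):
    return f"found 2 free slots for {query}"

def send_email(body):
    # Simulated outage so the failure path is visible.
    raise ConnectionError("mail server unreachable")

TOOLS = {
    "search_calendar": Tool("search_calendar", search_calendar, False),
    "send_email": Tool("send_email", send_email, True),
}

print(run_action(TOOLS, "search_calendar", "Tuesday"))
print(run_action(TOOLS, "send_email", "Meeting moved"))
print(run_action(TOOLS, "send_email", "Meeting moved", approved=True))
print(run_action(TOOLS, "delete_files", "/"))
```

A vendor with a real agent should be able to describe its own version of each branch. If the failure branch does not exist, that tells you something.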

Tokens, context window, and why prompts break

Tokens are chunks of text a model processes. They are not exactly words. A single word can be one token or several, depending on the system.
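Here is a rough sketch of how one word can become several tokens. The six-entry vocabulary and the greedy longest-match rule are assumptions made for illustration. Real tokenizers learn vocabularies with tens of thousands of entries and use more involved algorithms, and each system splits text differently.

```python
# Hypothetical vocabulary of known word pieces.
VOCAB = {"un", "believ", "able", "cat", "s", "the"}

def tokenize(word, vocab=VOCAB):
    """Greedy longest-match split; unknown characters become single tokens."""
    tokens = []
    start = 0
    while start < len(word):
        # Try the longest possible piece first, then shrink.
        for end in range(len(word), start, -1):
            if word[start:end] in vocab:
                tokens.append(word[start:end])
                start = end
                break
        else:
            # No known piece starts here, so emit one character.
            tokens.append(word[start])
            start += 1
    return tokens

print(tokenize("the"))           # ['the']
print(tokenize("cats"))          # ['cat', 's']
print(tokenize("unbelievable"))  # ['un', 'believ', 'able']
```

One word, three tokens. This is also why token counts and word counts rarely match when a product bills or limits you by tokens.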

The context window is the amount of text, code, or other input, measured in tokens, that the model can handle at once. A larger context window lets you feed in longer documents or conversations. Still, bigger does not always mean better. Models can lose focus, miss details buried in long prompts, or weight recent text more heavily than earlier material.

This is why prompting can feel inconsistent. You are not talking to a person with steady comprehension. You are working with a statistical system that has limits on attention and memory inside each interaction.
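One common way those limits bite is simple truncation. The sketch below assumes a policy of keeping only the most recent tokens once the window is full. That is one possible approach, not a description of any specific product, and splitting on spaces stands in for a real tokenizer.

```python
def fit_to_window(tokens, max_tokens):
    """Keep only the most recent tokens that fit; older ones are dropped."""
    if max_tokens <= 0:
        return []
    return tokens[-max_tokens:]

# Hypothetical conversation, split on spaces as a stand-in for tokens.
conversation = "my name is Ada . please remember it . what is my name ?".split()

window = fit_to_window(conversation, max_tokens=6)
print(window)           # ['.', 'what', 'is', 'my', 'name', '?']
print("Ada" in window)  # False
```

The question made it into the window, but the answer did not. From the model's side, the name was never mentioned.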

What do bias, safety, and alignment mean?

Bias refers to skewed outputs tied to the data or design of a system. If training data reflects social bias, model outputs can echo it. That can affect hiring tools, moderation systems, lending products, and search results.

Safety usually covers efforts to reduce harmful outputs, such as instructions for violence, self-harm, fraud, or illegal activity. It can also include model behavior under stress, misuse risks, and cybersecurity exposure.

Alignment is the idea that a model should act in line with human goals or stated values. It sounds neat on paper. In reality, it is a moving target because humans do not agree on all goals or values, and product incentives often muddy the picture.

Any company claiming its AI is fully safe or fully aligned is selling certainty that the field does not have.

Open source, closed models, and what you should ask

Some AI models are open or partially open, which can mean public model weights, open research papers, or looser licensing. Others are closed, accessible only through an API or product interface.

This debate is often framed as ideology, but your real question is simpler. What do you need? Open models can offer control, customization, and lower long-term costs. Closed models may offer stronger performance, support, and managed security. The tradeoff looks a lot like architecture choices in software. You can buy the finished building, or you can own more of the blueprint and take on more of the maintenance burden.

How to use this AI terms guide in real life

If you read AI news, buy AI software, or manage a team being pitched AI tools, these definitions help you test what is real. They also help you spot fuzzy claims fast.

- Translate buzzwords into plain functions.

- Ask what data the system uses and how current it is.

- Check whether outputs are verified or merely generated.

- Separate chatbot fluency from task reliability.

- Push vendors to explain failure modes in concrete terms.

That habit will save you time, money, and a few embarrassing meetings.

Where the language goes next

AI vocabulary will keep shifting because the products are still shifting. Some terms will harden into clear technical meanings. Others will stay muddy because marketing departments like them that way. So keep the useful definitions, ditch the fluff, and keep asking the annoying question that actually matters. What does this system do when the stakes are real?