Arcee and the Rise of Open Source Enterprise LLMs

Your team needs models that respect data boundaries and budgets, and Arcee says its open source enterprise LLMs can handle that pressure. The pitch is simple: smaller, domain-tuned models that live inside your stack, not behind a mystery API. That matters now because compliance rules are tightening while GPU prices swing wildly. Do you really need a 70B model when a lean one fits? I’ve watched this space long enough to know hype rarely survives procurement reviews.

Why Arcee’s Approach Is Different

- Focus on open source enterprise LLMs built to run on midrange hardware, not hyperscale clusters.

- Model weights you can inspect, audit, and redeploy without waiting on a vendor queue.

- Training that favors domain data over web-scale noise, which should sharpen accuracy on internal tasks.

- Costs that track with your own infra, not someone else’s margin.

What Makes a Small Model Work

Arcee keeps parameter counts modest, then leans on smart data curation. Think of it like a chef reducing a sauce: less liquid, more flavor. You get latency wins and lower inference bills. Models shrink; expectations grow.

“Enterprise buyers want transparency, not another black box” is a line I hear, in one form or another, from nearly every CTO I interview these days.

The company also bakes in evaluation harnesses so teams can measure accuracy on their own corpora, not just on MMLU bragging rights.
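To make "measure accuracy on your own corpora" concrete, here is a minimal sketch of the idea in Python. It is not Arcee's harness, which this piece doesn't describe in detail. The `accuracy_on_corpus` function, the `toy_triage_model` stand-in, and the sample tickets are all hypothetical; in practice `predict` would wrap a call to whatever model you are testing.

```python
from typing import Callable, Iterable, Tuple


def accuracy_on_corpus(
    predict: Callable[[str], str],
    examples: Iterable[Tuple[str, str]],
) -> float:
    """Return the fraction of examples whose prediction matches the label.

    Matching is case-insensitive and ignores surrounding whitespace.
    """
    total = 0
    correct = 0
    for prompt, expected in examples:
        total += 1
        if predict(prompt).strip().lower() == expected.strip().lower():
            correct += 1
    if total == 0:
        raise ValueError("no examples to score")
    return correct / total


# Hypothetical stand-in for a call to a self-hosted model.
def toy_triage_model(ticket: str) -> str:
    return "billing" if "invoice" in ticket.lower() else "technical"


# Hypothetical labeled tickets drawn from your own help desk.
tickets = [
    ("Invoice shows the wrong amount", "billing"),
    ("App crashes on login", "technical"),
    ("Need a copy of last month's invoice", "billing"),
    ("Refund has not arrived", "billing"),
]

score = accuracy_on_corpus(toy_triage_model, tickets)
print(f"accuracy: {score:.2f}")
```

Exact-match scoring suits classification tasks like ticket triage. Summarization needs a different metric, but the pattern of scoring against your own labeled examples stays the same.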

How to Trial Arcee’s Stack

- Set a baseline: Run a reference task—document summarization or ticket triage—using your current model so you know what “good” looks like.

- Deploy locally: Spin up Arcee’s container image on a GPU you control. Keep data on your network to satisfy legal and security teams.

- Tune with guardrails: Feed a limited set of approved documents. Track drift with weekly spot checks.

- Compare total cost: Measure tokens processed per dollar. Include ops time, not just cloud spend.
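The last step is simple arithmetic, and a short Python sketch shows how ops time changes the result. The `CostProfile` class and every number below are made up for illustration, not measurements from any vendor or deployment.

```python
from dataclasses import dataclass


@dataclass
class CostProfile:
    name: str
    tokens_processed: int        # tokens handled during the trial window
    infra_cost_usd: float        # GPU, cloud, or API spend for that window
    ops_hours: float             # engineer time spent keeping it running
    ops_hourly_rate_usd: float   # loaded hourly cost of that time

    def total_cost_usd(self) -> float:
        """Infrastructure spend plus the cost of ops time."""
        return self.infra_cost_usd + self.ops_hours * self.ops_hourly_rate_usd

    def tokens_per_dollar(self) -> float:
        total = self.total_cost_usd()
        if total <= 0:
            raise ValueError("total cost must be positive")
        return self.tokens_processed / total


# Illustrative numbers only: same workload, different cost structure.
hosted_api = CostProfile("hosted API", 50_000_000, 1_000.0, 2.0, 100.0)
self_hosted = CostProfile("self-hosted", 50_000_000, 400.0, 10.0, 100.0)

for profile in (hosted_api, self_hosted):
    print(
        f"{profile.name}: ${profile.total_cost_usd():,.0f} total, "
        f"{profile.tokens_per_dollar():,.0f} tokens per dollar"
    )
```

With these invented figures the self-hosted option wins on infrastructure spend but loses once ops hours are counted. Your own numbers may point the other way, which is why the measurement matters.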

Where Open Source Enterprise LLMs Fit in Your Stack

Look at places where latency and privacy trump peak benchmark scores. Internal search, policy summarization, and code review snippets are prime targets. And if a commercial API is like renting a star striker for a match, a self-hosted model is your homegrown academy talent—cheaper, coachable, and loyal.

Risks to Watch

Lean models can overfit if you overload the tuning set with niche jargon. Guard against that with a held-out validation set and periodic re-tests. Licensing diligence matters too; check the model card and license terms before shipping. And remember, observability isn’t optional. Add tracing so you can see when response quality slips.
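A held-out set and a re-test check take only a few lines. The Python sketch below is one way to do it under simple assumptions; the function names, the 20 percent split, and the 0.02 tolerance are all illustrative choices, not recommendations from Arcee.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_held_out(
    items: Sequence[T],
    held_out_fraction: float = 0.2,
    seed: int = 0,
) -> Tuple[List[T], List[T]]:
    """Shuffle deterministically, then return (tuning_set, held_out_set)."""
    if not 0.0 < held_out_fraction < 1.0:
        raise ValueError("held_out_fraction must be between 0 and 1")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_held_out = max(1, int(len(shuffled) * held_out_fraction))
    return shuffled[n_held_out:], shuffled[:n_held_out]


def has_regressed(
    baseline_score: float,
    current_score: float,
    tolerance: float = 0.02,
) -> bool:
    """Flag a re-test whose score fell more than `tolerance` below baseline."""
    return current_score < baseline_score - tolerance


# Hypothetical document IDs standing in for your approved corpus.
docs = [f"doc-{i}" for i in range(10)]
tuning_set, held_out_set = split_held_out(docs)
print(len(tuning_set), len(held_out_set))

# Scores here are placeholders for results on the held-out set.
print(has_regressed(baseline_score=0.90, current_score=0.85))
print(has_regressed(baseline_score=0.90, current_score=0.89))
```

Fixing the seed keeps the held-out set stable across runs, so a score change reflects the model and not a reshuffled test set.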

Market Context and Arcee’s Edge

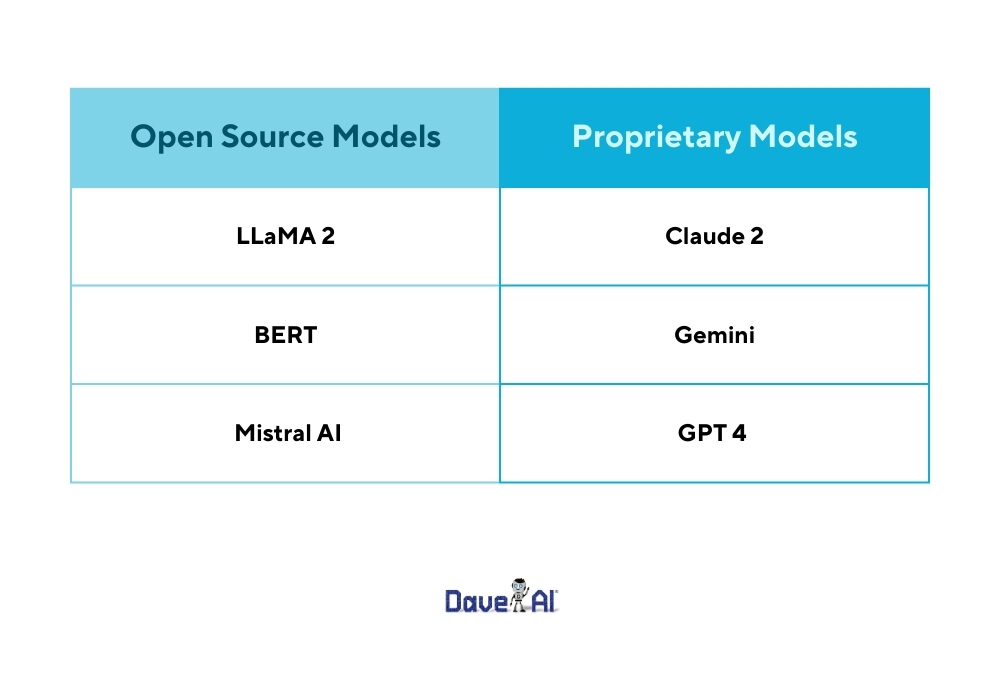

Open source incumbents like LLaMA derivatives and Mistral 7B still dominate mindshare, but Arcee targets enterprises that want control without wrangling giant checkpoints. The company’s bet is that curated data plus smaller footprints beat raw scale for most business workflows. I’m inclined to agree until someone proves otherwise.

Where Arcee Goes Next

Expect more task-specific releases and tighter evaluation tools. If Arcee can keep shipping models that run on everyday hardware while staying transparent about training data, it will earn a spot on the enterprise shortlist. Ready to test whether a compact model can replace your pricey API calls?