Building Public Trust with Practical AI Skills

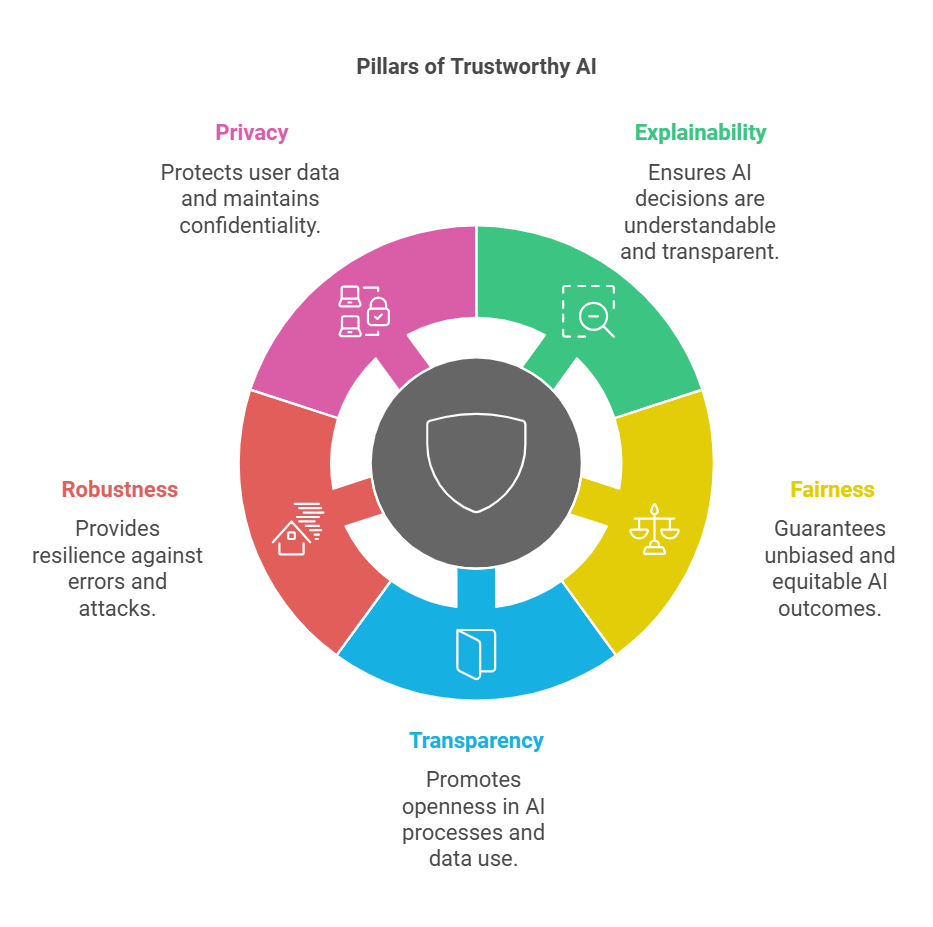

You want AI to help without turning into a black box, and that tension is rising now that systems sit inside hiring, health, and finance. The fix starts with trustworthy AI skills, not slogans. People need plain-language guides, hands-on practice, and real oversight so they can question outputs and spot bias. Governments and companies are scrambling to set rules, but rules only work when users know how to apply them. That makes trustworthy AI skills a non-negotiable priority today.

Why This Matters Right Now

- Hands-on literacy lets workers challenge flawed AI decisions instead of rubber-stamping them.

- Clear accountability policies reduce legal and reputational risk.

- Shared checklists keep teams aligned on privacy, bias, and security.

- Practical training builds public confidence faster than abstract ethics talk.

Trustworthy AI Skills in Practice

Trust grows when people can see how models behave, not just when they hear reassurances. Think of it like cooking with a new spice: you taste, adjust, and log what works before serving it to guests. A basic playbook should include model cards, data provenance notes, and risk registers that capture who approved each release. Add periodic red-team exercises to surface bad prompts and harmful edge cases.

Transparency is not a press release. It is a repeatable process with artifacts anyone on the team can inspect.

Hands-On Tactics

- Create short labs where staff compare AI outputs to human baselines and record mismatches.

- Rotate reviewers so the same eyes do not miss the same blind spots.

- Publish plain-language user guides that explain what data feeds the model and what it should never be used for.

And yes, keep a feedback loop open for users who find errors. Without that, you are flying without instruments.
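The comparison lab from the list above can be as small as a script. Here is a minimal sketch, assuming each case stores an AI output and a human baseline as short text labels. `LabCase`, `find_mismatches`, `mismatch_rate`, and the loan IDs are hypothetical names made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class LabCase:
    """One lab exercise: what the AI said versus what a human reviewer decided."""
    case_id: str
    ai_output: str
    human_baseline: str


def find_mismatches(cases):
    """Return the cases where the AI label differs from the human label.

    Labels are compared case-insensitively after trimming whitespace.
    """
    return [
        c for c in cases
        if c.ai_output.strip().lower() != c.human_baseline.strip().lower()
    ]


def mismatch_rate(cases):
    """Share of cases where AI and human disagree (0.0 for an empty lab)."""
    if not cases:
        return 0.0
    return len(find_mismatches(cases)) / len(cases)


# Hypothetical lab data for illustration only.
cases = [
    LabCase("loan-001", "approve", "approve"),
    LabCase("loan-002", "deny", "approve"),
    LabCase("loan-003", "Deny", "deny"),
    LabCase("loan-004", "approve", "deny"),
]

mismatches = find_mismatches(cases)
rate = mismatch_rate(cases)
print([c.case_id for c in mismatches])  # ['loan-002', 'loan-004']
print(rate)  # 0.5
```

The mismatch list is the useful part. Reviewers can walk through each disagreement and note whether the model or the human was wrong.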

Governance That Users Can Actually Follow

Policies sink if they read like legal scrolls. Trim them into checklists tied to product stages: data intake, training, deployment, and monitoring. Include clear escalation paths when an AI output looks unsafe. Why risk confusion when a simple contact chart will do?

Add scenario drills. Ask teams to walk through a failure mode, the way sports teams run set plays. It turns abstract rules into muscle memory. Pair this with metrics that matter: false positive rates in lending, demographic parity checks, incident response times.
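Two of those metrics fit in a few lines of code. The sketch below assumes binary labels where 1 means "flagged" (for example, predicted to default) and one group label per record. The function names and sample data are hypothetical, and real audits would likely lean on a vetted fairness library rather than hand-rolled helpers.

```python
def false_positive_rate(y_true, y_pred):
    """FP / (FP + TN): the share of actual negatives that were flagged."""
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return fp / (fp + tn) if (fp + tn) else 0.0


def demographic_parity_difference(y_pred, groups):
    """Largest gap in positive-prediction rate between any two groups."""
    totals, positives = {}, {}
    for p, g in zip(y_pred, groups):
        totals[g] = totals.get(g, 0) + 1
        positives[g] = positives.get(g, 0) + p
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


# Hypothetical lending sample: 1 = flagged as likely default.
y_true = [0, 0, 0, 0, 1, 1, 0, 0]
y_pred = [0, 1, 0, 0, 1, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

fpr = false_positive_rate(y_true, y_pred)            # 2 of 6 actual negatives flagged
dpd = demographic_parity_difference(y_pred, groups)  # 0.50 for B minus 0.25 for A
print(fpr, dpd)
```

A parity difference of zero means every group is flagged at the same rate. What gap counts as acceptable is a policy decision, not something the code can settle.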

Trustworthy AI Skills as Training Tracks

Label training tracks with the skill and the audience so stakeholders know the goal: trustworthy AI skills for analysts, trustworthy AI skills for managers. The repetition keeps the focus on capability building, not hype. It also signals to auditors that you treat trust as a skill set, not a slogan.

Public Oversight and External Signals

Citizens want proof, not promises. Publish evaluation dashboards that break down performance by protected attribute. Invite civil society groups to stress test models, and publish the results with remediation dates. But will you act when a finding hurts quarterly goals?

Oversight should run like a neighborhood watch, not secret police. Everyone sees the patrol routes, and everyone knows how to report a broken light. That visibility nudges teams to keep standards high.

Training That Sticks

Skip marathon slide decks. Run 30-minute sprints where people label data, measure drift, and write down what surprised them. Boredom kills retention, so mix formats (videos, quizzes, live demos) to keep attention. Tie completion to tangible rewards like access to more powerful tools or time saved on routine audits.

And keep language plain. No jargon walls. If a frontline worker cannot repeat the policy to a peer, rewrite it.
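For the drift part of a sprint, one option people can compute by hand and then in code is the Population Stability Index (PSI). The article does not prescribe a drift metric, so treat this as one possible sketch. The function name `psi`, the bin edges, and the sample scores are all assumptions for illustration.

```python
import math


def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between a baseline and a current sample.

    PSI = sum over bins of (a - e) * ln(a / e), where e and a are the share
    of baseline and current values in each bin. Values outside the edges
    are ignored; empty bins are floored at eps to avoid log(0).
    """
    def shares(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            for i in range(len(edges) - 1):
                is_last = i == len(edges) - 2
                # Bins are [low, high), except the last one, which includes its upper edge.
                if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                    counts[i] += 1
                    break
        total = sum(counts)
        if total == 0:
            raise ValueError("no values fall inside the bin edges")
        return [max(c / total, eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical model scores: last quarter versus this week.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
current = [0.6, 0.7, 0.8, 0.9, 0.9, 0.95, 0.5, 0.55]

print(psi(baseline, baseline, edges))  # 0.0, identical distributions
print(psi(baseline, current, edges))   # well above zero, scores shifted upward
```

A PSI of zero means the two samples fill the bins identically, and larger values mean a bigger shift. Each team should agree on its own alert threshold before the sprint, not after.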

Measuring Trust Without Guesswork

- Track adoption of checklists and labs across teams each quarter.

- Monitor user-reported issues and the average time to resolution.

- Audit data sources for consent and relevance before each retrain.

- Score models on clarity: can you explain the top features in under a minute?

These numbers turn trust into something you can manage instead of admire from afar.

Risks and How to Defuse Them

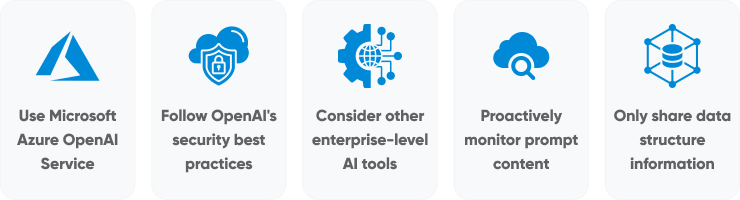

Biased data still slips in. Mitigate with diverse review panels and synthetic counterfactual testing. Security breaches loom; isolate training pipelines and log every access. Shadow deployments reduce blast radius when new models misbehave. And if leadership waves off concerns, document the risk and escalate to compliance. Silence helps no one.
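Synthetic counterfactual testing sounds heavier than it is: copy a record, change only the protected attribute, and see whether the decision changes. Below is a minimal sketch under stated assumptions. The model is any callable that takes a dict and returns a label, and `counterfactual_flips`, `toy_model`, and the field names are hypothetical. The toy model is deliberately biased so the test has something to catch.

```python
def counterfactual_flips(model, records, attribute, alternatives):
    """Return (original, variant) pairs where changing only `attribute`
    changes the model's decision."""
    flagged = []
    for record in records:
        original_decision = model(record)
        for value in alternatives:
            if value == record[attribute]:
                continue  # skip the value the record already has
            variant = {**record, attribute: value}
            if model(variant) != original_decision:
                flagged.append((record, variant))
    return flagged


def toy_model(record):
    """Deliberately biased stand-in: penalizes group 'B' regardless of income."""
    if record["income"] >= 50000 and record["group"] != "B":
        return "approve"
    return "deny"


# Hypothetical applicants for illustration only.
records = [
    {"income": 60000, "group": "B"},  # denied only because of group
    {"income": 30000, "group": "A"},  # denied on income either way
]

flips = counterfactual_flips(toy_model, records, "group", ["A", "B"])
print(len(flips))  # 1
```

A flagged pair is not proof of unlawful bias, but it is a concrete case to put in front of the review panel.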

Where This Is Heading

Regulators are sharpening penalties, and workers are getting louder about fairness. The winners will be teams that bake trustworthy AI skills into daily routines, not annual workshops. Ready to treat trust like a product feature instead of a press quote?