Large Genome Model: Open Weights for Trillions of Bases

Labs keep hitting the same wall: translating raw sequencing data into answers without burning budgets or months. An open Large Genome Model trained on trillions of bases promises to compress that slog into minutes by spotting patterns most teams miss. The model’s weights are public, so you can run it locally instead of renting cloud GPUs at premium rates. That matters now as variant volumes surge and regulatory timelines tighten. Early results show faster motif discovery, better guide RNA scoring, and smoother annotation pipelines. The question is whether open access keeps pace with safety and accuracy standards.

Fast Facts

- Weights and code released under a permissive license, inviting modification and forks.

- Training corpus spans human, model organism, and viral genomes to reduce overfitting to any single species.

- Benchmarks show gains on variant effect prediction and promoter strength ranking.

- Requires a single modern GPU for inference, so small labs can participate.

Large Genome Model in the Lab

You want throughput, not another black box. This release aims to shorten the typical variant triage cycle by summarizing likely functional hits before wet-lab validation. Think of it as a seasoned chess coach who flags bad openings before you play them. The published model card lists context lengths long enough to capture regulatory neighborhoods, which helps with enhancers and splice sites. Open weights change who gets to innovate.

Look, I have sat through enough closed demos to know open models are the only way smaller teams stay in the game.

Setting Up: A Strategic Guide

- Pull the repository and verify checksums on model weights. Do not skip this step when patient data may sit nearby.

- Run the provided inference script on a public dataset first. Confirm output matches the reference log.

- Profile VRAM use with your own FASTA batches. Batch size tuning often saves more time than fancy prompts.

- Layer domain rules. For CRISPR, combine model scores with off-target filters rather than trusting a single score.

- Track drift. Re-run benchmarks monthly as you add new genomes so you catch shifts early.
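Most of the setup steps above are plumbing, so here is a minimal, standard-library-only Python sketch of four of them: streaming SHA-256 verification of a weight file, a tolerance check against a reference run, greedy batching of FASTA records under a base-count budget, and a monthly drift flag. Every function name, the `1e-4` tolerance, the base budget, and the `0.02` drift threshold are illustrative assumptions rather than anything the release defines; substitute the checksum the maintainers publish and the metric your benchmark actually reports. Base count is only a rough proxy for VRAM, so confirm any budget against real memory profiling.

```python
import hashlib
import math
from pathlib import Path
from typing import Iterable, Iterator


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream a large weight file through SHA-256 in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_weights(path: Path, expected_hex: str) -> None:
    """Raise before loading if the checkpoint does not match the published hash."""
    actual = sha256_of(path)
    if actual != expected_hex.strip().lower():
        raise ValueError(f"checksum mismatch for {path}: got {actual}")


def matches_reference(scores: dict[str, float], reference: dict[str, float],
                      tol: float = 1e-4) -> bool:
    """True if both runs cover the same IDs and every score is within tol."""
    if scores.keys() != reference.keys():
        return False
    return all(math.isclose(scores[k], reference[k], abs_tol=tol) for k in scores)


def read_fasta(lines: Iterable[str]) -> Iterator[tuple[str, str]]:
    """Minimal FASTA reader yielding (record_id, uppercase_sequence)."""
    name, parts = None, []
    for raw in lines:
        line = raw.strip()
        if not line:
            continue
        if line.startswith(">"):
            if name is not None:
                yield name, "".join(parts)
            header = line[1:].split()
            name, parts = (header[0] if header else ""), []
        else:
            parts.append(line.upper())
    if name is not None:
        yield name, "".join(parts)


def batch_by_bases(records: Iterable[tuple[str, str]],
                   max_bases: int) -> Iterator[list[tuple[str, str]]]:
    """Greedy batches whose total length stays within max_bases (a VRAM proxy).
    A single record longer than max_bases still becomes its own batch."""
    batch, total = [], 0
    for rec_id, seq in records:
        if batch and total + len(seq) > max_bases:
            yield batch
            batch, total = [], 0
        batch.append((rec_id, seq))
        total += len(seq)
    if batch:
        yield batch


def drifted(current: float, baseline: float, max_drop: float = 0.02) -> bool:
    """Flag a benchmark rerun that falls more than max_drop below its baseline."""
    return baseline - current > max_drop
```

Lower `max_bases` until your profiler shows headroom on the largest batch. Because an oversized record still gets a batch of its own, very long loci need chunking before they reach this step.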

Main Uses for the Large Genome Model

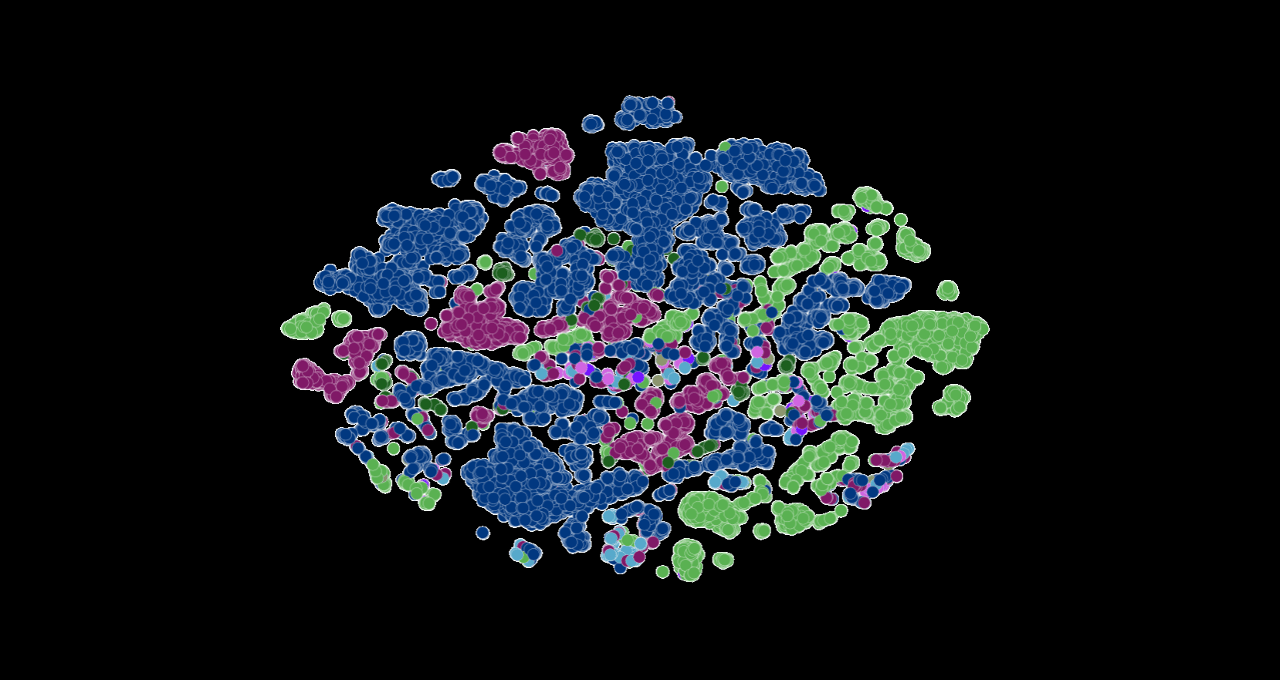

Where does this model earn its keep? Start with variant effect prediction on rare disease cohorts. Add promoter strength ranking to prioritize constructs before cloning. Use its embedding space for clustering viral lineages during outbreaks. For synthetic biology, guide RNA scoring speeds up design-build-test cycles. And because the weights are open, regional hospitals can deploy it on-prem to keep patient genomes off third-party clouds. Do you want to rely on closed APIs for clinical genetics?
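For guide RNA design, the setup advice to layer model scores on top of off-target filters can be sketched as a hard filter followed by a ranking. The `Guide` fields, the `0.5` score floor, and the assumption that off-target counts come from a separate search tool are all hypothetical; the release does not define this interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guide:
    spacer: str           # protospacer sequence, 5'->3'
    model_score: float    # on-target score from the genome model (assumed 0..1)
    offtarget_hits: int   # predicted off-target sites from a separate search tool


def rank_guides(guides: list[Guide], min_score: float = 0.5,
                max_offtargets: int = 0, top_k: int = 3) -> list[Guide]:
    """Apply the off-target filter first, then rank the survivors by model score."""
    eligible = [
        g for g in guides
        if g.offtarget_hits <= max_offtargets and g.model_score >= min_score
    ]
    # Highest model score first; equal scores fall back to fewer off-target hits.
    eligible.sort(key=lambda g: (-g.model_score, g.offtarget_hits))
    return eligible[:top_k]
```

Filtering before ranking means a high model score can never rescue a guide with too many predicted off-target sites, which is the point of not trusting a single score.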


Trust, Safety, and Policy

Open access cuts costs, yet it also means anyone can fine-tune on sensitive data. Keep audit logs when running clinical workloads. Encrypt local checkpoints. Some regulators will ask how you validated predictions, so keep a small, well-labeled test set aside as a canary. If you publish downstream findings, cite the model card and version. Accuracy claims hinge on transparent lineage.
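Audit logs and a canary set take little code to wire up. The sketch below, with hypothetical function names and thresholds, writes one JSON line per prediction that records the model version and checkpoint hash while storing only a SHA-256 of the input sequence, and scores a held-out labeled canary set against a minimum accuracy. It does not cover checkpoint encryption.

```python
import hashlib
import json
import time
from typing import Callable, Sequence


def audit_record(model_version: str, checkpoint_sha256: str, sample_id: str,
                 input_seq: str, prediction: float) -> str:
    """Return one JSON log line; the input is stored as a hash, not as sequence."""
    return json.dumps({
        "timestamp": time.time(),
        "model_version": model_version,
        "checkpoint_sha256": checkpoint_sha256,
        "sample_id": sample_id,
        "input_sha256": hashlib.sha256(input_seq.encode("ascii")).hexdigest(),
        "prediction": prediction,
    }, sort_keys=True)


def canary_check(predict: Callable[[str], float],
                 canary: Sequence[tuple[str, bool]],
                 threshold: float = 0.5,
                 min_accuracy: float = 0.9) -> tuple[bool, float]:
    """Score the held-out labeled canary set; return (passed, accuracy)."""
    correct = sum((predict(seq) >= threshold) == label for seq, label in canary)
    accuracy = correct / len(canary)
    return accuracy >= min_accuracy, accuracy
```

Logging the version and checkpoint hash alongside each prediction is what lets you cite the exact model lineage later.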

Performance Claims and Caveats

The developers report higher AUROC on splice-site prediction than earlier genome transformers. Still, benchmark winners can stumble on edge cases such as repetitive regions or rare structural variants. Treat confidence scores as triage signals, not verdicts. If your pipeline already uses Ensembl VEP or DeepSEA, A/B test a swap-in rather than rewriting everything. And watch memory as well as latency when stacking tasks, because context windows that cover entire gene clusters can push past GPU memory limits.
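An A/B test of a swap-in comes down to scoring both tools on the same labeled variants. Below, AUROC is computed from the Mann-Whitney U statistic with averaged ranks for ties, so no ML library is needed. `incumbent_scores` stands for whatever numeric per-variant score your current pipeline emits and `candidate_scores` for the genome model's output; both are placeholders, not fields defined by VEP, DeepSEA, or this release.

```python
from typing import Sequence


def auroc(scores: Sequence[float], labels: Sequence[bool]) -> float:
    """AUROC via the Mann-Whitney U statistic, with average ranks for tied scores."""
    pairs = sorted(zip(scores, labels), key=lambda p: p[0])
    n = len(pairs)
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and pairs[j + 1][0] == pairs[i][0]:
            j += 1
        for k in range(i, j + 1):
            ranks[k] = (i + j) / 2 + 1  # 1-based mean rank of the tie group
        i = j + 1
    n_pos = sum(1 for _, y in pairs if y)
    n_neg = n - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("AUROC needs at least one positive and one negative label")
    pos_rank_sum = sum(r for r, (_, y) in zip(ranks, pairs) if y)
    u = pos_rank_sum - n_pos * (n_pos + 1) / 2
    return u / (n_pos * n_neg)


def ab_compare(labels: Sequence[bool], incumbent_scores: Sequence[float],
               candidate_scores: Sequence[float]) -> dict[str, float]:
    """Score both tools on the same labeled variants before swapping anything."""
    return {
        "incumbent": auroc(incumbent_scores, labels),
        "candidate": auroc(candidate_scores, labels),
    }
```

Run the comparison separately on repetitive regions and structural variants as well as on the full set, since an aggregate AUROC can hide exactly the edge cases where benchmark winners stumble.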

Analogy: Bio Model as Kitchen Prep

Running this model feels like prepping ingredients before a dinner rush. Slice, sort, and label your FASTA files, and the cooking line (your downstream tools) runs more smoothly. Skip the prep, and you chase fires all night.

Future Directions

Expect rapid forks tuned for plant breeding and microbial genomics as open contributors add species-specific corpora. I want to see more rigorous uncertainty estimates and calibration curves baked into outputs, not just point predictions. The community will also push for interpretable attention maps so regulators can audit why the model flags a particular intron as risky. Will foundation genome models become as standard as BWA or GATK? The next year will answer that.
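A calibration curve is straightforward to compute once a model emits probabilities. This sketch sorts predictions into equal-width bins, compares mean confidence with the observed positive rate in each bin, and summarizes the gap as expected calibration error (ECE). The ten-bin default and the function names are assumptions for illustration, the code assumes probabilities in [0, 1], and nothing here reflects how the released model reports uncertainty.

```python
from typing import Sequence


def calibration_curve(probs: Sequence[float], labels: Sequence[int],
                      n_bins: int = 10) -> list[tuple[float, float, int]]:
    """Equal-width bins over [0, 1]: (mean confidence, observed positive rate, count)."""
    bins: list[list[tuple[float, int]]] = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 lands in the top bin
        bins[idx].append((p, y))
    curve = []
    for b in bins:
        if b:  # empty bins are skipped rather than reported as zero
            mean_conf = sum(p for p, _ in b) / len(b)
            pos_rate = sum(y for _, y in b) / len(b)
            curve.append((mean_conf, pos_rate, len(b)))
    return curve


def expected_calibration_error(probs: Sequence[float], labels: Sequence[int],
                               n_bins: int = 10) -> float:
    """Count-weighted mean |confidence - observed rate| across non-empty bins."""
    curve = calibration_curve(probs, labels, n_bins)
    if not curve:
        raise ValueError("no predictions to calibrate")
    total = sum(count for _, _, count in curve)
    return sum(count / total * abs(conf - rate) for conf, rate, count in curve)
```

A well-calibrated model keeps each bin's mean confidence close to its observed rate, so an ECE near zero means a reported 0.9 can be read roughly as "right about nine times in ten".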