How LLM pseudonym deanonymization is reshaping online privacy

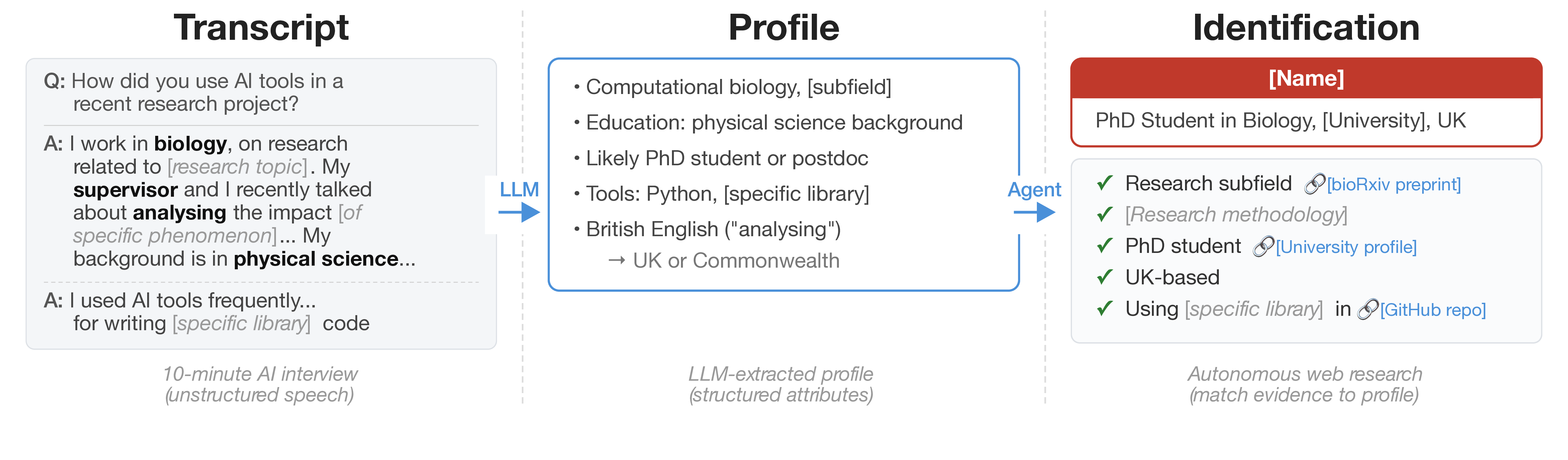

Your alias used to feel like a shield, but new research suggests large language models (LLMs) can pierce it. LLM pseudonym deanonymization links writing style, metadata, and cross-platform crumbs to real identities with unsettling accuracy. This matters because your forum posts, bug reports, and casual comments might map back to you even without names or emails.

Why this matters right now

- Models can match pseudonymous text to known authors across sites at scale.

- Style fingerprints often stay stable even when you try to write differently.

- Cross-referencing public leaks makes re-identification cheaper for attackers.

- Privacy laws lag behind rapid model deployment.

Silence is not protection.

LLM pseudonym deanonymization risks

How safe is your alias when models read your posts? Research teams trained LLMs on open forums, code commits, and social feeds, then asked them to guess who wrote unseen snippets. Accuracy climbed because the models learned subtle quirks: comma placement, word choice, even how you indent code. It feels like a chess match where the engine knows your favorite openings and traps you early.

LLMs do not need your name to know it is you; they infer identity from repetition and small stylistic tells.

Attackers can layer in public breach data to validate guesses. A scraped email from an old dump, combined with a consistent writing rhythm, becomes a near-certain match. The cost stays low because inference runs quickly on cloud GPUs, which makes bulk targeting feasible.

Signals these systems exploit

- Stylometry: Character n-grams, punctuation habits, and cadence become a signature, even when you try to mask it.

- Context overlap: Topics, references to locations, or niche jargon narrow the pool fast.

- Timing patterns: Posting windows map to time zones and routines (late-night replies give clues).

- Cross-platform linking: Shared phrases in code comments and blog posts act like matching fingerprints.

Think of it like a seasoned scout in basketball watching your footwork; even when you change jerseys, the moves give you away.
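To make the stylometry signal concrete, here is a minimal Python sketch. It is not the method used in any particular study. It assumes character trigrams compared by cosine similarity, and the function names `char_ngrams` and `cosine_similarity` and the sample texts are invented for illustration. Real attribution systems rely on far richer features.

```python
from collections import Counter
import math


def char_ngrams(text: str, n: int = 3) -> Counter:
    """Count overlapping character n-grams after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return Counter(normalized[i:i + n] for i in range(len(normalized) - n + 1))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two n-gram count vectors (0.0 if either is empty)."""
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical snippets: one known post and two candidates from another site.
known = "Honestly, I think the patch is fine; however, the tests are flaky..."
candidate_same = "Honestly, I think the docs are fine; however, the build is flaky..."
candidate_other = "lol no way that works!! did u even run it??"

known_profile = char_ngrams(known)
print("same-style score: ", cosine_similarity(known_profile, char_ngrams(candidate_same)))
print("other-style score:", cosine_similarity(known_profile, char_ngrams(candidate_other)))
```

The candidate that shares punctuation habits and phrasing with the known post scores higher, which is the "moves give you away" effect in miniature.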

Mitigating LLM pseudonym deanonymization

You cannot erase every signal, but you can blunt them. Rotate writing styles intentionally: change sentence length, swap punctuation habits, and vary vocabulary. Use separate accounts per context, and never cross-link emails or recovery numbers. When possible, strip metadata (such as EXIF data in images or author fields in documents) before posting. Some communities allow privacy-focused relay tools that proxy content and randomize headers.

- Adopt style transformers that rewrite text to neutralize personal stylometry before publishing.

- Separate identities across devices and browsers to avoid cookie and fingerprint correlation.

- Prefer end-to-end encrypted forums where logs are limited and scraping is harder.

- Watch for platform-level LLM features that summarize or recommend content; those pipelines often store embeddings that can be abused.
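As a rough illustration of neutralizing punctuation habits before publishing, the Python sketch below flattens a few surface tells. It is far simpler than a real style transformer: the rule list and the name `flatten_punctuation` are assumptions made for this example, and it leaves vocabulary and sentence structure untouched, so it should not be expected to defeat attribution on its own.

```python
import re

# Hypothetical rule set: which habits to flatten is an assumption, not a standard.
_RULES = [
    (re.compile(r"[\u2018\u2019]"), "'"),                  # curly single quotes -> straight
    (re.compile(r"[\u201c\u201d]"), '"'),                  # curly double quotes -> straight
    (re.compile(r"\u2026|\.{3,}"), "."),                   # ellipses -> single period
    (re.compile(r"([!?])[!?]+"), r"\1"),                   # "!!!" or "?!" -> first mark only
    (re.compile(r"\s*[\u2014\u2013]\s*|\s+-\s+"), ", "),   # spaced or long dashes -> comma
    (re.compile(r"[ \t]+"), " "),                          # runs of spaces or tabs -> one space
]


def flatten_punctuation(text: str) -> str:
    """Apply each rule in order and trim surrounding whitespace."""
    for pattern, replacement in _RULES:
        text = pattern.sub(replacement, text)
    return text.strip()


print(flatten_punctuation("Wait... really?!  That's wild!!!"))
# prints: Wait. really? That's wild!
```

Hyphens inside words such as "end-to-end" are left alone because the dash rule only matches a hyphen with whitespace on both sides.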

Policy helps too. Push platforms to disclose whether they run author-attribution models. Advocate for opt-out controls on training data that include your posts. And log your own activity so you notice unintended overlaps.
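One way to act on that last point is a small self-audit that looks for phrases you reuse across identities. The Python sketch below assumes you have exported your own posts as plain strings. The function names and the four-word window are illustrative choices, not an established tool.

```python
import re


def word_ngrams(text: str, n: int = 4) -> set[tuple[str, ...]]:
    """Return the set of n-word sequences in the text, lowercased, punctuation dropped."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def shared_phrases(posts_a: list[str], posts_b: list[str], n: int = 4) -> list[str]:
    """List n-word phrases that appear in both collections of posts, sorted alphabetically."""
    grams_a = set().union(*(word_ngrams(post, n) for post in posts_a))
    grams_b = set().union(*(word_ngrams(post, n) for post in posts_b))
    return sorted(" ".join(gram) for gram in grams_a & grams_b)


# Hypothetical posts from two accounts that are meant to stay unlinked.
work_account = ["For what it's worth, the cache layer is the bottleneck here."]
personal_account = ["for what it's worth I prefer the older keyboard layout"]

for phrase in shared_phrases(work_account, personal_account):
    print("reused phrase:", phrase)
```

Any phrase this reports is a candidate tell worth rewording in one of the two contexts.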

What organizations should do

Security teams need playbooks, not platitudes. Run red-team tests using open-source attribution models against internal comms to measure exposure. If accuracy is high, prioritize writer-style anonymization tools in publishing workflows. Train staff on operational security: no cross-posting internal phrasing to public sites, no reuse of personal handles. Treat stylistic data as being as sensitive as IP addresses.
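A red-team measurement of this kind can be prototyped before adopting a full attribution model. The Python sketch below is a stand-in under stated assumptions: it uses character-trigram profiles and a leave-one-out test rather than an LLM, and the names `ngram_profile`, `attribute`, and `leave_one_out_accuracy`, along with the sample authors, are hypothetical.

```python
from collections import Counter
import math


def ngram_profile(text: str, n: int = 3) -> Counter:
    """Character n-gram counts after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return Counter(normalized[i:i + n] for i in range(len(normalized) - n + 1))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two count vectors (0.0 if either is empty)."""
    dot = sum(count * b[gram] for gram, count in a.items())
    norms = math.sqrt(sum(c * c for c in a.values())) * math.sqrt(sum(c * c for c in b.values()))
    return dot / norms if norms else 0.0


def attribute(snippet: str, profiles: dict[str, Counter]) -> str:
    """Guess the author whose profile is most similar to the snippet."""
    snippet_profile = ngram_profile(snippet)
    return max(profiles, key=lambda name: cosine(snippet_profile, profiles[name]))


def leave_one_out_accuracy(samples: dict[str, list[str]]) -> float:
    """Hold out each text in turn, rebuild profiles from the rest, and score the guess."""
    correct = total = 0
    for author, texts in samples.items():
        for held_out_index, held_out in enumerate(texts):
            profiles = {}
            for name, candidate_texts in samples.items():
                training = [t for i, t in enumerate(candidate_texts)
                            if (name, i) != (author, held_out_index)]
                if training:
                    profiles[name] = sum((ngram_profile(t) for t in training), Counter())
            if author not in profiles:
                continue  # an author with a single text cannot be tested this way
            total += 1
            correct += attribute(held_out, profiles) == author
    return correct / total if total else 0.0


# Hypothetical internal messages grouped by (pseudonymous) author.
samples = {
    "alice": ["Per my last note, the rollout is blocked; please advise.",
              "Per the ticket, the deploy is blocked; please confirm."],
    "bob": ["yeah looks good to me, ship it whenever",
            "yeah fine by me, merge it whenever you want"],
}
print("attribution accuracy:", leave_one_out_accuracy(samples))
```

If even a crude baseline like this attributes held-out messages well above chance, a stronger model will likely do better, which is a reasonable trigger for the anonymization steps described above.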

Legal and compliance teams should map where employee content is processed by third-party LLM features. Contract language should ban identity inference on corporate submissions. This is less about paranoia and more about hygiene.

Where this heads next

Look, the models will only get sharper as context windows grow and training sets expand. Expect regulators to notice once political speech and whistleblowing cases hit the courts. Until then, your best defense is reducing the breadcrumbs you leave and challenging the tools that collect them. Will platforms let you stay anonymous, or will they sell your style back to the highest bidder?