Mira Murati on Human-in-the-Loop AI

Plenty of AI companies want you to believe models can reason, plan, and act with minimal supervision. That pitch sounds efficient. It also raises an obvious question. Who is accountable when the model gets something wrong? Wired’s reporting on human-in-the-loop AI and Mira Murati lands right on that pressure point. The debate matters now because companies are pushing AI into customer support, coding, research, and internal operations faster than their controls are maturing. If you buy the hype too quickly, you risk handing messy judgment calls to systems that still hallucinate, misread context, and fail in odd edge cases. Murati’s framing is more grounded than the usual sales talk. The real issue is not whether AI can look smart. It is whether you should trust it without a person close enough to catch the miss.

What matters here

- Human-in-the-loop AI is about oversight, not nostalgia for manual work.

- Mira Murati’s view pushes back on the fantasy of fully autonomous models.

- The strongest use cases pair machine speed with human judgment.

- Businesses need clear escalation rules before they deploy agentic systems.

Why human-in-the-loop AI keeps coming up

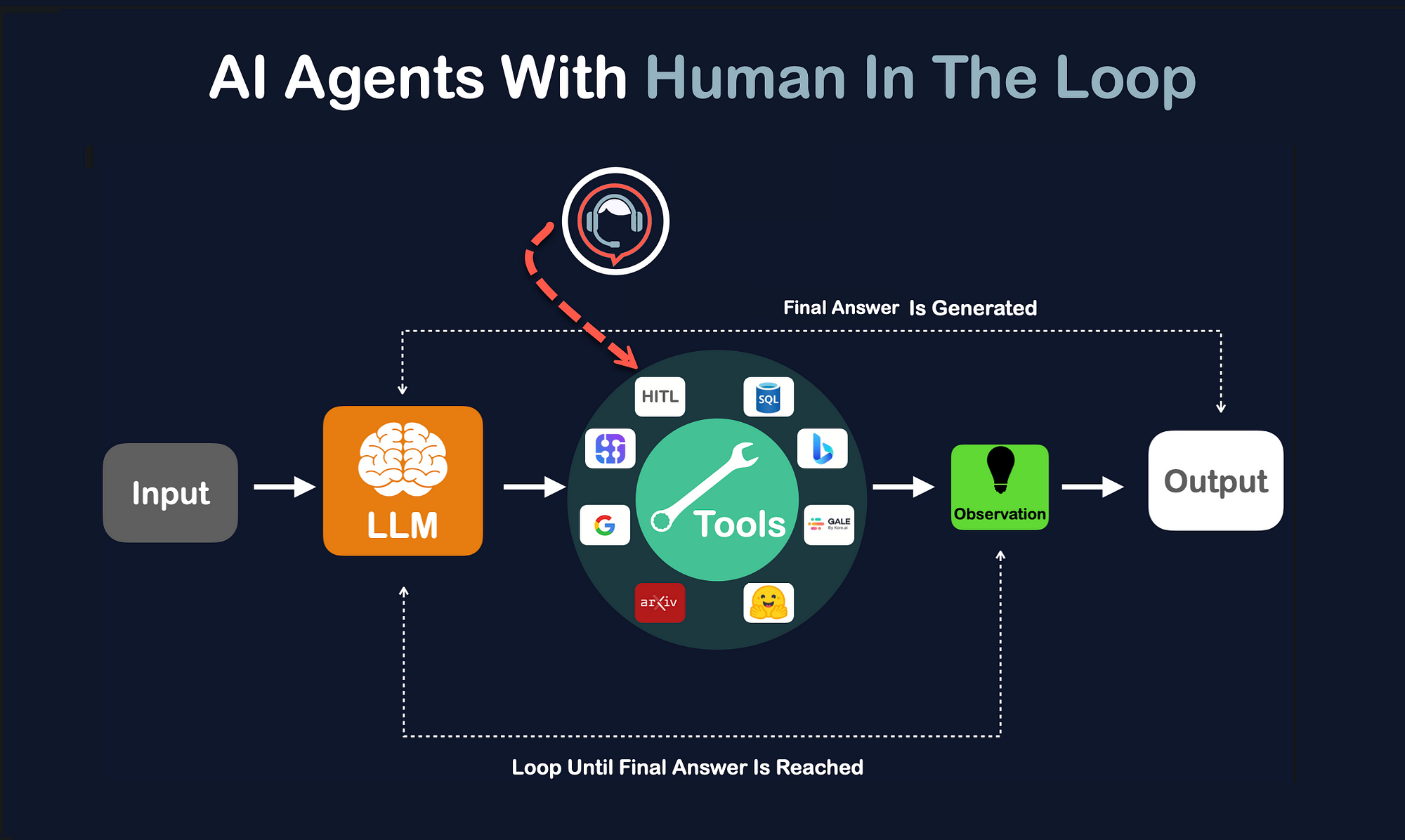

The term sounds technical, but the idea is simple. A model does part of the work, and a person reviews, approves, corrects, or redirects it before the output triggers a real-world action.

That can mean a doctor reviewing an AI summary, a lawyer checking extracted clauses, or a support manager approving a refund recommendation. Different setting, same pattern. The machine is fast. The human owns the final call.
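For readers who think in code, here is a minimal sketch of that pattern. It is only an illustration: `Proposal`, `execute_if_approved`, and the `reviewer` and `act` callbacks are hypothetical names, not anything from Wired's reporting or a real product. The point it shows is that the model's output is only a proposal, and nothing runs until a person says yes.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Proposal:
    """A model's suggested action, held until a person decides (hypothetical type)."""
    action: str                      # e.g. "refund"
    details: str                     # what the reviewer gets to see
    approved: Optional[bool] = None  # None means "not yet reviewed"


def execute_if_approved(
    proposal: Proposal,
    reviewer: Callable[[Proposal], bool],
    act: Callable[[Proposal], str],
) -> str:
    """Run the real-world action only after the human reviewer approves it."""
    proposal.approved = reviewer(proposal)
    if not proposal.approved:
        return "rejected"
    return act(proposal)
```

In a real workflow the `reviewer` callback would be a queue or an approval screen rather than a function call, but the ordering is the part that matters: review first, action second.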

AI looks most useful when it acts like a sharp assistant, not an unchecked executive.

Look, this is not a minor workflow preference. It is the fault line between AI as a productivity tool and AI as an accountability trap.

What Mira Murati seems to get about human-in-the-loop AI

Murati has long been associated with the argument that advanced AI needs stronger alignment with human goals and feedback. Wired’s piece adds more texture to that stance. The notable part is not the broad claim that humans matter. Everyone says that. The notable part is the implied pushback against the louder current in AI, which treats human review as temporary scaffolding that will soon disappear.

I am not sold on that future. And you should be skeptical too.

Why? Because many of the hardest business decisions are not pattern-matching problems. They involve tradeoffs, policy, timing, reputational risk, and social nuance. A model can summarize the terrain. It still struggles to own the judgment.

Think of it like a sous-chef in a busy kitchen. The prep can be fast, consistent, even impressive. But the head chef still tastes the sauce before it leaves the pass. That final check is where standards live.

Where human-in-the-loop AI makes the most sense

Some tasks are built for this model. Others are not. If the cost of an error is high, human review moves from helpful to non-negotiable.

- Healthcare workflows: AI can summarize notes, flag anomalies, or draft prior authorization language. But diagnosis and treatment decisions need licensed judgment.

- Legal and compliance review: Models can speed up contract analysis and policy checks. They can also miss a clause that blows up later.

- Customer service escalations: AI handles common requests well. Sensitive disputes, billing conflicts, and edge cases still need a person.

- Software development: Code assistants are useful, especially for boilerplate. Security, architecture, and production readiness still need experienced review.

- Financial decisions: Fraud detection and risk scoring benefit from automation, but adverse actions and exception handling need oversight.

One bad call can erase a year of AI efficiency gains.

The weak spot in the autonomy pitch

The autonomy crowd argues that models are improving so quickly that putting a human in the loop will soon look wasteful. There is some truth there. Review can create bottlenecks. It can slow teams down. And in low-risk settings, full automation may be perfectly fine.

But that argument often smuggles in a bad assumption. It assumes accuracy alone is enough. It is not.

Businesses also need traceability, explainability, and recourse. If a customer is denied service, if a patient record is misread, or if a financial recommendation causes harm, people want to know who checked what and when. That paper trail matters. So does the ability to reverse a bad decision fast.

Honestly, this is where a lot of AI product marketing starts to wobble. The demos are smooth. The governance story is thin.

How to evaluate a human-in-the-loop AI system

If you are buying or deploying one, ask harder questions than “How accurate is it?” Accuracy is table stakes.

Questions worth asking

- What decisions can the model make on its own? Spell out the boundary.

- What must a human approve? Do not leave this vague.

- How are edge cases flagged? Confidence scores alone are not enough.

- Can reviewers see the source material? Hidden reasoning chains create blind trust.

- What happens after a correction? The system should learn from mistakes, or at least log them cleanly.

- Is there an audit trail? If not, trouble is coming later.

And here is the practical test. If your best operator cannot explain how a bad output gets caught before it causes damage, the system is not ready.
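One way to make those questions concrete is to write the boundary down as a routing rule. The sketch below is an assumption-heavy illustration, not a recommended policy: the `AUTO_ALLOWED` set, the `MIN_CONFIDENCE` threshold, and the `route` and `AuditLog` names are all made up for this example. It shows three things from the list above: an explicit autonomy boundary, edge-case flags that override a high confidence score, and an audit entry for every routing decision.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Set

# Hypothetical policy: decision types the model may finalize on its own.
AUTO_ALLOWED: Set[str] = {"faq_answer", "order_status"}
MIN_CONFIDENCE = 0.9  # illustrative threshold, not a recommendation


@dataclass
class AuditLog:
    """Append-only record of where each decision was routed and why."""
    entries: List[Dict[str, str]] = field(default_factory=list)

    def record(self, decision_type: str, routed_to: str, reason: str) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "decision_type": decision_type,
            "routed_to": routed_to,
            "reason": reason,
        })


def route(decision_type: str, confidence: float,
          flags: Set[str], log: AuditLog) -> str:
    """Return "auto" or "human", and log the reason either way."""
    if decision_type not in AUTO_ALLOWED:
        routed_to, reason = "human", "decision type requires approval"
    elif flags:
        # Edge-case flags win even when the confidence score is high.
        routed_to, reason = "human", "flagged: " + ", ".join(sorted(flags))
    elif confidence < MIN_CONFIDENCE:
        routed_to, reason = "human", "confidence below threshold"
    else:
        routed_to, reason = "auto", "within policy"
    log.record(decision_type, routed_to, reason)
    return routed_to
```

Note the order of the checks. Confidence comes last on purpose, because a confident model is not the same thing as a safe decision.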

Human-in-the-loop AI is not anti-automation

This point gets lost in the noise. Keeping people involved does not mean clinging to old workflows. It means assigning the right job to the right actor.

Machines are great at speed, recall, classification, and repetitive triage. Humans are better at context, ethics, exception handling, and understanding what is at stake beyond the prompt. That division of labor is not glamorous. It is just sane.

But there is a catch. If the human reviewer becomes a rubber stamp, the whole setup fails. The review layer must have real authority, enough time, and enough visibility into what the model actually did. Otherwise you get the worst of both worlds: fake oversight and real risk.

What businesses should do next

If Murati’s position tells us anything, it is that mature AI adoption will look less like science fiction and more like disciplined operations. That may disappoint people chasing fully autonomous agents. Too bad.

Start here:

- Map high-risk decisions in your workflow.

- Insert human review at those decision points.

- Define escalation paths for ambiguous outputs.

- Track correction rates and failure patterns.

- Reduce oversight only when the evidence supports it.

(And yes, evidence means real production data, not vendor benchmarks.)
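The last two steps can be sketched in a few lines. This is a rough illustration under stated assumptions: the function names are hypothetical, and the `max_rate` and `min_samples` defaults are placeholders you would set from your own production data, not numbers from the article. Each entry in `reviews` is `True` when a human had to correct the model's output.

```python
from typing import Sequence


def correction_rate(reviews: Sequence[bool]) -> float:
    """Share of reviewed outputs that a human had to correct."""
    if not reviews:
        raise ValueError("no reviews recorded yet")
    return sum(reviews) / len(reviews)


def can_reduce_oversight(reviews: Sequence[bool],
                         max_rate: float = 0.02,
                         min_samples: int = 500) -> bool:
    """Loosen review only with enough evidence and a low correction rate.

    Both thresholds are placeholder assumptions for illustration.
    """
    if len(reviews) < min_samples:
        return False  # too little production data to justify a change
    return correction_rate(reviews) <= max_rate
```

The sample-size check is the important design choice. A clean record over ten reviews says almost nothing, so the sketch refuses to relax oversight until there is enough data to judge.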

The part to watch next

The bigger story is not whether AI can imitate reasoning well enough to impress a room. It is whether leaders will keep humans close to the loop when budgets tighten and pressure rises to automate more. That is the real test.

Murati’s stance feels closer to reality than the bolder promises coming out of the agent race. AI will keep getting better. No argument there. But if the next wave of systems is going to touch hiring, health, finance, and public services, human-in-the-loop AI may end up being less of a temporary safety rail and more of a permanent design rule. The companies that accept that early will probably make fewer stupid mistakes. Who wants to be the team that learns this after the damage is done?