Perplexity Incognito Mode Lawsuit Puts Trust on Trial

Your privacy tools should not feel like roulette. The Perplexity incognito mode lawsuit alleges that the company’s private browsing claims look sturdy on the surface yet leak data in practice, and that strikes at user trust when AI assistants sit between you and the web. The complaint argues Perplexity recorded queries, logged IP addresses, and kept chat histories even when the interface promised discretion. That matters because you feed sensitive prompts to these systems, and you expect them to treat those inputs like a sealed envelope. This case arrives as regulators sharpen their pencils on AI privacy, and it raises a blunt question: do AI helpers honor the same privacy norms as browsers, or are they banking on your ignorance?

What Stands Out Now

- Suit claims Perplexity tracked IPs and retained chats despite incognito branding.

- Alleged mismatch between marketing promises and back-end logging practices.

- Possible violations of state privacy statutes and consumer protection laws.

- Evidence phase could surface how AI assistants actually store prompts.

- Outcome may set a playbook for AI privacy disclosures.

Why the Perplexity incognito mode lawsuit matters now

Look, privacy lapses in a search interface are not abstract: they can expose job hunts, health questions, and legal research. Plaintiffs say Perplexity’s incognito label invited sensitive queries while its servers quietly kept identifiers. That is like a broken traffic light at an intersection: it signals safety while cars keep coming. State consumer protection laws often turn on whether a design is deceptive, so the court will likely compare the wording of Perplexity’s interface and privacy policy against what its logs actually show.

Incognito is a promise, not a vibe: if data persists, the brand is misleading the very users it courts.

What evidence could tilt the case

The discovery process could reveal server-side telemetry, retention periods, and any sharing with third parties. Did engineers flag the gap between marketing and logging? Were users given clear opt-outs? And will the company argue that anonymized storage counts as privacy even when IP addresses appear in the logs? The answers will shape both damages and public perception.

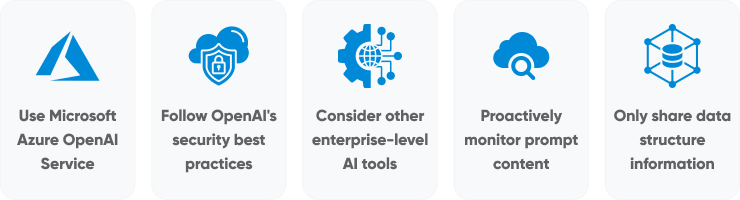

How users can respond to the Perplexity incognito mode lawsuit claims

If you still need AI lookups, reduce the blast radius. Use a VPN to mask your IP address. Clear local cookies. Prefer tools that offer transparent privacy dashboards. Treat any incognito badge like a calorie label: useful, but verify the serving size. Ask yourself: does the provider publish retention windows and deletion hooks you can trigger?

- Check privacy policies for data retention timelines and audit logs.

- Pick services with client-side processing when possible.

- Use burner accounts for sensitive research.

- File data deletion requests; track the response time.
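
For the last item, a small local log is enough to track response times. The sketch below is one way to do it in Python. The `DeletionRequest` class, its field names, and the 45-day default window are illustrative assumptions, not anything taken from the complaint or from a provider's API, so set `deadline_days` to whatever response window actually applies to you.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class DeletionRequest:
    """One data deletion request you sent to a provider (hypothetical record)."""
    provider: str
    sent_on: date
    answered_on: Optional[date] = None
    deadline_days: int = 45  # assumption: placeholder window, adjust as needed

    def due_on(self) -> date:
        # Date by which you expect a response.
        return self.sent_on + timedelta(days=self.deadline_days)

    def days_waiting(self, today: date) -> int:
        # Days from sending until the answer, or until today if unanswered.
        end = self.answered_on or today
        return (end - self.sent_on).days

    def is_overdue(self, today: date) -> bool:
        # Overdue means still unanswered after the expected response date.
        return self.answered_on is None and today > self.due_on()


if __name__ == "__main__":
    request = DeletionRequest(provider="ExampleAI", sent_on=date(2025, 1, 10))
    today = date(2025, 3, 1)
    print(request.provider, "due on", request.due_on())
    print("days waiting:", request.days_waiting(today))
    print("overdue:", request.is_overdue(today))
```

Keeping the dates yourself means you have a record to point to if a provider is slow or silent, whatever its dashboard says.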

Regulatory ripple effects

Regulators already scrutinize dark patterns in consent flows. A ruling against Perplexity could push agencies to define AI-specific privacy disclosures, much like nutrition facts reset food labels decades ago. Expect rivals to tout shorter retention and clearer toggles as a competitive edge.

Honestly, the real test is whether AI firms can sustain growth while acting like stewards of user intent. Can they?

Where the Perplexity incognito mode lawsuit leaves the AI field

AI vendors love to talk about safety, yet privacy is the foundation that keeps users engaged. If this suit proves logging under an incognito label, the industry will need to rebuild trust with plain-language disclosures, independent audits, and hard caps on retention. Think of it like renovating an old house: you reinforce the wiring before adding new rooms.

The closing thought: expect privacy claims to face courtroom scrutiny, so build your stack and your habits as if every query might be read aloud in front of a judge.