How AI Software Development Automation Is Rewriting the Dev Playbook

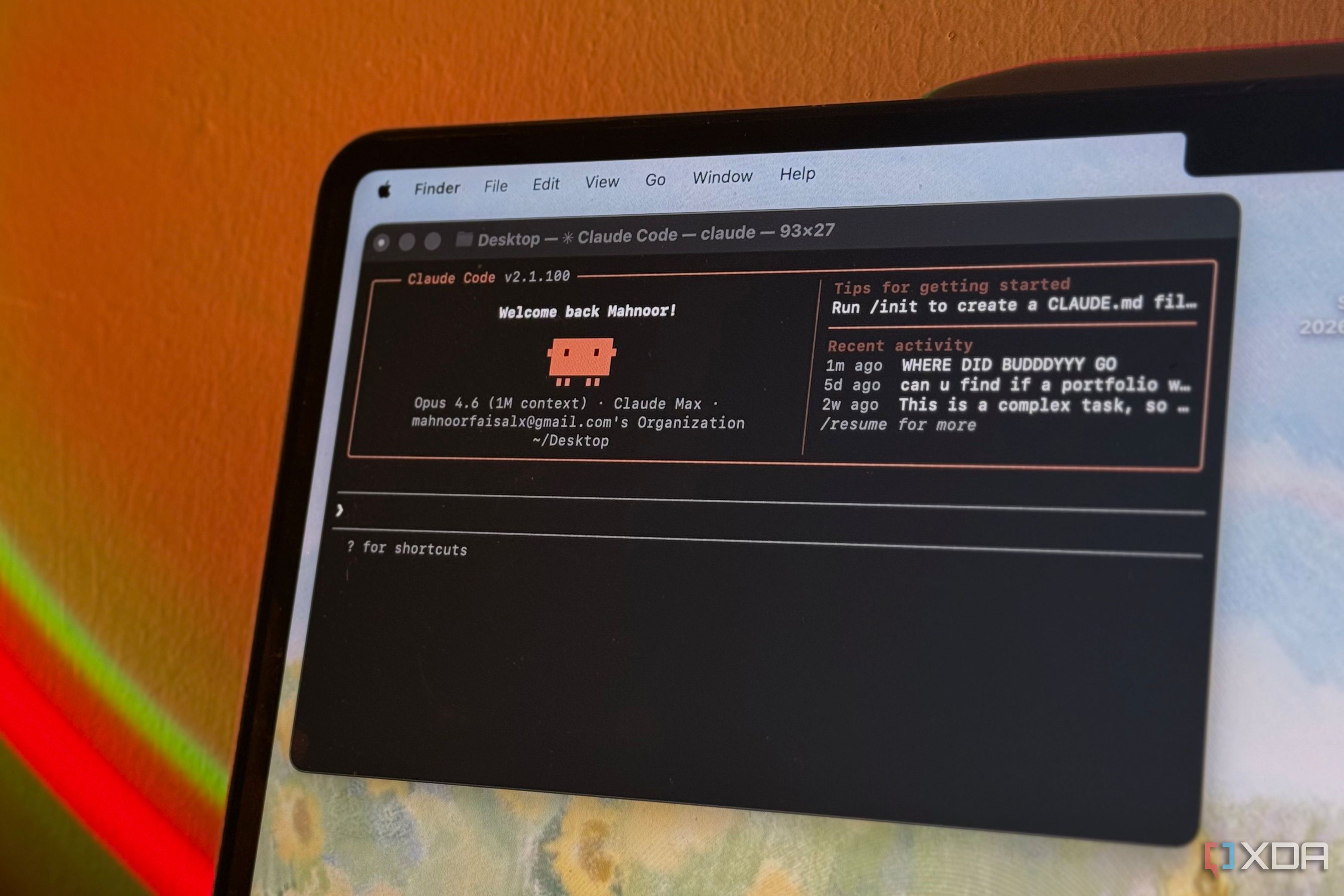

Startups are racing to ship features with fewer engineers and tighter budgets. AI software development automation now promises to triage tickets, write pull requests, and even debug its own output. That matters because talent is scarce and product cycles keep shrinking. Tools like OpenClaw claim they can automate entire developer workflows, from specs to code reviews, without waiting on a human-run sprint. The upside looks seismic. The risks, though, are real: security gaps, brittle code, and eroded team trust. You want speed, but you do not want to wake up to a production fire sparked by a bot.

So how do you push automation without handing over the keys? Let’s break it down.

Quick hits

- OpenClaw shows how small teams can ship with AI-driven coding pipelines.

- Strong guardrails and audit trails are non-negotiable before you let bots commit.

- Security scanning must sit inside the AI loop, not after it.

- Engineers still set architecture and own rollback plans.

Why AI software development automation matters now

Hiring has slowed, yet expectations for constant releases stay high. Automation tools are filling the gap by handling repetitive coding and testing work. Think of it like an assembly line in a kitchen: the prep cook slices, the line cook plates, but the head chef still tastes every dish. That last taste is your code review and rollout plan.

Speed without oversight is just gambling with uptime.

Do you trust a bot to review your pull requests? That question sits at the center of every CTO debate. I have watched teams ship faster when they keep humans in the loop for architecture and incident response, while letting AI grind through tests and documentation.

How AI software development automation is changing team roles

Engineers shift from writing boilerplate to designing systems and policies. Product managers move earlier into the process because AI tools need clearer specs to produce usable output. Security teams now work alongside automation platforms, supplying policies and blocking unsafe calls. One-sentence truth: shipping code now feels like running a factory line.

- Define the coding rails. Style guides, lint rules, and API contracts become the playbook the AI must follow.

- Embed security checks. Run static application security testing (SAST) and dependency scans inside the AI-driven pipeline, not as a late gate.

- Keep humans on critical paths. Approvals for migrations, data access, and customer-facing flows stay manual.

- Track provenance. Every AI-generated change needs traceable logs for audits and incident reviews.
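The "keep humans on critical paths" rule above can be sketched as a small routing check. This is a minimal illustration, not OpenClaw's actual mechanism: the path patterns and the function name `requires_human_approval` are hypothetical, and a real setup would more likely lean on your code host's own review rules.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns marking a change as "critical path".
# Adjust these to match your own repository layout.
MANUAL_APPROVAL_PATTERNS = [
    "migrations/*",      # top-level schema migrations
    "*/migrations/*",    # per-app schema migrations
    "billing/*",         # customer-facing payment flows
    "*.sql",             # any raw SQL file
]


def requires_human_approval(changed_files, patterns=MANUAL_APPROVAL_PATTERNS):
    """Return True if any changed file matches a critical-path pattern."""
    return any(
        fnmatchcase(path, pattern)
        for path in changed_files
        for pattern in patterns
    )
```

A bot-authored pull request that only touches `docs/` would pass straight to automated checks, while one that touches `db/schema.sql` would be held for a human reviewer.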

I tested setups where bots opened pull requests with unit tests attached, yet the team still owned merge decisions. That blend keeps velocity high without surrendering accountability.

Practical safeguards before you roll out OpenClaw or similar tools

Start with a sandbox repo and let the AI handle low-risk chores. Watch for brittle tests or repeated logic. Set rate limits and API scopes so the tool cannot blast production systems. Use feature flags to turn off automated changes fast. And if the AI proposes database schema edits, demand manual sign-off every time.
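Two of those safeguards, the kill switch and the rate limit, fit in a few lines. The sketch below assumes a hypothetical `AutomationGate` class that your pipeline would consult before accepting a bot commit; the default cap of 20 is an arbitrary placeholder, not a recommendation.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class AutomationGate:
    """Hypothetical gate checked before each bot commit is accepted."""

    enabled: bool = True            # feature flag: set to False to stop all bot commits
    max_commits_per_day: int = 20   # placeholder daily cap
    _counts: dict = field(default_factory=dict)  # commits accepted per calendar day

    def allow_commit(self, today: date) -> bool:
        """Return True and count the commit if it is within today's budget."""
        if not self.enabled:
            return False
        used = self._counts.get(today, 0)
        if used >= self.max_commits_per_day:
            return False
        self._counts[today] = used + 1
        return True
```

Keeping the flag and the counter in one place means a single switch stops automated changes, which is the point of a fast off-ramp.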

Think of monitoring like a goalie in soccer: you only notice it when a shot is on target, which is exactly when it has to work. Metrics for code coverage, mean time to rollback, and escaped defects will tell you whether automation helps or hurts.
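Two of those metrics are simple to compute once you log the right timestamps. The following is a rough sketch under assumed definitions: mean time to rollback is measured from detection to completed rollback, and the escaped defect rate is the share of all known defects that were first found in production. Both function names are illustrative.

```python
from datetime import datetime
from statistics import mean


def mean_time_to_rollback_minutes(incidents):
    """Average minutes between detection and rollback.

    incidents: list of (detected_at, rolled_back_at) datetime pairs.
    Returns 0.0 when there are no incidents.
    """
    durations = [
        (rolled_back - detected).total_seconds() / 60
        for detected, rolled_back in incidents
    ]
    return mean(durations) if durations else 0.0


def escaped_defect_rate(found_in_production, total_found):
    """Share of known defects that reached production (0.0 to 1.0)."""
    if total_found == 0:
        return 0.0
    return found_in_production / total_found
```

Comparing these numbers before and after you enable automation gives a fairer read than either figure alone.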

Cost and ROI: when the math works

AI credits usually cost less than engineering salaries, but hidden costs creep in. Extra observability, policy engines, and security reviews add overhead. Track saved engineer hours against downtime risk. If your AI creates three fixes and one outage, the ledger is probably not in your favor.
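That ledger can be written down as back-of-the-envelope arithmetic. The function below is a simplified sketch: every input is a figure you would have to estimate yourself, and the numbers in the example are invented purely to show the calculation.

```python
def automation_net_value(
    hours_saved,
    hourly_engineer_cost,
    ai_spend,
    overhead_cost,
    outages,
    cost_per_outage,
):
    """Estimated savings minus estimated costs for one period.

    A positive result suggests automation paid for itself;
    a negative one suggests it did not.
    """
    savings = hours_saved * hourly_engineer_cost
    costs = ai_spend + overhead_cost + outages * cost_per_outage
    return savings - costs


# Illustrative numbers only: 40 engineer hours saved at 100 per hour,
# against 500 in AI credits, 1000 in overhead, and one outage costing 5000.
net = automation_net_value(40, 100, 500, 1000, 1, 5000)
```

With those invented inputs the result is negative (4000 in savings against 6500 in costs), which is the "three fixes and one outage" situation in numbers.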

One team I spoke with capped automated commits per day and tracked customer ticket volume. That volume dropped once the AI was tuned to respect coding standards. That is the signal you want.

Where this goes next

Expect vendors to bundle AI agents with compliance dashboards and SOC 2 templates. Open-source options like OpenClaw will evolve with community plugins for language support and testing harnesses. The real battle will be trust: engineers need transparency into prompts, model versions, and decision logs.
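What that transparency might look like in practice: a small provenance record attached to each AI-generated change. This is a sketch, not a format any vendor is known to use. The field names and the `ChangeProvenance` class are hypothetical, and the prompt is stored as a SHA-256 hash on the assumption that you may not want raw prompts sitting in logs.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ChangeProvenance:
    """Hypothetical audit record for one AI-generated change."""

    commit_sha: str
    model_version: str
    prompt_sha256: str
    approved_by: str


def record_provenance(commit_sha, model_version, prompt, approved_by):
    """Build a provenance record, hashing the prompt instead of storing it."""
    prompt_hash = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    return ChangeProvenance(commit_sha, model_version, prompt_hash, approved_by)


def to_log_line(record):
    """Serialize the record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Writing one such line per merged change gives incident reviewers a way to ask which model version and which prompt produced a given commit, and who approved it.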

Honestly, I see automation reshaping junior roles first, while senior engineers become quality governors. The game will be won by teams that treat AI as an accelerator, not an autopilot. Ready to let a bot file your next bug fix, or do you still want your own hands on the keyboard?