CAISI Frontier AI Testing Deals Signal a Harder Line on National Security

If you follow AI policy, you have probably seen a familiar problem. Frontier models keep getting more capable, while public oversight struggles to keep pace. That gap matters more now because national security risks are no longer abstract. They touch cyber operations, critical infrastructure, biosecurity, and the misuse of advanced models by hostile actors. The new CAISI frontier AI testing agreements, announced through NIST, are a direct response to that pressure. They suggest the U.S. government wants sharper access to model evaluation before risk turns into damage. And that is the real story here. This is not about flashy demos or vague safety promises. It is about building a system where high-end AI models face structured scrutiny tied to security, not just product launch timelines.

What stands out

- CAISI frontier AI testing is moving from broad safety talk to formal agreements tied to national security concerns.

- The NIST announcement points to a more organized testing pipeline for advanced AI systems.

- This raises the bar for frontier model developers that want credibility with policymakers.

- It also hints at a future where pre-deployment evaluations become non-negotiable for the most powerful systems.

What CAISI frontier AI testing actually means

CAISI, the Center for AI Standards and Innovation housed at NIST, focuses on evaluating advanced AI systems for risks that matter at a national scale. Based on the NIST announcement, these agreements are meant to support testing of frontier AI models in areas tied to national security. That wording matters. It is narrower and more serious than generic AI safety language.

Look, governments do not sign these kinds of agreements because they are curious. They do it because they think model capability is racing ahead of existing controls. If an AI system can materially help with cyber offense, large-scale deception, or sensitive technical problem-solving, testing cannot stay optional or informal.

What NIST is signaling is simple: frontier AI oversight is shifting closer to the logic used in other high-risk domains, where evaluation comes before broad trust.

That shift was probably inevitable.

Why NIST and CAISI are stepping in now

NIST has spent years building a reputation around technical standards, measurement, and risk frameworks. So it makes sense that CAISI frontier AI testing would sit there rather than in a purely political office. NIST can give this work a more technical spine, even when the politics around AI stay noisy.

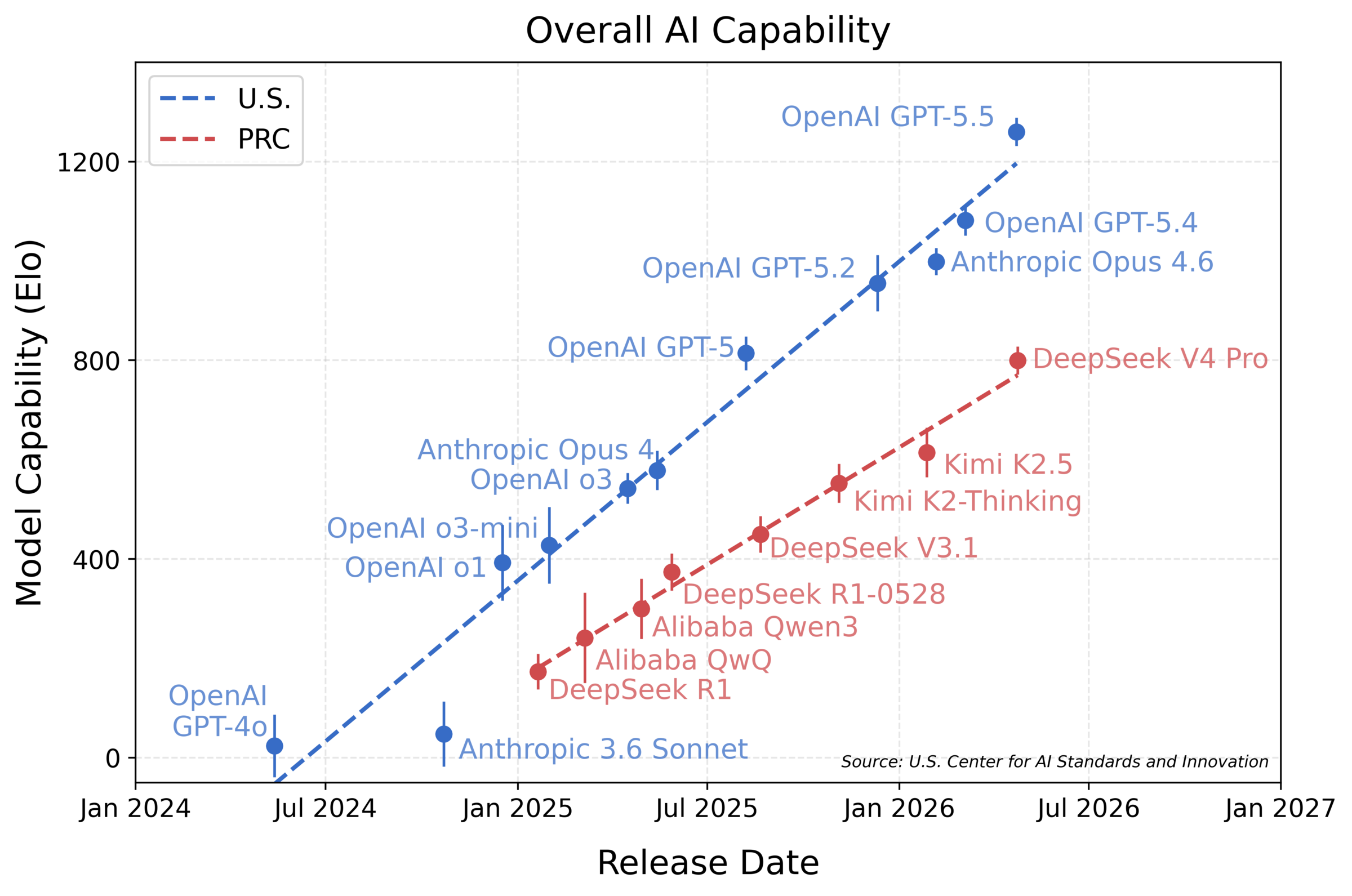

And timing matters. Frontier models are improving fast enough that old distinctions, such as consumer tool versus national security issue, no longer hold up well. A model built for general use can still be repurposed. That is the awkward truth policymakers are finally treating as real.

Think of it like airport security in the early years after a major threat shift. The old process was built for a smaller problem set. Then the risk changed, and screening had to become more systematic. AI is hitting a similar point.

What these agreements may change for AI companies

The immediate effect is reputational and procedural. Companies that sign on to serious testing frameworks can show they are willing to subject their systems to outside scrutiny. That will matter in Washington, and it may matter with enterprise buyers too.

But there is a harder edge here. Once these agreements exist, they create a baseline expectation. Firms working on top-tier models may face pressure to answer practical questions before release.

- What exact capabilities are being tested?

- How is misuse risk measured?

- Who gets access to findings?

- What mitigations are required if a model crosses a threshold?

Those are not academic questions. They shape product timelines, deployment choices, and even model architecture decisions. Honestly, this is where AI policy gets real.
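To make the threshold question concrete, here is a minimal Python sketch of how a pre-release gate could work in principle. Everything in it is hypothetical: the domain names, the scores, the thresholds, and the `release_decision` helper are illustrative assumptions, not anything described in the NIST announcement or used by CAISI.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    """One hypothetical evaluation outcome for a single risk domain."""
    domain: str       # illustrative label, e.g. "cyber"
    score: float      # assumed risk score in [0, 1]; higher means riskier
    threshold: float  # assumed limit at or above which mitigation is needed


def release_decision(results):
    """Return ("release", []) if no domain reaches its threshold,
    otherwise ("mitigate", [flagged domains in alphabetical order])."""
    flagged = sorted(r.domain for r in results if r.score >= r.threshold)
    return ("mitigate" if flagged else "release", flagged)


# Made-up numbers, purely for illustration.
results = [
    EvalResult("cyber", 0.72, 0.60),
    EvalResult("bio", 0.10, 0.30),
    EvalResult("persuasion", 0.41, 0.50),
]

decision, flagged = release_decision(results)
print(decision, flagged)
```

Even a toy gate like this forces the hard choices into the open: someone has to decide what gets scored, where each threshold sits, and who sees the flagged list.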

Where CAISI frontier AI testing could run into trouble

Formal testing sounds clean on paper. In practice, it gets messy fast. The first problem is scope. Frontier models are general-purpose systems, which means they can create risk across many domains at once. Cybersecurity, chemical knowledge, persuasion, software exploitation, and operational planning do not sit in neat boxes.

The second problem is speed. A testing regime that takes too long will lag behind model releases. One that moves too fast may miss edge-case risks. That balance is brutal.

Then there is the issue of incentives. Companies want access to government legitimacy, but they also want to protect model details, training methods, and competitive secrets. So how much access will evaluators really get?

Here is the thing. If testing turns into a check-the-box ritual, it will not earn trust. If it becomes technically demanding and independent enough to irritate model developers a little, it may actually matter.

What readers should watch next on CAISI frontier AI testing

The NIST announcement is important, but the follow-through will tell the real story. Watch for concrete signs that this effort is more than a press release.

- Public detail on evaluation methods or testing domains

- Clarity on which organizations signed agreements

- Evidence that results inform deployment or mitigation decisions

- Links between CAISI work and broader U.S. AI governance efforts

- Any sign that testing standards begin to influence allies or multilateral forums

If those pieces appear, CAISI frontier AI testing could become one of the few policy efforts with actual operational bite. If they do not, skepticism will be fair.

Why this matters beyond Washington

Even if you do not work in government, this development matters because national security testing often shapes the broader market. Standards built for the highest-risk systems tend to trickle outward. Large vendors adopt them first. Buyers start asking for them next. Then regulators circle around after the pattern is already set.

That pattern has shown up in cybersecurity, cloud compliance, and privacy controls. AI will likely follow the same path, though with more conflict and more lobbying. Why would frontier models be any different?

And there is a deeper issue here (one many companies still dodge). The firms building the strongest systems want to move fast, but they also want the public to trust that they can self-police. These new agreements suggest federal officials are less willing to take that claim at face value.

The next test is whether this becomes muscle, not theater

NIST’s move with CAISI looks like a sign of policy maturity, not panic. That is good. The U.S. needs institutions that can evaluate advanced AI systems with technical depth and a clear view of national security consequences.

But the hard part starts now. Real trust will depend on whether CAISI frontier AI testing leads to repeatable methods, credible outside assessment, and decisions that affect how powerful models are deployed. If that happens, this could mark the start of a stricter AI governance era. If not, it will be another headline swallowed by the next model launch.

My bet? The pressure will only build from here, and the companies still treating security testing like a public relations exercise are going to have a rough few years.