Meta Age Verification AI Raises New Privacy Questions

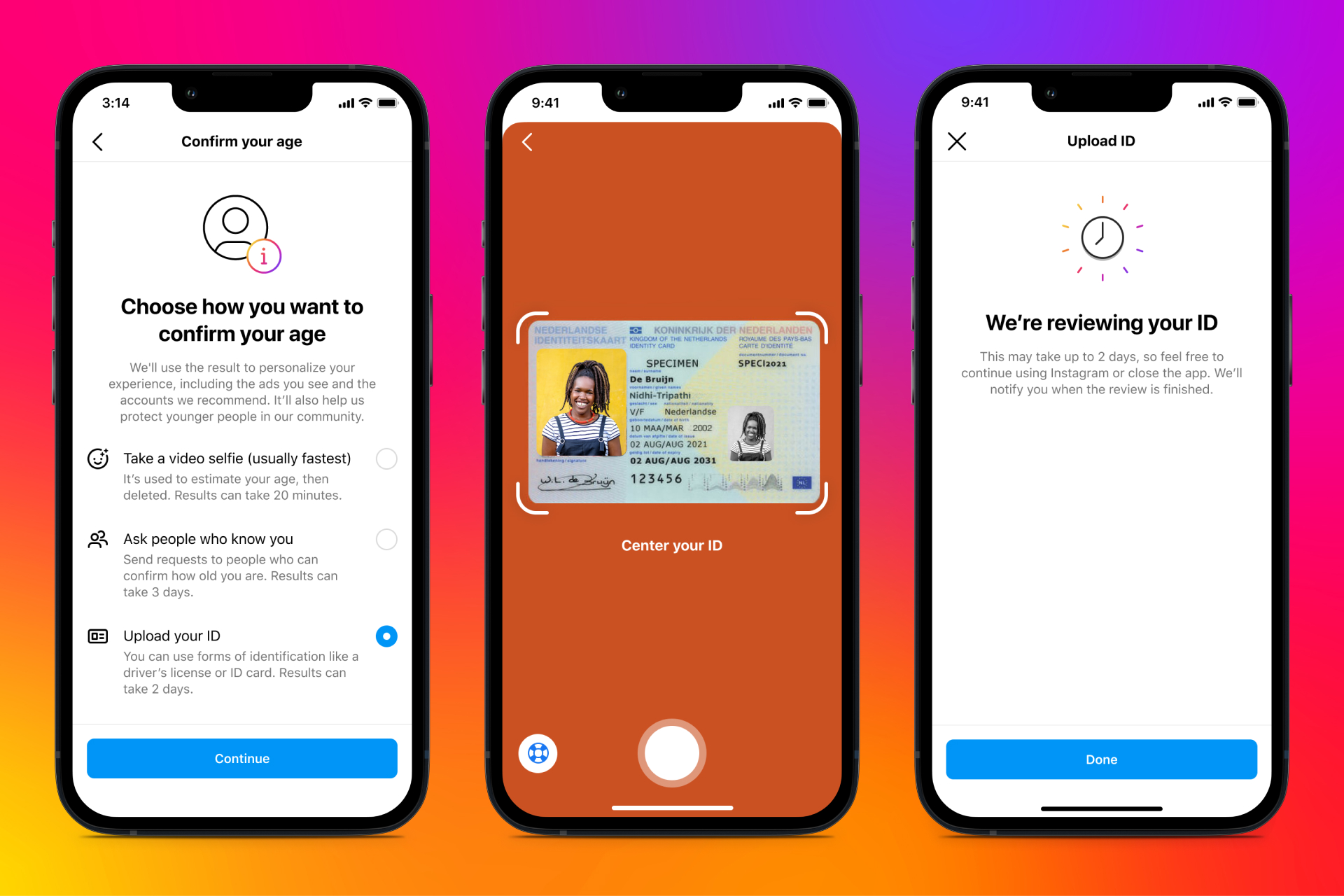

If you use Instagram or Facebook, age checks are no longer a side issue. They are becoming part of the product itself. Meta's age verification AI is the latest example, with the company moving toward systems that can analyze signals like height and bone structure to judge whether a user may be underage. That matters now because platforms face mounting pressure from lawmakers, parents, and child safety groups to keep teens out of adult spaces and to enforce age-based rules with more than a checkbox.

But this shift opens a harder question. How much biometric-style analysis should a social platform be allowed to use just to estimate your age? Meta says the goal is safety. Critics will ask whether the trade-off is too steep, especially when the technology can make mistakes and the rules around face analysis are still unsettled.

What stands out here

- Meta's age verification AI appears to rely on physical signals such as height and bone structure, not just self-reported birthdates.

- The move reflects a broader platform trend toward automated age estimation for teen safety compliance.

- Biometric inference brings fresh privacy and accuracy concerns, especially for users near the age threshold.

- Regulators will likely focus on consent, data retention, and how these systems handle false positives.

How Meta's age verification AI appears to work

According to TechCrunch, Meta plans to use AI to analyze physical traits including height and bone structure to identify whether users are underage. That suggests an age estimation system based on images or video, likely paired with existing account signals such as friend graph data, behavior patterns, account history, and declared age.

Look, this is not a small product tweak. It is a shift from asking users how old they are to having software make an informed guess. Think of it like airport security adding another scanner. The promise is tighter screening. The cost is more scrutiny for everyone passing through.

That changes the stakes.

Age estimation models are already used across parts of the tech industry. Some vendors analyze facial geometry, skin texture, and other visual markers to estimate an age range. Meta’s reported interest in bone structure pushes the system into more sensitive territory, because it edges closer to biometric profiling even if the company does not label it that way.

Why Meta is doing this now

Meta is under pressure from several directions at once. Lawmakers want stronger child safety controls. Regulators in the US and Europe have pushed platforms on age-appropriate design. And parents want fewer gaps that let younger kids enter spaces built for older teens or adults.

Checkbox age gates clearly do not work well. Anyone who has used the internet knows that. So platforms are trying to build systems that infer age instead of trusting what users type into a form.

Meta is chasing a simple outcome with a messy tool: fewer underage users slipping through, and fewer accusations that the company is asleep at the wheel.

There is also a legal motive. If a platform can show it used automated tools to detect likely underage accounts, it gains a stronger defense when critics ask what it did to enforce its own rules. Whether that defense holds up will depend on the details.

The privacy problem with Meta's age verification AI

The sharpest issue is data sensitivity. If Meta's age verification AI analyzes body features from images, users will want to know what is processed, how long it is stored, and whether the analysis is used only for age checks or for other internal systems.

That last point matters a lot. A model built for one purpose has a way of drifting into others over time: ad targeting, trust and safety, account recovery, identity verification. Once the pipeline exists, who really believes it stays in a neat box forever?

And there is a fairness issue. Physical development varies widely across teenagers and young adults. Some minors look older. Some adults look younger. Any system that draws a line from body structure to legal or policy status will produce errors. For users around 16 to 21, those errors may be frequent enough to become a real product problem.

Questions Meta will need to answer

- What data does the model analyze: still images, video, or both?

- Is the analysis done on-device, in the cloud, or through a third-party vendor?

- How long are source images and age estimates retained?

- Can users appeal if the system flags them as underage?

- What accuracy rates has Meta seen across age bands, genders, and ethnic groups?
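The last question is the kind of thing an outside auditor could check with a simple per-group breakdown. The sketch below is a hypothetical illustration only: the `EvalRecord` format, the field names, the group labels, and the tiny synthetic sample are all invented for the example, and nothing here reflects Meta's actual data or tooling. It computes, for each group, how often adults were wrongly flagged as minors and how often minors were missed.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalRecord:
    group: str           # hypothetical label, e.g. an age band or demographic group
    is_minor: bool       # ground truth from a labeled evaluation set
    flagged_minor: bool  # what the age-estimation system decided


def error_rates_by_group(records):
    """Return per-group false-flag rate (adults flagged) and miss rate (minors not flagged)."""
    counts = defaultdict(
        lambda: {"adults": 0, "adults_flagged": 0, "minors": 0, "minors_missed": 0}
    )
    for r in records:
        c = counts[r.group]
        if r.is_minor:
            c["minors"] += 1
            c["minors_missed"] += int(not r.flagged_minor)
        else:
            c["adults"] += 1
            c["adults_flagged"] += int(r.flagged_minor)

    rates = {}
    for group, c in counts.items():
        rates[group] = {
            # None means the group had no examples of that kind to measure.
            "false_flag_rate": c["adults_flagged"] / c["adults"] if c["adults"] else None,
            "miss_rate": c["minors_missed"] / c["minors"] if c["minors"] else None,
        }
    return rates


# Synthetic records, made up purely to exercise the function.
SYNTHETIC = [
    EvalRecord("A", False, False), EvalRecord("A", False, True),
    EvalRecord("A", True, True), EvalRecord("A", True, True),
    EvalRecord("B", False, False), EvalRecord("B", False, False),
    EvalRecord("B", True, False), EvalRecord("B", True, True),
]

for group, group_rates in sorted(error_rates_by_group(SYNTHETIC).items()):
    print(group, group_rates)
```

A real audit would need far larger samples and carefully verified ground-truth ages. The point is only that the two error types should be reported separately and per group, since a single overall accuracy figure can hide large gaps between groups.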

How accurate can age estimation really be?

Honestly, this is where hype usually outruns reality. Computer vision can estimate age ranges, but exact age judgments are much harder. A system may be decent at telling a child from a middle-aged adult. Distinguishing a 15-year-old from an 18-year-old is another story.

That gap matters because platform policies often hinge on narrow thresholds. The difference between 13, 16, and 18 can determine account access, messaging settings, ad restrictions, and content exposure. Using a fuzzy model for hard cutoffs is a recipe for friction.

And friction cuts both ways. If the system misses underage users, Meta still gets blamed. If it wrongly limits legitimate users, the company faces complaints about unfair treatment and opaque moderation. It is a no-win setup unless Meta offers clear review paths and publishes meaningful performance data.
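The threshold problem can be made concrete with a back-of-the-envelope sketch. It assumes, purely for illustration, that a model's age estimate equals the true age plus normally distributed error with a standard deviation of 2 years. That figure is a placeholder, not a measured number for Meta's or any vendor's system, and the function name `prob_estimated_at_or_above` is likewise hypothetical.

```python
import math


def prob_estimated_at_or_above(true_age: float, threshold: float, error_sd: float) -> float:
    """P(estimate >= threshold) when estimate ~ Normal(true_age, error_sd)."""
    z = (threshold - true_age) / error_sd
    # Upper-tail probability of the standard normal, via the complementary error function.
    return 0.5 * math.erfc(z / math.sqrt(2))


ASSUMED_ERROR_SD = 2.0  # hypothetical value chosen for illustration only

for true_age in (10, 15, 17):
    p = prob_estimated_at_or_above(true_age, threshold=18, error_sd=ASSUMED_ERROR_SD)
    print(f"true age {true_age}: estimated as 18+ with probability {p:.3f}")
```

Under that assumed error, a 10-year-old is almost never estimated as 18 or older, a 15-year-old is about 7 percent of the time, and a 17-year-old is about 31 percent of the time. The exact numbers depend entirely on the assumed error, but the pattern does not: mistakes concentrate among users close to the cutoff.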

What this means for parents, teens, and adult users

Parents may welcome tougher checks if they reduce fake adult accounts created by younger users. That is the upside. But parents should also ask what kind of image analysis their children are being subjected to and whether they can opt out.

Teens face the highest impact. If Meta classifies them as younger than they are, they may get pushed into more restrictive settings. If it classifies them as older, the safety goal breaks down. Either way, the system becomes a gatekeeper.

Adult users are not outside this picture either. Any broad deployment of Meta's age verification AI could sweep in adults whose photos trigger age uncertainty. Then what happens? More ID checks, more account friction, and more pressure to hand over sensitive data.

What regulators and watchdogs should watch next

The immediate issue is not whether child safety matters. It does. The real issue is whether Meta can prove that this method is proportionate, limited, and accountable.

Regulators should focus on a few non-negotiable points:

- Purpose limits. Age estimation data should be used only for age-related safety controls.

- Retention limits. Source imagery and derived signals should not sit around longer than needed.

- Independent testing. Accuracy and bias claims should face outside review.

- User appeal rights. People need a workable path to challenge a wrong age estimate.

- Clear disclosure. Users should know when visual analysis is being used and why.

There is a broader policy fight here too. Governments want platforms to do more age enforcement, yet stronger enforcement often pushes companies toward more invasive tools. That tension is not going away. It will get sharper.

Where this heads next

Meta's age verification AI may end up being a preview of how social platforms handle identity signals over the next few years. Less trust in self-reported data. More machine judgment. More pressure to prove who you are, or at least how old you look.

That may satisfy some safety demands, but it also moves the internet one step closer to a world where platforms constantly inspect the human body for clues. For a company with Meta’s record, that should give people pause.

The next step is simple. Watch whether Meta publishes real details on accuracy, retention, and appeals, because if it does not, this will look less like child safety policy and more like another expansion of platform surveillance.