Google and Intel Double Down on AI Infrastructure for Real Enterprise Gains

Enterprises are scrambling for dependable compute, and the Google Intel AI infrastructure partnership lands right in the middle of that scramble. The two companies are tightening co-design work across accelerators, networking, and tooling so customers can train and serve models without choking on cost or latency. If you are weighing cloud commitments this year, you need to know what this pairing changes, what stays the same, and how to protect your budget. The news matters because Intel needs a comeback narrative while Google Cloud wants to differentiate itself from Nvidia-heavy rivals. That tension could translate into sharper pricing and new optimization paths for you.

What Stands Out Now

- Co-designed hardware and software aim at better price-performance for training and inference.

- Google Cloud customers may see faster on-ramps for x86-optimized AI workloads.

- Intel gets a marquee reference to counter the GPU-first story dominating the market.

- Early adopters should push for transparent benchmarking before committing spend.

This is where the rubber meets the road.

Why the Google Intel AI infrastructure partnership matters

Most enterprises are caught between GPU scarcity and runaway cloud bills. Google's closer ties to Intel signal an attempt to broaden the menu beyond H100 queues. It is also a hedge: if Google can make x86 and emerging Intel accelerators deliver competitive inference, it lowers its dependence on a single supplier. Think of it like a balanced sports roster: you want more than one star player so the season is not lost to a single injury.

Intel brings deep compiler and networking work that could cut overhead on large model serving. Google contributes its global backbone and steady progress on AI-optimized data centers. Together they promise shorter provisioning times and saner capacity planning. The question is whether performance per dollar will beat the status quo.

Look, promises of “better TCO” land every quarter. The only proof that matters is your own benchmark on your own workload.

How to evaluate the Google Intel AI infrastructure partnership

Start with clear success metrics. Are you chasing latency, throughput, or cost per token? Anchor tests on those targets, not vendor-provided averages. Use mixed workloads that mirror peak and steady states so you do not get surprised later. And ask for the roadmap: will future Intel accelerators show up on Google Cloud with sustained supply, or only in limited regions?

- Run a bake-off: Compare a representative transformer model on current Intel instances versus GPU options.

- Inspect the software stack: Check kernel versions, oneAPI maturity, and whether key libraries are tuned for your frameworks.

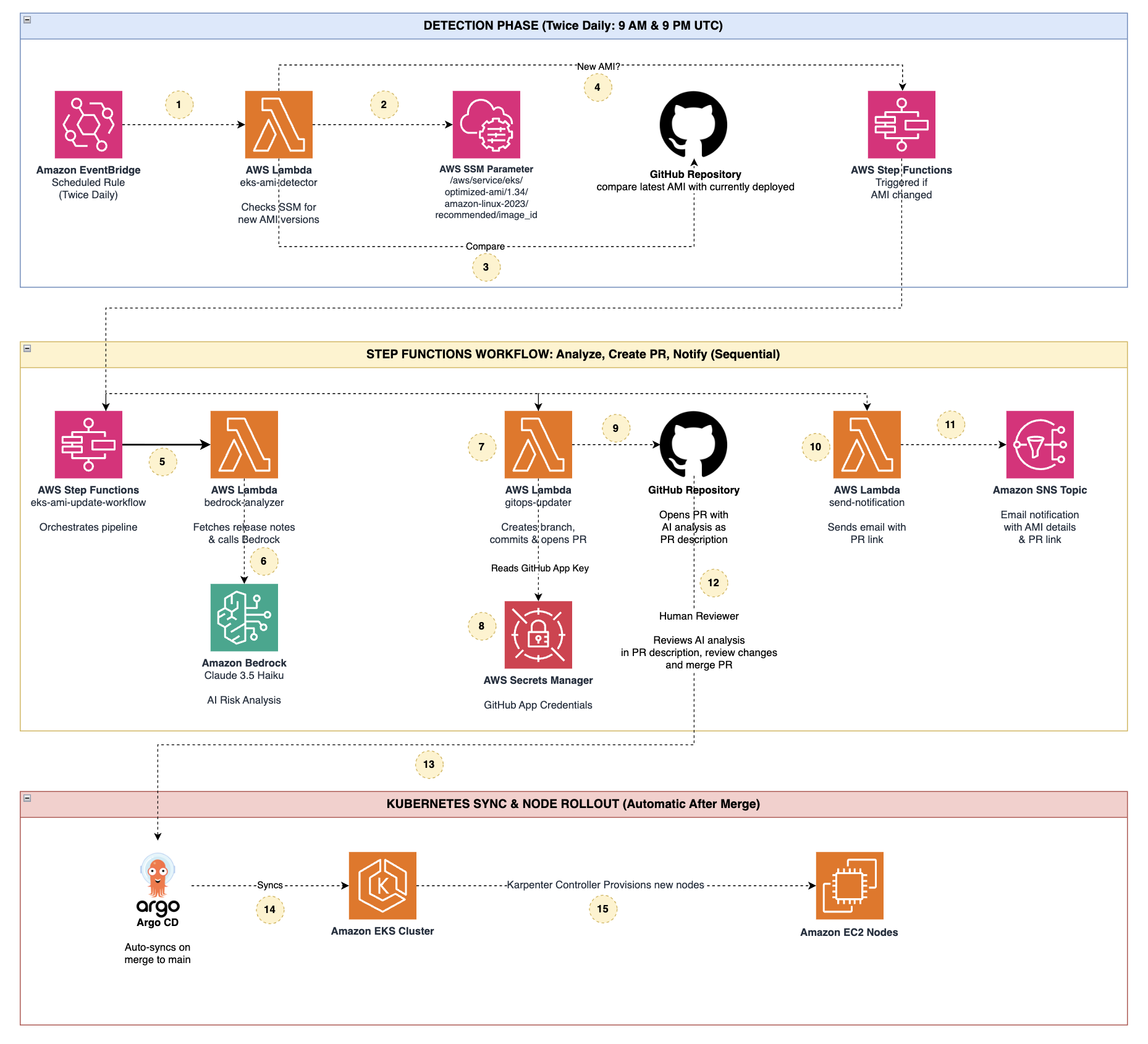

- Model lifecycle: Ensure deployment, monitoring, and rollback flows integrate with your existing CI/CD.

- Pricing clarity: Push for committed use discounts and visibility into egress costs before scaling.

One practical tip: reuse your existing observability pipeline so you can compare apples to apples across clouds.
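To make the bake-off comparable across instance types, it helps to reduce every run to the same handful of numbers: latency percentiles, throughput, and cost per million tokens. The sketch below is one way to do that in Python, assuming you have already collected per-request latencies and token counts from your own load test. The `RunResult` fields and the `summarize` helper are hypothetical names for illustration, and any hourly price you feed in should come from your actual quote, not from this example.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class RunResult:
    """One benchmark run on one instance type (all fields are illustrative)."""
    name: str                  # your own label for the instance type under test
    latencies_ms: list         # per-request latencies in milliseconds
    tokens_generated: int      # total output tokens produced during the run
    wall_seconds: float        # wall-clock duration of the run
    hourly_price_usd: float    # price you were actually quoted, per hour


def summarize(run: RunResult) -> dict:
    """Reduce a run to latency percentiles, throughput, and cost per 1M tokens."""
    # quantiles(..., n=100) returns 99 cut points; index 49 is p50, index 94 is p95.
    cuts = quantiles(run.latencies_ms, n=100, method="inclusive")
    run_cost_usd = (run.wall_seconds / 3600) * run.hourly_price_usd
    return {
        "name": run.name,
        "p50_ms": cuts[49],
        "p95_ms": cuts[94],
        "tokens_per_s": run.tokens_generated / run.wall_seconds,
        "usd_per_million_tokens": run_cost_usd / run.tokens_generated * 1_000_000,
    }
```

Run `summarize` once per candidate (Intel-based instance, GPU instance, and so on) with the same model, prompt mix, and load profile, then compare the resulting dictionaries side by side. The point is less the arithmetic than the discipline: every option is judged on identical metrics from your workload.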

Developer impact inside the Google Intel AI infrastructure partnership

Developers care about reliable tools. Google hints at tighter integration between Vertex AI and Intel’s oneAPI, which could shrink setup time. If custom operators are on your roadmap, verify compiler support and debug tooling up front. Nobody wants to chase opaque kernel panics during a launch week. But what happens when your workload hits an unexpected memory wall?

Because Intel’s software stack has matured slowly, insist on pilot credits and joint support channels. Treat this like testing a new kitchen range: you need to know how it handles both a slow simmer and a full boil (and whether the knobs get too hot to touch).

Business angle of the Google Intel AI infrastructure partnership

CFOs will like the competitive pressure this creates. If Google and Intel can offer viable alternatives, GPU-heavy pricing may soften. Still, do not assume savings; negotiate based on demonstrated performance. Ask for transparent SLAs tied to capacity availability and network throughput, not just service uptime. The partnership also gives procurement teams leverage to keep multi-cloud options open.

Single-sentence verdict? This alliance is a calculated bid to rebalance power in AI infrastructure.

Questions to press with vendors

- Which regions will host the newest Intel-based instances first?

- How often will firmware and drivers be updated, and who owns rollback risk?

- Will sustained-use discounts apply equally to accelerator and CPU-based SKUs?

- Can you provide side-by-side latency traces for our target model architecture?

Risks and watchpoints for the Google Intel AI infrastructure partnership

Early adopters risk betting on silicon that lags GPUs on certain model sizes. Software maturity gaps could add hidden engineering costs. Vendor lock-in is another concern if optimizations rely on proprietary hooks. Guard against that with portable tooling and open standards where possible.

And do not overlook the ecosystem. Third-party vendors may optimize for Nvidia first, leaving Intel paths a step behind. If your timeline is tight, plan for contingency capacity on existing GPU fleets.

What to do next with the Google Intel AI infrastructure partnership

Set up a 30-day pilot with a bounded workload and clear acceptance criteria. Bring your legal team in early to secure price protections and data-handling clauses. Keep an eye on Intel’s quarterly roadmaps; slips there ripple straight into Google’s availability.
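Acceptance criteria are easier to enforce when they are written down as thresholds before the pilot starts. Here is a minimal Python sketch of that idea. The metric names and every threshold value are placeholders, not recommendations; set them from your own latency, throughput, and cost targets.

```python
# Hypothetical pilot thresholds: "max" means the measured value must not
# exceed the limit, "min" means it must not fall below it.
CRITERIA = {
    "p95_ms": ("max", 250.0),
    "tokens_per_s": ("min", 40.0),
    "usd_per_million_tokens": ("max", 60.0),
}


def evaluate(measured: dict, criteria: dict) -> list:
    """Return a list of human-readable failures; an empty list means pass."""
    failures = []
    for metric, (kind, limit) in criteria.items():
        value = measured.get(metric)
        if value is None:
            # A metric nobody measured counts as a failure, not a pass.
            failures.append(f"{metric}: not measured")
        elif kind == "max" and value > limit:
            failures.append(f"{metric}: {value} exceeds limit {limit}")
        elif kind == "min" and value < limit:
            failures.append(f"{metric}: {value} is below floor {limit}")
    return failures
```

Treating a missing metric as a failure is a deliberate choice here: it keeps a pilot from passing simply because a number was never collected.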

Honestly, the smartest move is to let the partnership compete for your workload rather than granting blind trust.

Where this could go

If Intel executes on faster accelerators and Google supplies capacity at scale, the market finally gets a credible counterweight to GPU dependence. That scenario could reset cloud AI pricing. If not, this remains a press-release boost for Intel and a bargaining chip for Google. Which outcome do you want to bet on?
