Microsoft AI Transcription Model Aims for Enterprise Dominance

Your meetings, support calls, and lecture halls still leak knowledge into noisy, unsearchable audio. Microsoft thinks its new transcription model, championed by Microsoft AI chief Mustafa Suleyman, can clean that up and turn speech into searchable knowledge faster than rivals. The timing matters because budgets for voice AI keep climbing while trust in black-box systems stalls. You need a model that handles accents and industry jargon and respects privacy, without turning deployment into a science project. The pitch: Microsoft will bundle this engine across Teams, Azure, and third-party integrations, lowering the friction for developers who want reliable speech-to-text without stitching together multiple vendors. But does this stack actually deliver, and where are the traps?

Why this matters now

- Pricing pressure is forcing teams to pick one transcription pipeline for everything.

- Latency and accuracy gaps still decide whether sales calls become usable data.

- Data residency and retention rules make or break rollouts in finance and healthcare.

- Developer ergonomics determine if you ship in weeks or stall for a quarter.

What makes the Microsoft AI transcription model different

Microsoft is betting on tight coupling with Azure Cognitive Services (now branded Azure AI services), so speech flows straight into storage, analytics, and compliance tools. That removes the need to shuttle data between vendors. And because Suleyman is pushing a safety-first narrative, expect more out-of-the-box guardrails for content filtering and PII redaction.

Silence can be data too.

“If Microsoft nails this, call centers will not sound the same,” is the line I keep hearing from product leads who have tested early builds.

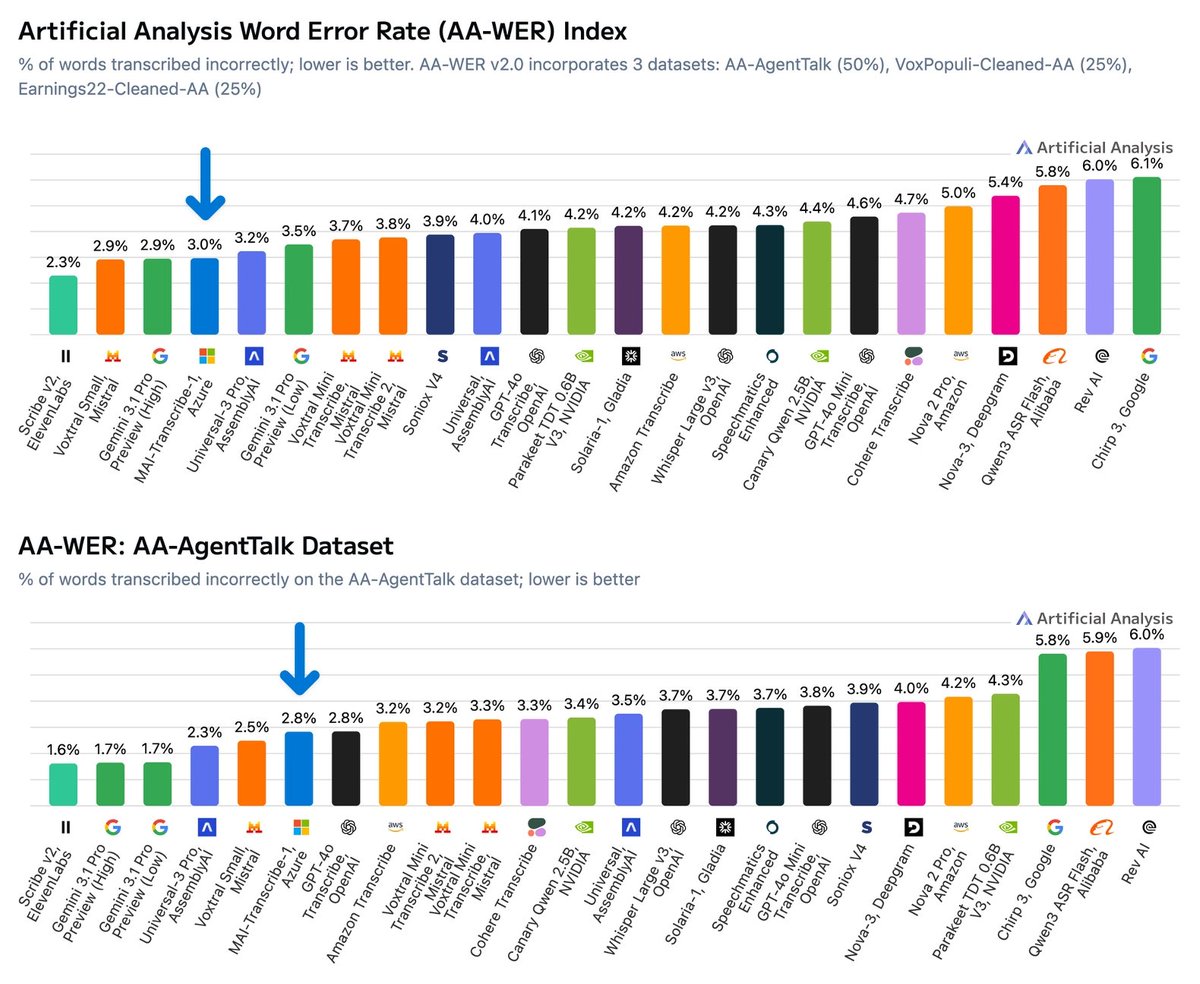

Performance claims center on lower word error rates (WER) on messy audio, but I want to see benchmarks on call-center English, not just studio-quality samples. Who wants another glossy demo that wilts under air-conditioning hum?
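Because the performance argument turns on word error rate, it helps to score your own clips rather than rely on vendor numbers. A minimal sketch: WER is the word-level edit distance (substitutions + deletions + insertions) between a human reference transcript and the model's output, divided by the number of reference words. The lowercase-and-split normalization here is a simplifying assumption; careful evaluations also normalize punctuation and numbers before scoring.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER of `hypothesis` against `reference`.

    Tokenization is a simplifying assumption: lowercase, split on whitespace.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] = edit distance between the first i-1 reference words and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # substitution (or exact match when cost == 0)
            )
        prev = curr
    return prev[len(hyp)] / len(ref)
```

For example, `word_error_rate("please escalate the ticket to tier two support", "please escalate the ticket to tear two")` returns `0.25`: one substitution plus one deletion over eight reference words. Running the same reference clips through each candidate vendor gives you a like-for-like comparison.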

Where the Microsoft AI transcription model fits

Think of it like a new chef joining a busy kitchen (the rest of Azure). The ingredients are there: storage, analytics, security. The question is whether this chef can keep pace during the dinner rush without burning the line.

- Meetings in Teams: Native integration could shrink setup to a toggle. Watch the opt-in defaults if you have strict consent rules.

- Contact centers: Pairing with Dynamics and Power Platform could put real-time prompts in front of agents. Latency needs to stay under two seconds for those prompts to be useful.

- Developer platforms: The Speech SDK promises one API for streaming and batch modes. Test domain adaptation early so your industry jargon does not vanish.
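One way to make "test domain adaptation early" concrete is to measure how many of your domain terms survive transcription. The sketch below is hypothetical: the function name and the whole-word, case-insensitive matching rule are illustrative assumptions, and it expects terms made of letters and digits (a term like "C++" would need a different match rule). Run it on the same clips before and after adding a phrase list or custom vocabulary.

```python
import re
from typing import List, Optional


def jargon_recall(reference: str, hypothesis: str, terms: List[str]) -> Optional[float]:
    """Fraction of domain terms found in the reference that also appear in the hypothesis.

    Returns None when none of the terms occur in the reference transcript.
    """
    def contains(text: str, term: str) -> bool:
        # Whole-word, case-insensitive match, so "APR" does not match inside "April".
        return re.search(r"\b" + re.escape(term) + r"\b", text, re.IGNORECASE) is not None

    expected = [t for t in terms if contains(reference, t)]
    if not expected:
        return None
    kept = [t for t in expected if contains(hypothesis, t)]
    return len(kept) / len(expected)
```

Scoring the reference `"Patient HbA1c is 7.2 and metformin dose stays the same"` against a hypothesis that spells out "H B A one C", with terms `["HbA1c", "metformin", "warfarin"]`, gives `0.5`: warfarin is ignored because it never appears in the reference, and only metformin survives. A per-term breakdown like this shows which jargon vanishes, which an overall WER score can hide.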

How to vet the model before you commit

I have covered enough speech launches to know the danger: pretty demos, painful edge cases. Here is a pragmatic checklist.

- Accuracy under noise: Record test clips with your own floor noise and your speakers' accents. Compare against Whisper or AssemblyAI on the same clips.

- Cost at scale: Run a month-long pilot; burst pricing often hides in long-tail usage that a short trial never exercises.

- Compliance: Map data flows. Where is audio stored, and who can access transcripts? Audit logs are non-negotiable.

- Customization: Test phrase lists and custom vocabulary. But do not overfit; real users always surprise you.

- Failover plan: If the service blips, do you drop audio or queue it? Reliability beats novelty.
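The queue-or-drop question in the failover item is a design choice you can prototype before launch. A minimal sketch, assuming a hypothetical `send` callable (standing in for your real client call) that raises `ConnectionError` when the service blips: chunks queue in arrival order and flush once the service recovers, with a hard cap so a long outage records dropped chunks instead of exhausting memory.

```python
from collections import deque
from typing import Callable, Deque, List


class FailoverBuffer:
    """Queue audio chunks while a (hypothetical) transcription call is failing."""

    def __init__(self, send: Callable[[bytes], str], max_chunks: int = 1000):
        self.send = send                    # assumption: raises ConnectionError on outage
        self.pending: Deque[bytes] = deque()
        self.max_chunks = max_chunks
        self.dropped = 0                    # chunks lost because the buffer was full
        self.transcripts: List[str] = []

    def submit(self, chunk: bytes) -> None:
        """Queue a chunk, then try to flush everything in arrival order."""
        if len(self.pending) >= self.max_chunks:
            self.dropped += 1               # buffer full: count the loss instead of growing
            return
        self.pending.append(chunk)
        self.flush()

    def flush(self) -> None:
        """Send queued chunks oldest-first; stop at the first failure and keep the rest."""
        while self.pending:
            try:
                text = self.send(self.pending[0])
            except ConnectionError:
                return                      # service blip: leave the chunk queued
            self.pending.popleft()          # only dequeue after a successful send
            self.transcripts.append(text)
```

This version drops new chunks once the cap is hit and counts them in `dropped`, so losses surface in monitoring rather than silently. If no audio may be lost, spilling the queue to local disk is the heavier alternative.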

Risks and open questions

Microsoft says safety and privacy sit at the center, yet the company still needs to demonstrate clear retention controls for regulated industries. And while Suleyman's leadership hints at rapid iteration, that speed can unsettle buyers who demand stable APIs. Another concern: how transparent will evaluation data be? Without third-party audits, trust stays thin.

Honestly, the market does not need another speech widget; it needs a workhorse that respects user consent while staying fast.

What teams should do next

Start with a narrow pilot in a high-value workflow, like sales coaching, and measure downstream metrics such as call conversion uplift. Roll findings into your security review, then make a go/no-go decision based on latency and cost variance. If the model hits your thresholds, expand to note-taking or compliance monitoring. If not, keep your fallback vendor live while Microsoft tightens the bolts.
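The go/no-go step can be reduced to a small gate. A minimal sketch, assuming you have per-request latencies and daily spend from the pilot: the 95th-percentile latency (nearest-rank method) and the coefficient of variation of daily cost (standard deviation divided by mean) stand in for "latency and cost variance". Both thresholds are placeholders; the two-second default borrows the contact-center budget from earlier, and the 0.25 cost limit is arbitrary.

```python
import statistics
from typing import Sequence


def p95(values: Sequence[float]) -> float:
    """95th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("need at least one latency sample")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100   # ceil(0.95 * n) in integer arithmetic
    return ordered[rank - 1]


def go_no_go(latencies_s: Sequence[float], daily_costs: Sequence[float],
             max_p95_s: float = 2.0, max_cost_cv: float = 0.25) -> bool:
    """True only if pilot latency and cost stability both meet the (placeholder) thresholds."""
    latency_ok = p95(latencies_s) <= max_p95_s
    # statistics.stdev needs at least two days of cost data
    cost_cv = statistics.stdev(daily_costs) / statistics.mean(daily_costs)
    return latency_ok and cost_cv <= max_cost_cv
```

With steady daily spend such as `[100, 110, 95, 105]` the cost CV is about 0.06 and passes; a single spike, as in `[100, 100, 100, 400]`, pushes it to about 0.86 and the gate fails even if latency is fine.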

Looking ahead

Microsoft has the distribution muscle to make this model the default choice in enterprise speech. Whether it earns that slot depends on transparent benchmarks and predictable pricing. Are you ready to trust a single vendor with every spoken word your company produces?