Nvidia CUDA Strategy Explained

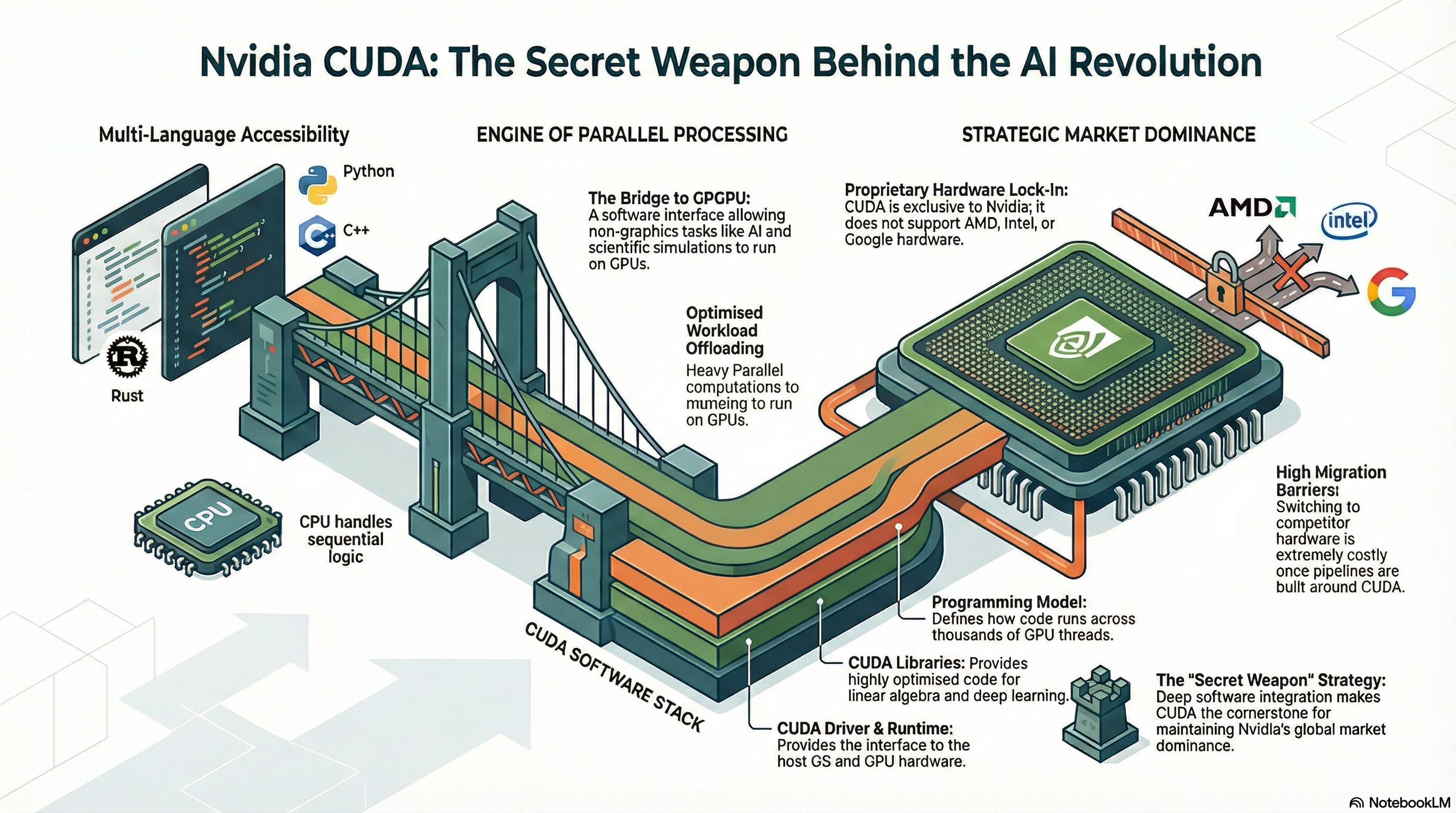

If you are trying to understand why Nvidia keeps widening its lead in AI, the answer is not just faster chips. It is the Nvidia CUDA strategy. That matters now because companies are spending billions on AI infrastructure, and many still frame the market as a hardware race. That view misses the real lock-in. CUDA, Nvidia’s software platform for programming GPUs, has spent years turning silicon into an ecosystem. Developers know it. Researchers build on it. Cloud vendors sell around it. And competitors keep running into the same wall. If you are choosing AI tools, planning infrastructure, or betting on who wins the next phase of machine learning, you need to look past the box in the server rack. The software stack is the moat, and CUDA sits at the center of it.

What matters most

- CUDA is Nvidia’s strongest competitive edge, because it ties developers, frameworks, and enterprise workflows to its GPUs.

- AI buyers often focus on chip specs, but software support usually decides how fast teams can ship real work.

- Rivals like AMD and Intel can build capable hardware, yet matching CUDA’s developer base is a much slower fight.

- The market treats Nvidia like a chip company. In practice, it behaves a lot like a platform company.

Why the Nvidia CUDA strategy matters more than raw chip speed

Nvidia introduced CUDA in 2006 as a way to let developers use its GPUs for general-purpose computing. That sounds narrow. It was not. It gave researchers and engineers a stable way to write code and keep building on it across successive generations of Nvidia hardware.

That early move now looks seismic. Much of modern AI runs on software layers such as CUDA, cuDNN, and TensorRT, along with their integrations into PyTorch and TensorFlow. The chip is still vital, of course, but the chip alone does not train a model or optimize inference costs. The surrounding stack does.

Look, this is the part many investors and executives miss. Buying AI hardware without a mature software environment is like buying a Formula 1 engine without a pit crew. The machine may be fast, but the system around it wins races.

How CUDA became Nvidia’s moat

Developer habits are hard to break

Once developers learn a toolchain and companies build production systems around it, switching gets expensive. Code has to be rewritten. Libraries have to be retested. Performance tuning has to be redone. Teams also need people who know the new stack, which is its own tax.

That is why CUDA matters so much. It is not only a programming model. It is a long-running standard inside large parts of AI and high-performance computing.

Framework support compounds the lead

Popular machine learning frameworks have long offered strong support for Nvidia GPUs. That creates a feedback loop. Developers choose Nvidia because support is deep. Framework maintainers keep optimizing for Nvidia because that is where users are. Then enterprises follow, because they want the lowest-friction path.

CUDA did not win because hardware stopped mattering. It won because Nvidia made hardware easier to use than most rivals could match.

Enterprise buyers hate surprises

Big companies care about more than benchmark charts. They want predictable deployment, stable drivers, tooling, security updates, and vendor support. Nvidia has spent years building that boring but non-negotiable layer. Boring wins a lot of budget meetings.

Nvidia CUDA strategy and AI lock-in

Is this lock-in? Yes, in the plain business sense. The Nvidia CUDA strategy makes it easier to stay than to leave. That does not mean customers are trapped forever. It means switching has real cost, and that cost protects Nvidia’s position.

Wired’s framing gets at the core issue. Nvidia may sell GPUs, but its advantage looks more like software platform power than commodity hardware economics. Apple did something similar with devices and operating systems. Microsoft did it with Windows. Different market, same logic.

CUDA also gives Nvidia time. Even if a competitor launches a strong AI accelerator, customers still need tooling, documentation, training, compatibility layers, and proof that the system works at scale. Hardware can close a gap in a product cycle. Ecosystems usually take years.

What AMD, Intel, and others are up against

Competitors are not standing still. AMD has ROCm. Intel has its own AI software efforts. Open standards such as SYCL and projects tied to the broader open source community aim to reduce dependence on one vendor. Those moves matter. They may gain real traction, especially where buyers want lower costs or less concentration risk.

But here is the hard truth. Matching CUDA is less like launching a new processor and more like building a city. You need roads, utilities, habits, service workers, and a reason for people to move in. A good chip is the foundation. It is not the whole place.

Where rivals may have an opening

- Price pressure. If Nvidia hardware stays scarce or expensive, buyers will work harder to qualify alternatives.

- Open source momentum. Better compatibility layers could make mixed-vendor environments less painful.

- Specialized workloads. Some inference tasks do not need the full CUDA ecosystem, especially if cost per query matters most.

- Regulatory scrutiny. Governments may pay closer attention if one stack becomes too dominant in critical AI infrastructure.

Honestly, that still does not guarantee a shake-up. Enterprises often stick with the known option until pain becomes obvious.

What this means if you buy or build AI systems

If you run an engineering team, the lesson is simple. Evaluate AI infrastructure as a software decision first and a hardware decision second. Ask how quickly your team can train, deploy, tune, monitor, and maintain models on a given stack. Ask how portable your code will be in two years. And ask what happens if supply tightens again.
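
One way to keep the portability question answerable is to confine hardware-specific choices to a single place in the code. The sketch below assumes PyTorch as the framework, and `pick_device` is a hypothetical helper name used for illustration, not part of any library. Treat it as a starting point rather than a migration plan.

```python
def pick_device() -> str:
    """Return "cuda" when PyTorch reports a usable GPU, otherwise "cpu".

    pick_device is a hypothetical helper for illustration. It falls back to
    "cpu" when PyTorch is not installed, so the sketch runs anywhere.
    """
    try:
        import torch  # optional dependency; assumed framework for this sketch
    except ImportError:
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


# Decide once, then pass the result around instead of hard-coding it.
device = pick_device()
print(f"Running on: {device}")
```

Model and data code can then take `device` as a parameter. That does not remove switching cost, but it may shrink the number of places a later migration has to touch.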

A practical checklist helps:

- Measure developer time, not just GPU throughput.

- Check framework compatibility for your exact workloads.

- Estimate migration cost before you assume multi-vendor flexibility.

- Review support for inference optimization, orchestration, and monitoring.

- Model the risk of relying too heavily on one vendor.
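
The first and third items on that list can be turned into rough arithmetic. The sketch below is a minimal illustration, not a pricing model: the `StackOption` fields, the function names, and every number in it are hypothetical placeholders for your own estimates.

```python
from dataclasses import dataclass


@dataclass
class StackOption:
    """Hypothetical inputs for one candidate AI stack (all values are estimates)."""
    name: str
    hardware_cost: float          # purchase or rental cost over the planning period
    engineer_hours: float         # hours to train, deploy, tune, and maintain
    migration_hours: float = 0.0  # one-time hours to port and retest existing code


def total_cost(option: StackOption, hourly_rate: float) -> float:
    """Hardware cost plus the cost of all engineering time, in one currency."""
    people_hours = option.engineer_hours + option.migration_hours
    return option.hardware_cost + people_hours * hourly_rate


def cheaper_option(a: StackOption, b: StackOption, hourly_rate: float) -> StackOption:
    """Return whichever option has the lower total cost."""
    return min((a, b), key=lambda o: total_cost(o, hourly_rate))


# Illustrative numbers only; replace them with your own estimates.
incumbent = StackOption("incumbent", hardware_cost=500_000, engineer_hours=2_000)
challenger = StackOption(
    "challenger", hardware_cost=350_000, engineer_hours=2_500, migration_hours=1_500
)

for option in (incumbent, challenger):
    print(option.name, total_cost(option, hourly_rate=100.0))
```

With these made-up inputs, the option with cheaper hardware ends up costing more once engineering and migration hours are priced in. Different estimates can flip the result, which is the reason to run the numbers before committing.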

Plenty of teams buy on headline performance and regret it later. The best infrastructure choices usually feel a little less flashy and a lot more usable.

Why calling Nvidia a software company is not a stretch

People hear that phrase and push back because Nvidia still makes chips. Fair enough. But business identity is not about what ships in a box. It is about where pricing power, customer dependence, and strategic control come from.

By that standard, CUDA is central to Nvidia’s position. It shapes developer behavior, steers enterprise purchasing, and keeps adjacent tools tied to Nvidia’s roadmap. That is platform behavior. And platform companies tend to keep more control than hardware vendors that compete on specs alone.

(You can see this every time a new GPU launch triggers more discussion about software support than transistor counts.)

What to watch next

The next real test for the Nvidia CUDA strategy is whether the rest of the market can make software portability feel normal rather than painful. If that happens, Nvidia’s lead narrows. If it does not, the company keeps acting less like a chipmaker and more like the operating layer of modern AI.

So where should you place your attention? Watch developer tooling, framework optimization, and migration friction. Those signals often tell you more than the latest benchmark chart. And if rivals want to dent Nvidia, they will need to win hearts, habits, and workflows, not just one speed test.