Nvidia Neural Texture Compression: VRAM Savings Without the Blur

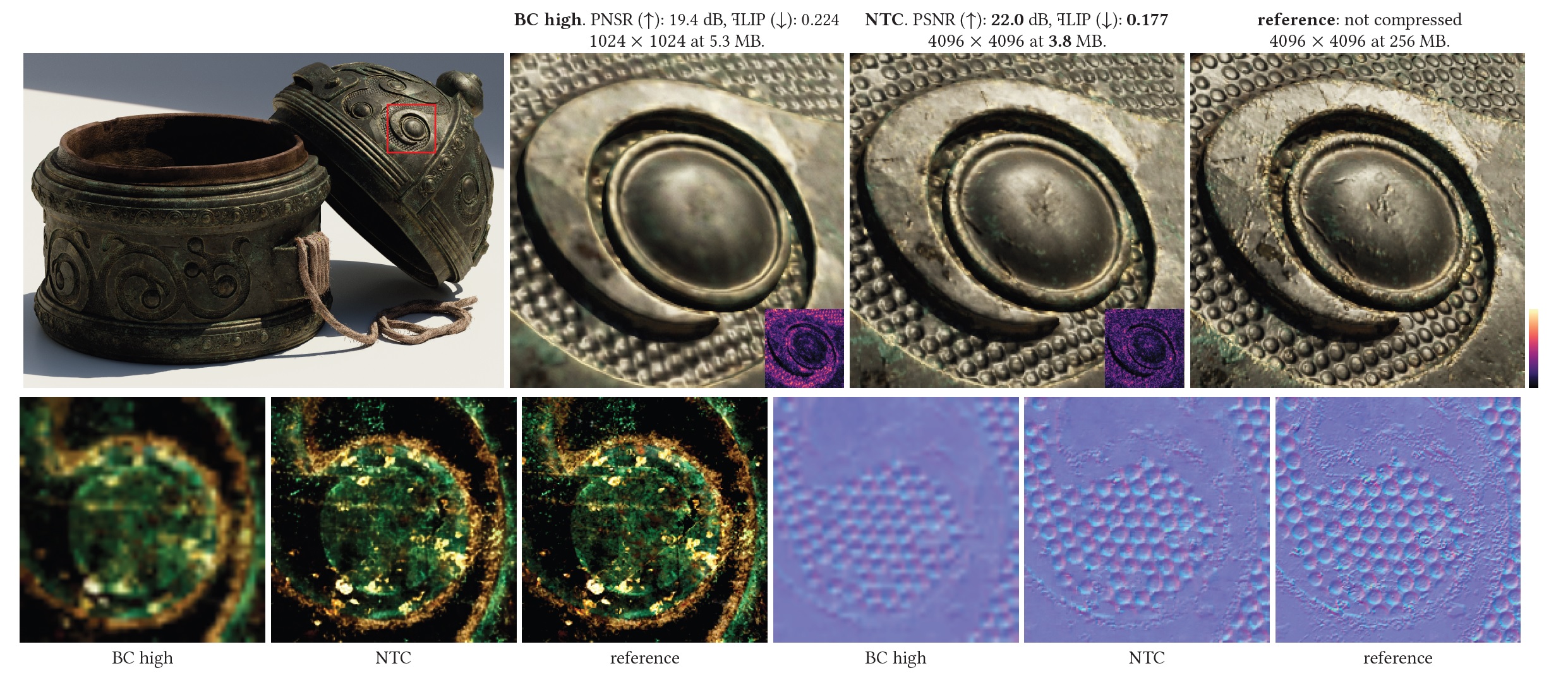

You want sharper visuals without paying the VRAM tax, and Nvidia thinks its Neural Texture Compression (NTC) can deliver. The company’s demo shows 6.5 GB of textures squeezed down to 970 MB with no visible loss, a seismic claim in a market where memory prices sting. NTC uses an RTX Tensor core pass to decode compressed textures on the fly, promising smaller downloads, faster loads, and fewer stutters in open world games. But does the tech hold up outside a controlled demo, and how soon could you actually use it? Let’s unpack the playbook and see where NTC fits in your upgrade plans.

What Stands Out

- NTC demo claims up to 85% VRAM savings while matching image quality frame by frame.

- Decoding runs on Tensor cores, freeing CUDA cores for shading and ray tracing.

- Smaller texture packs could cut install sizes and patch times for AAA titles.

- Quality hinges on how well each texture set is trained, and early integrations will vary with engine support.

Why Nvidia Neural Texture Compression matters right now

VRAM has become a tax on modern PC builds.

High-res textures balloon game downloads and push 8 GB cards to their limits. NTC shifts the burden: instead of storing giant textures, the GPU reconstructs them with a neural decoder. That means a 970 MB footprint could stand in for 6.5 GB, giving midrange cards breathing room. The approach echoes how high-efficiency audio codecs trade bits for smarts, only here the Tensor cores act like a dedicated DSP for pixels. If you run RTX 30 or 40 series hardware (think RTX 4070), you already own the silicon that can make this work.
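A quick sanity check shows the headline savings figure follows directly from the two demo numbers. This sketch assumes "GB" is decimal (6.5 GB = 6500 MB); using binary units instead shifts the result only slightly.

```python
# Back-of-the-envelope check of Nvidia's demo figures.
# Assumption: decimal units, so 6.5 GB = 6500 MB.

def texture_savings(original_mb: float, compressed_mb: float) -> tuple[float, float]:
    """Return (percent of memory saved, compression ratio)."""
    saved_pct = (1.0 - compressed_mb / original_mb) * 100.0
    ratio = original_mb / compressed_mb
    return saved_pct, ratio

saved, ratio = texture_savings(6500, 970)
print(f"{saved:.1f}% saved, {ratio:.1f}x smaller")  # prints "85.1% saved, 6.7x smaller"
```

In other words, "up to 85%" and "6.5 GB to 970 MB" describe the same roughly 6.7:1 reduction.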

How Nvidia Neural Texture Compression works under the hood

Nvidia trains a lightweight model per texture set. At runtime, the game streams compact data, the Tensor cores decode it into high-frequency detail, and the shader pipeline receives ready-to-sample textures. Because decoding is offloaded, CUDA and RT cores keep pushing frames. The company pairs NTC with GDeflate for asset streaming, positioning the two as a one-two punch for load times. Could this reduce traversal hitching in open worlds? That is the real test.
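To make "decode on the fly" concrete, here is a toy decoder. It sketches the general idea behind Nvidia's neural texture compression research (a coarse grid of learned latent features, decoded per sample by a small MLP), not the shipping NTC format. The grid size, latent width, layer sizes, and the random, untrained weights are all hypothetical, and a real implementation runs this math on Tensor cores inside a shader rather than in Python.

```python
import math
import random

# Toy neural texture decoder, NOT Nvidia's actual format or weights.
LATENT_DIM = 4   # features stored per grid cell (assumed)
GRID = 8         # 8x8 latent grid stands in for a much larger texture (assumed)
HIDDEN = 8       # hidden width of the decoder MLP (assumed)
CHANNELS = 3     # e.g. RGB albedo

rng = random.Random(0)
# The "compressed texture": a coarse grid of latent vectors.
latents = [[[rng.uniform(-1, 1) for _ in range(LATENT_DIM)]
            for _ in range(GRID)] for _ in range(GRID)]
# The "lightweight model": two tiny weight matrices (random here, trained in practice).
w1 = [[rng.uniform(-1, 1) for _ in range(LATENT_DIM)] for _ in range(HIDDEN)]
w2 = [[rng.uniform(-1, 1) for _ in range(HIDDEN)] for _ in range(CHANNELS)]

def sample_latent(u: float, v: float) -> list[float]:
    """Bilinearly interpolate the latent grid at UV coordinates in [0, 1]."""
    x, y = u * (GRID - 1), v * (GRID - 1)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, GRID - 1), min(y0 + 1, GRID - 1)
    fx, fy = x - x0, y - y0
    out = []
    for k in range(LATENT_DIM):
        top = latents[y0][x0][k] * (1 - fx) + latents[y0][x1][k] * fx
        bottom = latents[y1][x0][k] * (1 - fx) + latents[y1][x1][k] * fx
        out.append(top * (1 - fy) + bottom * fy)
    return out

def decode_texel(u: float, v: float) -> list[float]:
    """Run the tiny MLP on the interpolated latent; outputs lie in (0, 1)."""
    z = sample_latent(u, v)
    hidden = [max(0.0, sum(w * x for w, x in zip(row, z))) for row in w1]  # ReLU
    logits = [sum(w * h for w, h in zip(row, hidden)) for row in w2]
    return [1.0 / (1.0 + math.exp(-t)) for t in logits]  # sigmoid

print(decode_texel(0.25, 0.75))
```

The design point is that only the latent grid and the small weight matrices need to sit in memory; texels are computed when sampled, which is why the decode work lands on the GPU's math units instead of in VRAM.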

Tensor cores handle the heavy lifting, keeping shading lanes clear for lighting and ray tracing.

Where you might see NTC first

Engine support dictates rollout speed. Nvidia already has an NTC plug-in for Unreal Engine 5 in the lab, and the company hints at integration with asset pipelines that developers already use. I expect tech-forward studios to experiment in live service titles where download size and patch cadence hurt retention. Indie teams may wait for turnkey tooling and clearer licensing terms.

Developer checklist to trial NTC

- Start with non-critical textures to gauge artifacts on diverse scenes.

- Profile Tensor core utilization to avoid starving DLSS or frame generation.

- Test streaming behavior on PCIe 3.0 systems to catch bandwidth edge cases.

- Compare VRAM residency with and without NTC across 1080p, 1440p, and 4K.
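For the last checklist item, a small helper keeps the per-resolution comparison consistent. This is a hypothetical sketch: the `VramSample` type and `summarize` function are illustrative names, and the residency numbers have to come from your own profiler or driver captures, since nothing here measures VRAM.

```python
from dataclasses import dataclass

# Hypothetical helper: compare texture residency with NTC off vs. on.
# Inputs are your own measurements; this code does not query the GPU.

@dataclass
class VramSample:
    resolution: str      # e.g. "1080p", "1440p", "4K"
    baseline_mb: float   # texture residency with NTC off
    ntc_mb: float        # texture residency with NTC on

def summarize(samples: list[VramSample]) -> dict[str, float]:
    """Return the percent of VRAM saved at each resolution."""
    report = {}
    for s in samples:
        if s.baseline_mb <= 0:
            raise ValueError(f"baseline for {s.resolution} must be positive")
        report[s.resolution] = round((1 - s.ntc_mb / s.baseline_mb) * 100, 1)
    return report
```

Capturing each pair from the same scene replay keeps the comparison fair across resolutions.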

Trade-offs and open questions

Every neural decode adds some latency, however small. How does NTC behave when DLSS, frame generation, and ray tracing all lean on the same Tensor pool? What happens when mods swap in custom texture packs trained on different datasets? Until real games ship with NTC toggles, buyers should treat the 85% figure as a ceiling, not a guarantee. And yes, you will need RTX-class hardware; older GTX cards are out.
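Frame-budget arithmetic shows why even a small decode cost matters more at high frame rates: a fixed number of milliseconds eats a larger share of each frame as the target rate climbs. The decode cost passed in below is a hypothetical input you would measure in your own engine; no NTC timing is assumed here.

```python
# Share of the per-frame time budget consumed by a fixed decode cost.
# decode_ms is a hypothetical, measured-by-you input.

def frame_budget_ms(target_fps: float) -> float:
    """Milliseconds available per frame at a given frame rate."""
    return 1000.0 / target_fps

def decode_share(decode_ms: float, target_fps: float) -> float:
    """Fraction of the frame budget spent on neural texture decoding."""
    return decode_ms / frame_budget_ms(target_fps)

for fps in (60, 120, 240):
    print(f"{fps} fps: {frame_budget_ms(fps):.2f} ms per frame")
```

The same cost that is negligible at 60 fps can become noticeable at the high output rates frame generation targets.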

Practical tips if you want in early

If you mod, watch for community texture packs that adopt NTC once the tools are widely available. Developers should run A/B captures from fixed seeds to catch subtle specular shifts that a single static screenshot can hide. Benchmark with scene replays rather than live runs to isolate VRAM gains from CPU variance. Think of it like swapping a bulky pantry for a tight spice rack: you save space, but only if you keep the ingredients labeled.
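One way to make A/B captures objective is to score them with PSNR, a standard image-fidelity metric, alongside the worst single-pixel error. An average like PSNR can hide one small bright specular shift, which the max-error check catches. This sketch assumes both captures are already loaded as equal-length flat lists of 8-bit channel values; loading them from your capture tool is out of scope.

```python
import math

# A/B capture comparison: NTC off (reference) vs. NTC on (test).
# Assumes flat lists of 8-bit channel values of equal length.

def psnr(reference: list[int], test: list[int], max_value: int = 255) -> float:
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    if len(reference) != len(test) or not reference:
        raise ValueError("captures must be non-empty and the same size")
    mse = sum((a - b) ** 2 for a, b in zip(reference, test)) / len(reference)
    if mse == 0:
        return math.inf  # identical captures
    return 10 * math.log10(max_value ** 2 / mse)

def worst_pixel_error(reference: list[int], test: list[int]) -> int:
    """Largest absolute per-channel difference, useful for spotting specular shifts."""
    return max(abs(a - b) for a, b in zip(reference, test))
```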

Where this could lead next

Nvidia is lining up NTC with DLSS, RTX IO, and GDeflate to make asset delivery as dynamic as rendering. If it works, midrange GPUs stay relevant longer, and Steam players see fewer 100 GB installs. If it stumbles, developers may stick with tried-and-true BCn compression. The stakes feel high because VRAM costs remain stubborn and console memory budgets shape multiplatform targets. Will studios bet on a neural decoder in their shipping builds? That is the question to watch.
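To judge any NTC savings figure, it helps to know what the BCn baseline already delivers. BC1 and BC4 store each 4x4 block of texels in 8 bytes, BC5 and BC7 in 16 bytes, so they already shrink RGBA8 by 8:1 or 4:1 before any neural compression enters the picture. The 4096x4096 texture below is an arbitrary example size.

```python
# Footprint of fixed-rate block-compressed (BCn) textures vs. uncompressed RGBA8.
BYTES_PER_BLOCK = {"BC1": 8, "BC4": 8, "BC5": 16, "BC7": 16}  # per 4x4 texel block

def bcn_size_bytes(width: int, height: int, fmt: str, mips: bool = True) -> int:
    """Size of a BCn texture, optionally including the full mip chain."""
    total = 0
    w, h = width, height
    while True:
        blocks = ((w + 3) // 4) * ((h + 3) // 4)  # partial blocks round up
        total += blocks * BYTES_PER_BLOCK[fmt]
        if not mips or (w == 1 and h == 1):
            break
        w, h = max(1, w // 2), max(1, h // 2)
    return total

def rgba8_size_bytes(width: int, height: int) -> int:
    """Uncompressed RGBA8, top mip only."""
    return width * height * 4

for fmt in ("BC1", "BC7"):
    mib = bcn_size_bytes(4096, 4096, fmt, mips=False) / 2**20
    print(f"{fmt}: {mib:.1f} MiB vs RGBA8: {rgba8_size_bytes(4096, 4096) / 2**20:.1f} MiB")
```

Whether a given NTC comparison is measured against BCn or against uncompressed textures changes how impressive the ratio really is.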

Look for the first public UE5 demo drop and pay attention to modder forums; early proofs will show whether NTC is a keeper or just another lab teaser.