OpenAI Trial Evidence: What Musk’s Exhibits Actually Show

You are probably seeing headlines about secret emails, broken promises, and a courtroom fight between Elon Musk, Sam Altman, and OpenAI. The real question is simpler. Does the OpenAI trial evidence actually prove that OpenAI betrayed its founding mission, or does it mostly show a messy startup history with competing versions of the truth? That matters now because OpenAI sits at the center of the AI market, and this case could shape how people think about nonprofit control, commercial AI labs, and who gets to claim the moral high ground. Look, old emails can be explosive. They can also be cherry-picked. So the smart move is to separate what the exhibits appear to show, what they do not, and why this legal fight may turn more on governance and intent than on one dramatic document.

What stands out fast

- Musk’s exhibits aim to show a gap between OpenAI’s early nonprofit mission and its later commercial structure.

- The documents reportedly include emails and internal messages tied to fundraising, control, and the push toward advanced AI development.

- Context matters a lot. Startup discussions are often blunt, tactical, and inconsistent.

- The legal bar is higher than the public relations bar. Something can look bad in headlines and still fall short in court.

Why the OpenAI trial evidence matters beyond the feud

This case is bigger than personal drama. OpenAI helped set the template for a new kind of AI organization, one that mixes nonprofit origins with a for-profit engine built to raise huge sums of money. That hybrid model has always looked unstable.

And now the strain is public.

If Musk can show that OpenAI’s leaders made concrete commitments and then moved in the opposite direction, the case gets more serious. If the evidence shows only internal debate, strategic repositioning, or vague mission language, the moral argument may land harder than the legal one.

Founding ideals sound clean on day one. Scaling a frontier AI lab rarely does.

Think of it like building a stadium. The original sketch may promise open public space, easy access, and civic value. Then construction starts, costs explode, and luxury boxes show up. People ask whether the builders adapted or abandoned the plan. That is the fight here.

What Musk’s exhibits appear to argue

Based on reporting from The Verge, the exhibits in the case focus on early OpenAI communications and organizational decisions. The broad argument is that OpenAI was launched with a public-interest mission, then shifted toward a model that benefited private investors and concentrated control.

1. Early mission statements and promises

Musk’s side appears to point to the founding story itself. OpenAI was framed as a counterweight to concentrated AI power, especially at firms like Google. The nonprofit identity was part of that pitch.

But mission language is slippery. Companies and nonprofits alike use big ideals to rally support. A court usually wants something more precise than aspirational rhetoric. Was there a binding agreement, or just a shared direction?

2. Internal debate over control and funding

One of the sharper issues in the OpenAI trial evidence is control. Who was supposed to steer the lab, and under what terms? Frontier AI research is expensive. Training top models requires chips, data, talent, and infrastructure at a scale that burns cash fast.

So if internal emails show leaders wrestling with the need for capital, that alone is not shocking. Honestly, it would be shocking if they were not. The harder question is whether the push for funding changed the core deal that brought people together in the first place.

3. The move toward a capped-profit structure

OpenAI’s 2019 shift to a capped-profit model has long been the flashpoint. Supporters say it was the only credible way to fund advanced AI work while keeping some mission guardrails. Critics say it opened the door to exactly the kind of commercial drift the nonprofit label was supposed to prevent.

(And yes, both arguments can be partly true.)

If the exhibits show that leaders privately understood this shift as a practical necessity, that may help explain the move. If they show something stronger, such as knowingly saying one thing in public while planning another in private, that would carry more legal and political weight.

Where the case may still look thin

Here is the part that gets lost in social media summaries. Damaging documents are not the same as winning documents.

- Founding discussions are often loose. People say ambitious, conflicting things in early-stage projects.

- Mission drift is hard to prove as fraud. Courts tend to look for clear promises, reliance, and measurable harm.

- Nonprofit and for-profit structures can coexist awkwardly. Awkward is not automatically unlawful.

- Musk’s own role may complicate the story. If he pushed for certain directions in OpenAI’s early days, the narrative gets less tidy.

That last point matters. A lot. If the record shows that multiple founders explored power, scale, and monetization in different ways, the case stops looking like a simple betrayal and starts looking like a factional split over who would control the future.

What readers should watch in the OpenAI trial evidence

If you want to judge the case without getting trapped by hype, focus on a few concrete things.

- Specific language. Are there explicit commitments, or broad value statements?

- Timing. Did key decisions happen before or after major fundraising pressure?

- Consistency. Do private messages sharply contradict public claims?

- Governance details. Who had authority to approve structural changes?

- Remedies. What is Musk actually asking the court to do?

Why does the remedy matter? Because lawsuits are not just about proving a point. They are about what a judge can realistically order. Winning a finding about OpenAI’s past conduct is one thing. Forcing structural changes is another.

What this says about AI governance

This dispute exposes a basic problem in the AI sector. Everyone likes the language of safety, openness, and public benefit until the compute bill arrives. Then the conversation changes.

That is not unique to OpenAI. Anthropic, Google DeepMind, Meta, and xAI all face versions of the same pressure. Advanced model development rewards scale, and scale rewards capital. Public-interest promises can survive in that system, but only if governance rules are hard-edged and non-negotiable.

The real lesson is not that one lab changed. It is that soft mission language breaks under hard funding pressure.

As a veteran tech reporter, I think that is the least flattering and most believable reading. People want a villain. The documents may instead show a more familiar Silicon Valley pattern, where ideals hold until growth demands something expensive and ugly.

What happens next

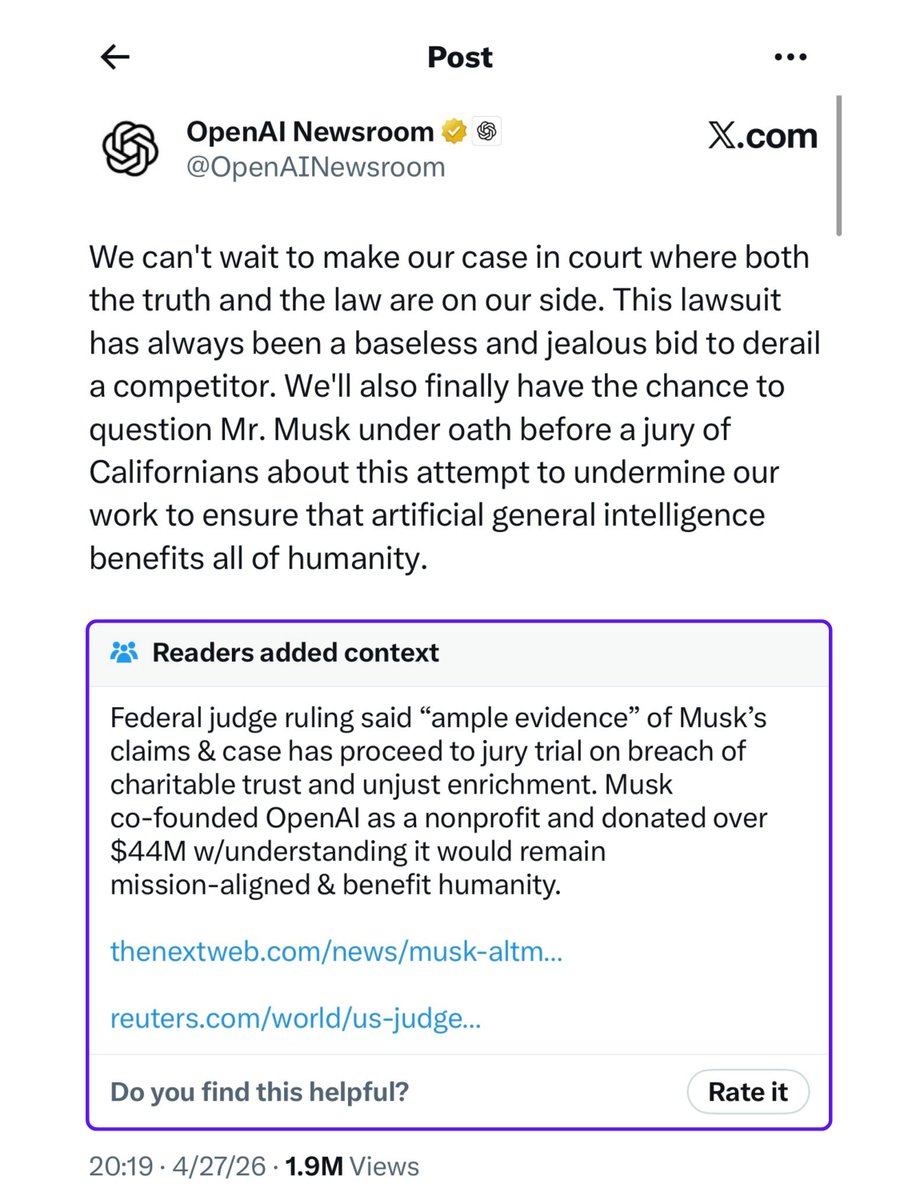

The headlines will keep chasing the most dramatic exhibit. You should ignore the noise and watch for the court’s response to the actual claims, especially around contractual commitments, fiduciary duties, and whether the nonprofit mission created enforceable obligations.

If stronger documents emerge, the case could tighten quickly. If not, this may remain a potent reputational attack rather than a decisive legal blow. Either way, the OpenAI trial evidence is forcing a question the whole AI industry has tried to dodge. What good is a lofty founding mission if it cannot survive contact with money?