US AI Trust Survey 2026: Why Adoption Outpaces Confidence

More Americans are bringing generative apps into daily work, yet the new AI trust survey shows confidence slipping. You face a strange paradox: usage rises because tools feel unavoidable, while faith in the outputs erodes after every botched answer. The data signals a credibility gap that hits small teams and enterprises alike. So what do you do when AI feels mandatory but risky? I have covered these swings for years, and this one feels seismic because it mixes productivity pressure with a trust drought. The question nags at every leader: can you keep deploying models while your staff doubts the results?

What the Numbers Really Say

- Adoption climbed across age groups, with the steepest gains among professionals under 35.

- Trust ratings fell year over year, especially on accuracy and bias handling.

- Workplace AI use shifted from experimentation to routine tasks.

- Higher income brackets reported both higher usage and sharper skepticism.

Why Adoption Beats Trust in the AI Trust Survey

Look, incentives drive behavior. Teams grab AI because deadlines shrink and budgets stay flat, and the AI trust survey confirms that pressure. But trust lags for three reasons: opaque model updates, visible hallucinations, and thin accountability when outputs fail. Think of it like relying on a rookie pitcher who throws fast but misses the strike zone too often. You keep sending them to the mound because you have no bench, yet every walk chips away at clubhouse faith.

“People feel they must use AI to keep pace, but they do not feel they can rely on it.”

How to Raise AI Credibility at Work

Here’s the thing: you cannot fix trust with slogans. You need mechanics.

- Set accuracy baselines on real workloads, not demos. Track them weekly and publish the numbers.

- Demand source transparency by logging which models and data versions feed each output.

- Create red-team drills so staff can safely break prompts and reveal failure modes.

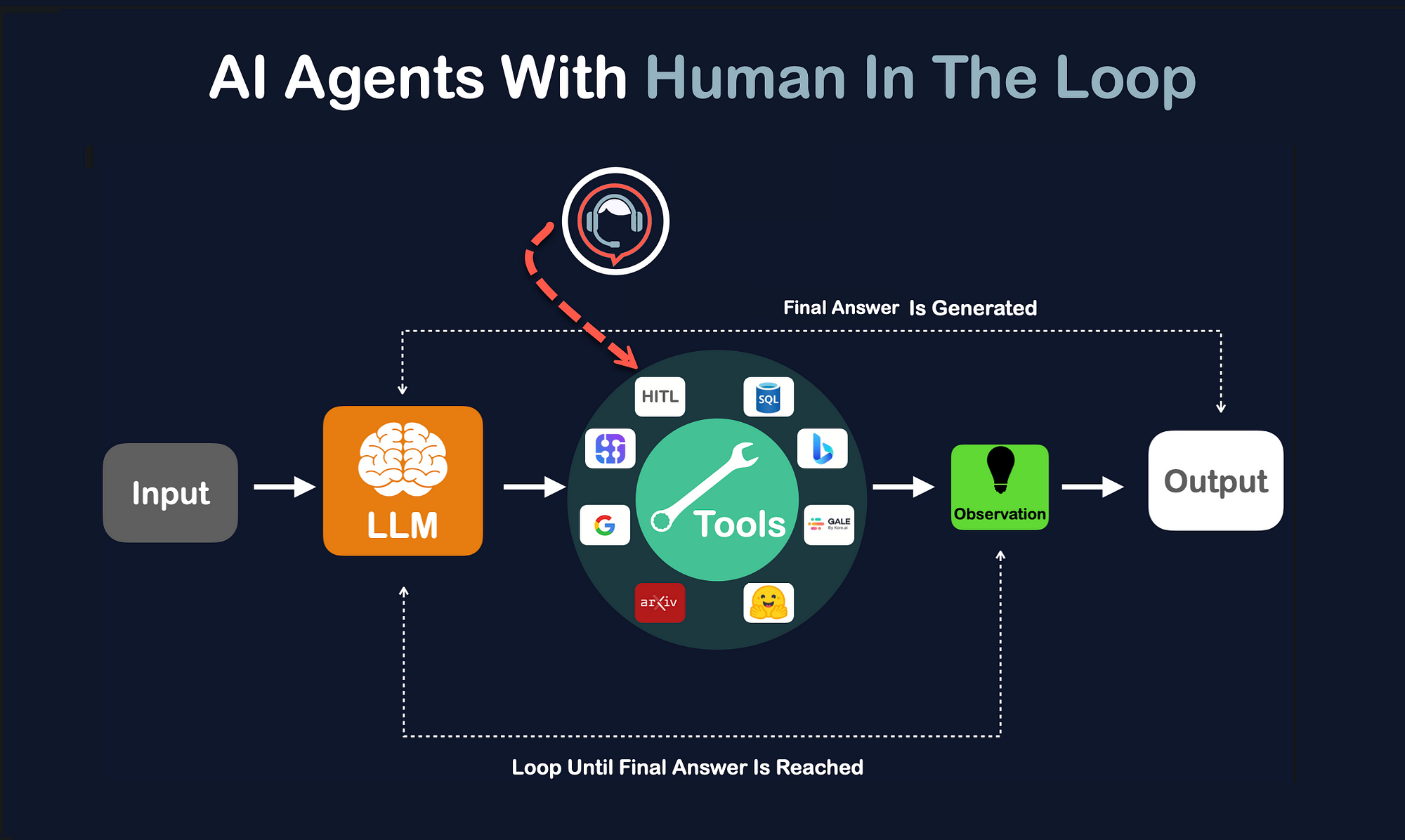

- Route critical decisions through human review until error rates fall to an agreed threshold.

A single misfire can undo months of adoption momentum.
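To make those mechanics concrete, here is a minimal sketch of two of them working together: logging which model and data version produced each output, and keeping a human in the loop until the measured error rate drops below an agreed threshold. Everything named here (`OutputRecord`, `needs_human_review`, the 2% threshold, the 50-sample minimum) is a hypothetical illustration, not something taken from the survey or from any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OutputRecord:
    """One graded AI output plus its provenance (all field names are hypothetical)."""
    model_version: str
    data_version: str
    is_critical: bool
    was_correct: bool  # set after a person grades the output on a real workload


def error_rate(records):
    """Fraction of graded outputs that were wrong."""
    wrong = sum(1 for r in records if not r.was_correct)
    return wrong / len(records)


def needs_human_review(is_critical, recent_records, threshold=0.02, min_samples=50):
    """Decide whether an output should be routed to a person.

    Assumed policy: non-critical outputs skip review; critical outputs are
    reviewed whenever there is too little graded data to trust the error
    rate, or the measured error rate is still above the agreed threshold.
    """
    if not is_critical:
        return False
    if len(recent_records) < min_samples:
        return True
    return error_rate(recent_records) > threshold


# Example: 100 graded outputs, the first 5 of them wrong (5% error rate).
graded = [OutputRecord("model-v3", "data-2026-01", True, i >= 5) for i in range(100)]
print(needs_human_review(True, graded))  # True: 5% is above the 2% threshold
```

The point of the `min_samples` guard is that an empty log should never read as a perfect record. Until you have enough graded outputs to measure, critical work stays with a reviewer.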

Regulation, Liability, and the Cost of a Bad Answer

Policy is catching up. New state bills are pushing disclosure for automated decisions in hiring and lending, and that changes your risk math. If you deploy a model that wrongly denies a loan, you own the fallout. Imagine running a kitchen where every dish needs a tasting spoon; the extra step slows service, but it keeps customers safe and loyal.

What This Means for Vendors and Buyers

Vendors love to tout accuracy claims without revealing test sets. Buyers should push back and ask for repeatable benchmarks and third-party audits. And if a model cannot show consistent performance across demographics, why sign the contract?
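If you want a number to put in front of a vendor, one simple option is the gap between the best-served and worst-served group on your own test cases. The sketch below assumes you already have graded results tagged with a group label; the function names and the sample data are hypothetical, and the gap is only one of several fairness measures an auditor might use.

```python
from collections import defaultdict


def accuracy_by_group(results):
    """Compute accuracy per group from (group_label, was_correct) pairs."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for group, was_correct in results:
        totals[group] += 1
        if was_correct:
            correct[group] += 1
    return {group: correct[group] / totals[group] for group in totals}


def max_accuracy_gap(results):
    """Difference between the highest and lowest per-group accuracy."""
    rates = accuracy_by_group(results)
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


# Made-up grading data: group "a" is right 9 times in 10, group "b" 7 in 10.
sample = (
    [("a", True)] * 9 + [("a", False)]
    + [("b", True)] * 7 + [("b", False)] * 3
)
print(accuracy_by_group(sample))  # {'a': 0.9, 'b': 0.7}
```

A headline accuracy of 80% would hide that spread entirely, which is why the per-group breakdown belongs in the contract conversation.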

Practical Checklist Before You Roll Out Another Model

- Is there a live dashboard showing drift and error trends?

- Do you have budget for human QA on critical workflows?

- Have you tested prompts against your compliance rules?

- Can you pause deployment quickly if metrics slide?
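The last question on that list is easier to answer if the pause rule is written down before anything goes wrong. Here is one way it might look, assuming you track a weekly error rate against a baseline; `should_pause`, the two-point tolerance, and the two-week window are illustrative choices, not a standard.

```python
def should_pause(weekly_error_rates, baseline, tolerance=0.02, consecutive=2):
    """Return True when the most recent weeks all exceed baseline + tolerance.

    Requiring several consecutive bad weeks (an assumed policy) avoids
    pausing on a single noisy measurement.
    """
    if len(weekly_error_rates) < consecutive:
        return False
    recent = weekly_error_rates[-consecutive:]
    return all(rate > baseline + tolerance for rate in recent)


# Baseline error is 3%; the last two weeks came in at 6% and 7%.
print(should_pause([0.03, 0.06, 0.07], baseline=0.03))  # True
```

Whatever thresholds you pick, the useful part is that the decision is mechanical. Nobody has to argue for a rollback in the middle of an incident.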

Where Trust Goes Next

The trust slide is not permanent. Transparent metrics, fast rollback paths, and clearer liability terms can close the gap. Will vendors accept that level of scrutiny? They will if buyers insist. Your move.