Who Controls AI Answers

You use AI to get quick answers, summaries, and advice. That convenience comes with a harder question. Who controls AI answers when the system picks sources, frames facts, and decides which voices count? That matters now because AI products are moving into search, customer support, news discovery, and daily work tools at a speed that leaves little room for public debate. Campbell Brown, who once led news partnerships at Meta, has weighed in on that power shift, and her perspective lands at the center of a live argument about trust, editorial judgment, and platform control. If AI systems become the front door to information, the old fight over who shapes public understanding does not go away. It gets more concentrated.

What matters most

- AI answers are editorial choices, even when companies present them as neutral output.

- Campbell Brown’s perspective matters because she has worked where platforms and publishers collide.

- Source ranking, model tuning, and safety policies all shape what users see.

- The big risk is quiet control. Users often cannot tell why one answer appeared and another did not.

Why the “who controls AI answers” debate is getting louder

Search engines used to send you a list of links. AI systems increasingly hand you a finished response. That shift sounds small, but it changes the power structure. The product is no longer just organizing information. It is interpreting it.

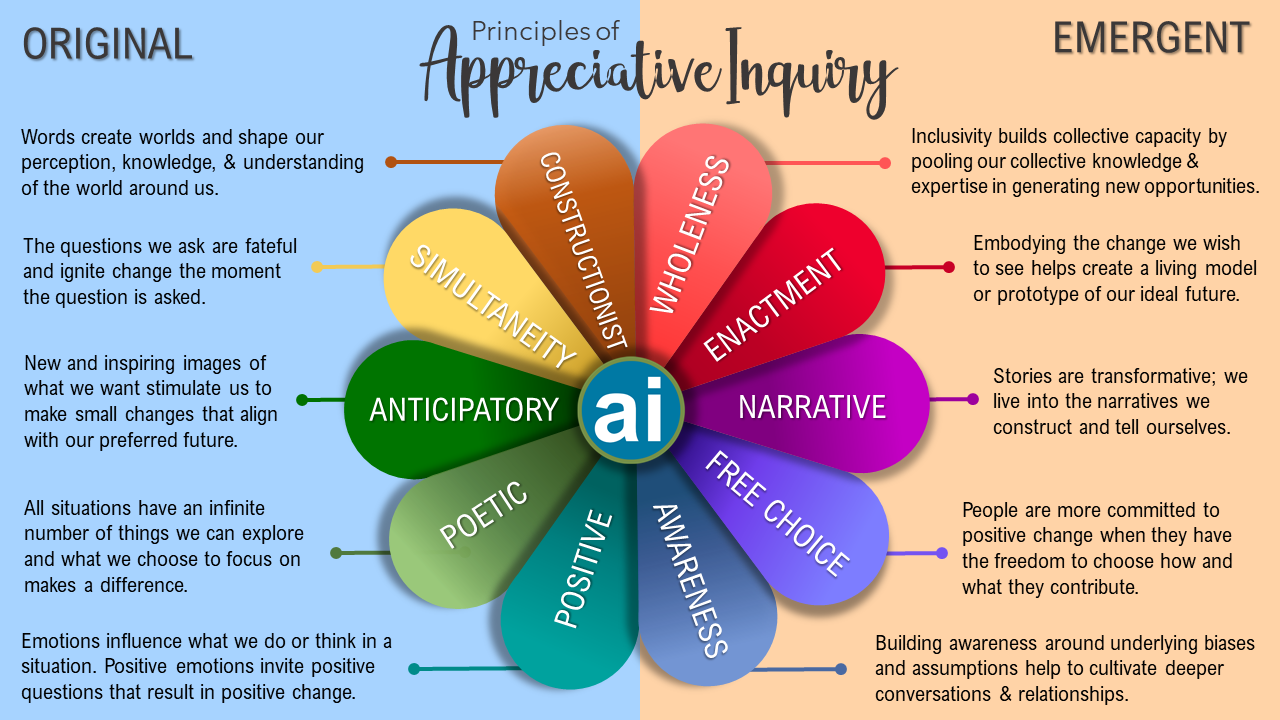

And interpretation always carries judgment. Which sources look reliable? Which claims get softened? Which topics trigger caution or refusal? These are policy choices wrapped in code.

That is why the “who controls AI answers” debate is not just a technical squabble. It is a governance issue. If one assistant becomes your first stop for health advice, election context, market research, or schoolwork, that assistant starts acting a bit like an editor, a librarian, and a publisher all at once.

AI systems do not merely retrieve information. They prioritize it, compress it, and present it with an implied authority that many users rarely question.

Why Campbell Brown’s view carries weight

Brown is not a random commentator stepping into the AI argument. She has spent years around the fault line between media companies and giant platforms. That matters because the same old tension is back in a new form.

For years, publishers worried that social platforms controlled distribution without taking full responsibility for accuracy or civic impact. Now AI companies face a related problem, except the stakes may be higher. Instead of sending traffic out, they may keep the user inside the answer box.

Look, anyone who has covered platform power for a while has seen this pattern before. First comes scale. Then comes dependence. Then comes the fight over rules, revenue, and accountability.

How AI companies actually shape answers

If you want to understand control, follow the pipeline. AI output does not appear by magic. It is shaped at several layers, and each layer can tilt the result.

1. Training data

Models learn patterns from huge datasets that reflect the open web, licensed content, books, forums, and other material. Those datasets are uneven. Some viewpoints are overrepresented. Others barely show up.

2. Fine-tuning and policy rules

After pretraining, companies tune models to behave in specific ways. They add human feedback, safety policies, and refusal standards. This can reduce harmful output. It also gives the company strong influence over tone, boundaries, and acceptable framing.

3. Retrieval and ranking

Many AI systems now fetch live information from selected sources. Here is the hidden hinge. Which sources are approved, ranked, or excluded? That one design choice can reshape an answer fast, like a soccer coach changing the midfield and suddenly owning the match.

4. Product design

Even the interface matters. Does the tool show citations clearly? Does it reveal uncertainty? Can you compare sources? Or does it deliver one smooth answer that feels final?

That last part, an interface that shows its sources and its uncertainty, is non-negotiable.
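To make the retrieval and ranking layer concrete, here is a minimal sketch of how a per-source weighting policy can change which passages reach the answer. Everything in it is an assumption for illustration: the `Passage` type, the `select_passages` function, the source names, and the scores are hypothetical, and real systems use far more elaborate ranking. The point is only that the same candidate passages produce different inputs under different policies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str       # hypothetical publisher name
    text: str
    relevance: float  # hypothetical relevance score between 0 and 1


def select_passages(passages, source_weights, top_k=2):
    """Rank passages by relevance times a per-source trust weight.

    Sources missing from source_weights get weight 0.0 and are excluded.
    """
    scored = [
        (p.relevance * source_weights.get(p.source, 0.0), p)
        for p in passages
    ]
    # Drop excluded sources, then keep the highest-scoring passages.
    kept = [(score, p) for score, p in scored if score > 0.0]
    kept.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in kept[:top_k]]


# Illustrative data only; none of these sources or scores are real.
passages = [
    Passage("wire_service", "Report A", 0.80),
    Passage("local_paper", "Report B", 0.90),
    Passage("trade_blog", "Report C", 0.70),
]

# Policy one down-weights the local paper; policy two excludes the trade blog.
policy_one = {"wire_service": 1.0, "local_paper": 0.5, "trade_blog": 1.0}
policy_two = {"wire_service": 1.0, "local_paper": 1.0}

print([p.source for p in select_passages(passages, policy_one)])
print([p.source for p in select_passages(passages, policy_two)])
```

Under the first policy the local paper drops out even though it had the highest raw relevance. Under the second, the trade blog never appears at all. A user reading the finished answer would see neither decision.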

What users should worry about most

Bias gets the headlines, but opacity may be the bigger issue. Many users know that AI can be wrong. Fewer understand how much invisible filtering happens before an answer reaches the screen.

Ask yourself a blunt question. If an AI assistant gives you a confident answer on a contested topic, can you tell who it trusted, what it ignored, and why?

Usually, no.

And that is where trust gets shaky. Traditional journalism has editors, standards, corrections, and visible bylines. Search has rankings and, at least in principle, multiple links. AI systems often compress all of that into one polished paragraph. Fast, useful, and hard to audit.

What responsible AI answer systems should include

Companies like OpenAI, Google, Anthropic, and Meta will keep pushing AI deeper into information products. Fine. But if they want public trust, they need stronger habits than “trust us.”

- Clear citations

Users should be able to see the source behind factual claims without hunting for it.

- Visible uncertainty

If an answer is disputed, incomplete, or based on thin sourcing, the system should say so plainly.

- Source diversity

A healthy answer system should avoid overreliance on one ideological, geographic, or commercial lane.

- Policy transparency

Companies should explain, in readable language, how they handle sensitive topics, refusals, and source selection.

- Appeals and corrections

Publishers, experts, and users need a way to flag bad summaries or false attributions.
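A few of these habits can be sketched as a data structure. The example below is a rough illustration under stated assumptions, not a description of how any company's product works: the `Claim` type, `render_answer`, and `source_share` are hypothetical names, and the outlets are placeholders. It shows one way an answer could carry its citations and dispute flags with it, plus a simple check for overreliance on a single source.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    text: str
    sources: list = field(default_factory=list)  # hypothetical source names
    disputed: bool = False


def render_answer(claims):
    """Render claims with inline citations and plain uncertainty notes."""
    lines = []
    for claim in claims:
        if claim.sources:
            cite = " [" + ", ".join(claim.sources) + "]"
        else:
            cite = " [no source found]"
        note = " (disputed)" if claim.disputed else ""
        lines.append(claim.text + cite + note)
    return "\n".join(lines)


def source_share(claims):
    """Return each source's share of all citations, to spot overreliance."""
    counts = {}
    for claim in claims:
        for name in claim.sources:
            counts[name] = counts.get(name, 0) + 1
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()} if total else {}


# Illustrative data only.
claims = [
    Claim("Claim one.", ["outlet_a"]),
    Claim("Claim two.", ["outlet_a", "outlet_b"], disputed=True),
    Claim("Claim three."),
]

print(render_answer(claims))
print(source_share(claims))
```

Keeping sources attached to individual claims, rather than to the answer as a whole, is the design choice that matters here. It is what lets a reader or publisher point at one sentence and ask where it came from.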

What this means for publishers and the open web

Publishers are in a tight spot. AI companies need high-quality reporting and reference material, but they also risk reducing the traffic that keeps that reporting alive. It is the old platform squeeze with a sharper edge.

Honestly, this is where the optimism gets thin. If AI products summarize original work without sending meaningful attention or revenue back to the source, the supply of reliable information weakens over time. Then the models themselves degrade because the web they learn from gets worse.

That feedback loop is easy to miss (especially when product demos look clean). But it is real.

My read on where this goes next

The public argument over AI usually swings between panic and hype. Neither helps much. The practical issue is simpler. We need to know who is making judgment calls inside these systems, and what checks exist when those calls go wrong.

Brown’s comments matter because they point to the human layer behind the machine layer. Someone sets the rules. Someone picks the standards. Someone decides which tradeoffs are acceptable.

That is not a reason to reject AI answers. It is a reason to demand better disclosure, stronger sourcing, and less theater around neutrality. The next phase of this fight will not be about whether AI can answer questions. It will be about whether the people behind the answers are willing to show their work.