xAI vs OpenAI Trial: What Model Distillation Could Change

AI companies copy, borrow, license, and compete at a pace that leaves most readers squinting at the fine print. That is why the xAI vs OpenAI trial matters right now. The case puts one of the thorniest issues in modern AI on the table: whether model distillation crosses a legal line when a rival believes its systems or outputs were used in ways that break the rules. If you build with large language models, buy enterprise AI tools, or simply track where this market is headed, the dispute is worth your time. A courtroom fight between Elon Musk’s xAI and OpenAI is not just company drama. It could shape how labs train models, protect their products, and police competitors in the years ahead.

What matters most

- The xAI vs OpenAI trial puts the spotlight on model distillation, a common AI technique that becomes contentious when ownership and permitted use are disputed.

- The case matters beyond the two companies because any ruling could influence AI licensing, API terms, and enforcement across the industry.

- Businesses using third-party models should pay attention to how vendors describe training data, outputs, and restrictions on downstream use.

- This is also a market power story, with major labs trying to protect expensive model development from fast-following rivals.

Why the xAI vs OpenAI trial matters

At its core, this fight is about control. Training frontier AI models costs a fortune in chips, data work, engineering talent, and infrastructure. If one company can use another company’s model outputs to improve its own system cheaply, that changes the economics fast.

Model distillation itself is not a fringe trick. It is a standard machine learning method in which a smaller or separate model learns from the behavior of a larger model. Researchers have used it for years to compress systems, speed up inference, and cut costs. The tension starts when distillation is alleged to rely on systems, outputs, or access methods that a provider says were off-limits.

AI labs want the upside of open competition. They also want hard walls around the parts of their stack that cost billions to build.

That contradiction is now impossible to ignore.

What is model distillation, really?

Think of model distillation like a chef trying to recreate a signature sauce after tasting it many times. The chef may not know the exact recipe, but repeated samples can reveal enough patterns to produce something close. In AI, a student model learns from a teacher model’s outputs, probabilities, or behavior across many prompts.
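To make the teacher and student idea concrete, here is a minimal sketch of the classic soft-label form of distillation, where the student is penalized for diverging from the teacher's probability distribution. The function names, the example logits, and the temperature value are illustrative assumptions, not anything drawn from the case or from either company's systems. It also assumes access to the teacher's raw scores, which a rival working only from API text outputs often would not have.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    Zero means the student reproduces the teacher's distribution exactly;
    larger values mean the student's predictions differ more.
    """
    p = softmax(teacher_logits, temperature)  # teacher probabilities
    q = softmax(student_logits, temperature)  # student probabilities
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical scores over three candidate next tokens.
teacher = [2.0, 1.0, 0.1]
close_student = [1.9, 1.1, 0.2]
far_student = [0.1, 1.0, 2.0]

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

Training a student means adjusting its parameters to push this number down across many prompts. When only generated text is available, the same idea is usually approximated by fine-tuning on the teacher's outputs as if they were ordinary training examples.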

Done internally, this is routine. A company trains a big model, then distills it into a smaller one for lower cost and faster deployment. Done across company lines, things get messy. Was the data publicly available? Did the API terms allow that use? Were safeguards bypassed? Those details matter more than the headline.

Why distillation is attractive

- It can reduce inference costs.

- It can help create smaller models for phones, laptops, or enterprise deployments.

- It can speed up iteration when frontier model training is too expensive.

- It can narrow the gap between market leaders and challengers.

And that last point is the one that makes lawyers perk up.

The xAI vs OpenAI trial and the bigger AI power struggle

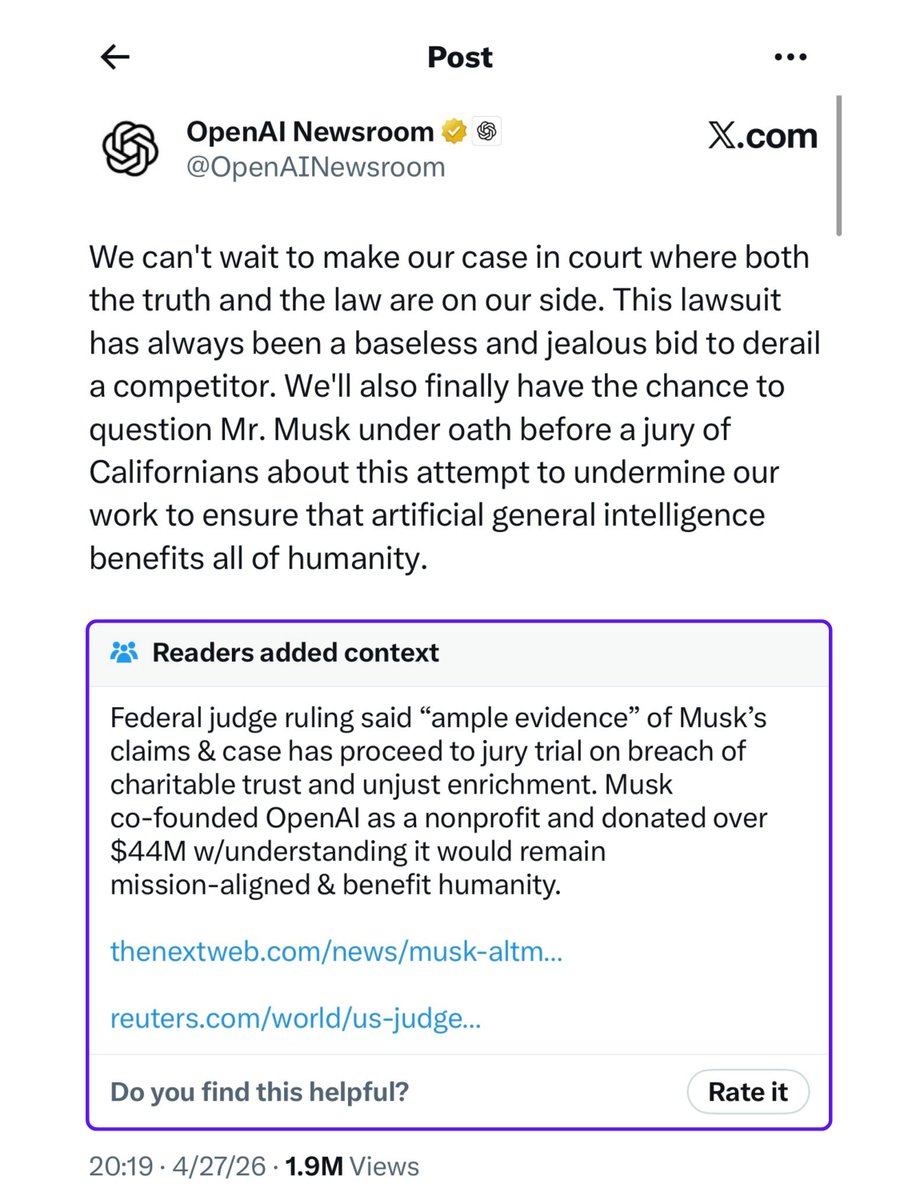

This dispute lands in a market already crowded with legal friction. OpenAI faces scrutiny over training data, copyright, partnerships, and platform power. Elon Musk, meanwhile, has made OpenAI a recurring target in both business and legal arenas. So yes, there is technical substance here. But there is also a very public contest over who gets to define the rules of the AI market.

That broader context matters because courts do not operate in a vacuum. Judges and the public can see that AI companies often take flexible positions depending on whether they are defending their own work or criticizing someone else’s. One month a firm argues rapid innovation demands room to experiment. The next month it says a competitor moved too close to the fence.

That does not make every claim weak. It does make the politics impossible to separate from the law.

What businesses should watch in the xAI vs OpenAI trial

If your company uses OpenAI, xAI, Anthropic, Google, Meta, or open-source models, there are practical lessons here. You do not need to be in court to get caught by the fallout. Contract terms, vendor risk reviews, and procurement checklists may all tighten if this case produces a clear signal.

Questions worth asking your AI vendors

- How do you restrict model output use for training other systems?

- Do your terms ban automated collection, benchmarking, or synthetic data generation for distillation?

- Can you explain, in writing, how your model was trained and what safeguards were used?

- If a legal dispute emerges, what indemnity or support do customers get?

That last question tends to get very real, very fast.

Buyers should also review internal use. Teams sometimes run quick experiments with public chatbots, export large output sets, and feed them into side projects. It feels harmless until a legal team asks where the data came from and whether the terms allowed that workflow.

What courts may focus on

Without pretending to know the full evidentiary record, there are a few issues that usually matter in disputes like this:

- Access. How did the accused party obtain outputs or model behavior?

- Terms of service. Were use restrictions clear, accepted, and enforceable?

- Technical evidence. Can either side show meaningful similarity, extraction patterns, or prohibited automation?

- Damages. If a violation happened, what is the measurable harm?

Here is the catch. AI cases often run into a proof problem. Similar outputs do not always prove copying because many models converge on similar answers. But unusual overlap, repeated behavioral signatures, or internal records can shift the picture quickly (if they exist).
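The proof problem is easier to see with a toy measurement. The sketch below scores how many three-word sequences two outputs share, which is one crude way to quantify overlap. The function names and sample strings are hypothetical, and nothing here reflects how either side or any court actually analyzes evidence. A high score on common phrasing proves little on its own, which is exactly the difficulty.

```python
def ngrams(text, n=3):
    """Return the set of n-word sequences in a lowercased, whitespace-split text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def jaccard_overlap(a, b, n=3):
    """Share of distinct n-grams that two texts have in common (0.0 to 1.0)."""
    grams_a, grams_b = ngrams(a, n), ngrams(b, n)
    if not grams_a and not grams_b:
        return 0.0  # both texts too short to compare
    return len(grams_a & grams_b) / len(grams_a | grams_b)

# Hypothetical outputs from two different models answering the same prompt.
output_one = "Model distillation trains a smaller model on a larger model's outputs"
output_two = "Model distillation trains a smaller model using a teacher's predictions"

print(jaccard_overlap(output_one, output_two))
```

Two independent models asked a factual question can score high here simply because there are only so many ways to phrase the answer. That is why unusual quirks, repeated errors, or internal records tend to carry more weight than raw similarity.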

Why this case could reset AI norms

The AI industry still behaves like a half-built city. Some roads are paved. Others are dirt paths with expensive branding. A courtroom ruling on model distillation could help set traffic rules where companies have mostly relied on contract language, platform restrictions, and public pressure.

Would that settle everything? Of course not. But it could influence how companies write API agreements, detect extraction attempts, structure partnerships, and train smaller models from larger ones.

It may also push labs toward stricter monitoring. More rate limits. More watermarking attempts. Closer scrutiny of developers who generate huge volumes of structured prompts and outputs. That could hurt legitimate research too, which is why this debate is harder than partisans on either side admit.
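For a sense of what that monitoring could look like at its simplest, here is a sketch of a sliding-window counter that flags a client once its request volume passes a threshold. The class name, the threshold, and the window length are all made-up assumptions for illustration. Real providers do not publish their detection logic, and it is presumably far more involved than this.

```python
from collections import deque

class VolumeMonitor:
    """Flags a client whose request count in a recent time window exceeds a limit."""

    def __init__(self, max_requests, window_seconds):
        self.max_requests = max_requests      # hypothetical threshold
        self.window_seconds = window_seconds  # hypothetical window length
        self.timestamps = deque()

    def record(self, timestamp):
        """Log one request; return True if the client is now over the limit."""
        self.timestamps.append(timestamp)
        cutoff = timestamp - self.window_seconds
        # Discard requests that have aged out of the window.
        while self.timestamps and self.timestamps[0] <= cutoff:
            self.timestamps.popleft()
        return len(self.timestamps) > self.max_requests

# Allow at most 3 requests in any 10-second window.
monitor = VolumeMonitor(max_requests=3, window_seconds=10)
for second in [0, 1, 2, 3, 20]:
    print(second, monitor.record(second))
```

A blunt rule like this cannot tell an extraction attempt from a researcher running a large benchmark, which illustrates how tighter controls can catch legitimate work.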

My read on the xAI vs OpenAI trial

I have covered enough tech lawsuits to know that splashy filings often promise more than the final record delivers. Still, this one deserves attention because the underlying issue is real. Distillation sits near the center of AI economics. If the leading labs cannot protect their systems from imitation through outputs, their moat gets thinner. If they overreach, they risk choking competition and fair experimentation.

Striking that balance is the hard part.

My guess is the long-term result will not be a clean win for openness or lock-in. It will be a denser web of contracts, more technical controls, and a bigger compliance burden for everyone touching frontier models. That sounds dull. It is also how industries mature.

What to watch next

Keep an eye on the evidence, not just the personalities. Watch for claims about API abuse, internal communications, unusual output matching, and how the court frames model distillation itself. Is it treated mainly as a standard research method, or as a possible route around the cost of training original systems?

The answer could ripple well past xAI and OpenAI. And if AI firms want to be treated like serious infrastructure companies, they may soon have to prove where competitive learning ends and prohibited copying begins.