AI Content Moderation Tools: Moonbounce Bets on Safer Generative Media

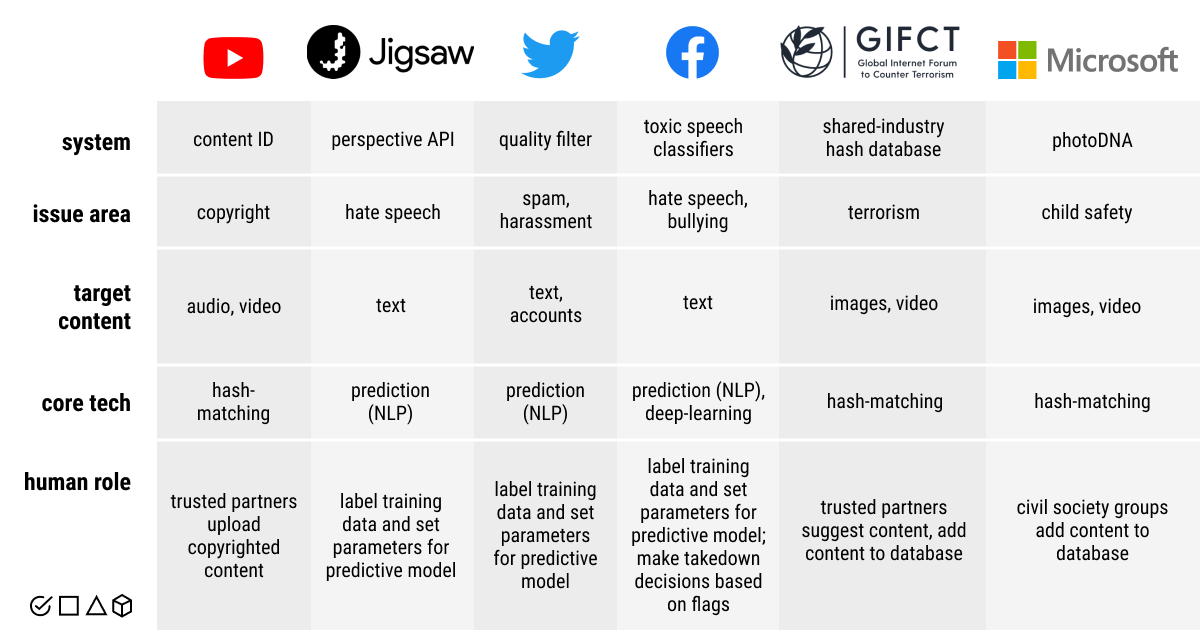

Your feeds are already filling with AI-written posts and synthetic video, and you know the trolls will follow. Companies are scrambling for AI content moderation tools that can flag hate speech, deepfakes, and prompt-engineered exploits before they hit users. Moonbounce just raised fresh capital to tackle this pile-up with a stack built for the speed of model outputs, not yesterday’s human-in-the-loop queues. The pitch: faster detection, clearer audit trails, and policies that adapt as quickly as models do. If you run a platform today, you need a plan that blends automation with accountable oversight. Otherwise you’re playing whack-a-mole with no end in sight.

Fast Facts on AI Content Moderation Tools

- Moonbounce’s round signals investor faith that moderation will be a profit center, not just a cost line.

- Generative outputs demand classifiers that handle text, images, audio, and video in one workflow.

- Auditable decisions matter for regulators and brand advertisers alike.

- Latency targets are now measured in milliseconds, because users leave when a moderation check makes the product feel slow.

AI Content Moderation Tools Driving Trust

Moonbounce positions itself as the layer that screens model outputs before they reach users. Think of it as a goalkeeper in soccer, except the shots never stop and the ball keeps changing shape.

Automate what you can, but keep humans ready to review edge cases. That balance builds trust.

The company touts multimodal classifiers, policy-as-code templates, and real-time dashboards aimed at safety teams. Investors are betting that platforms will pay to cut response times and reduce headline risk.

Regulation still lags behind the technology.

How Teams Can Deploy AI Content Moderation Tools Today

- Start with high-risk surfaces: new user onboarding, public comments, and any feature that accepts media uploads.

- Layer detection: pair language toxicity filters with image and audio detectors to catch cross-modal attacks.

- Set thresholds by context: a gaming chat can tolerate more slang than a health forum (document the rationale).

- Keep an override path: let moderators annotate false positives so models improve without slowing the queue.
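
The layering, threshold, and override bullets above can be sketched in a few lines of Python. This is a minimal illustration, not Moonbounce’s API: the surface names, the threshold values, and the `decide` and `OverrideLog` helpers are all assumptions invented for the example, and the per-modality scores are assumed to come from whatever detectors you already run.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical per-surface thresholds; the numbers are illustrative, not vendor defaults.
THRESHOLDS: Dict[str, float] = {
    "gaming_chat": 0.90,   # tolerates more slang
    "health_forum": 0.60,  # stricter, sensitive context
}
DEFAULT_THRESHOLD = 0.75


@dataclass
class Decision:
    action: str        # "allow" or "block"
    top_modality: str  # detector that produced the highest score
    score: float
    threshold: float


@dataclass
class OverrideLog:
    """Collects moderator corrections for later retraining, outside the hot path."""
    false_positives: List[dict] = field(default_factory=list)

    def mark_false_positive(self, item_id: str, decision: Decision, note: str) -> None:
        self.false_positives.append(
            {"item_id": item_id, "decision": decision, "note": note}
        )


def decide(scores: Dict[str, float], surface: str) -> Decision:
    """Layered detection: block if any modality's score reaches the surface threshold."""
    threshold = THRESHOLDS.get(surface, DEFAULT_THRESHOLD)
    top_modality = max(scores, key=lambda name: scores[name])
    top_score = scores[top_modality]
    action = "block" if top_score >= threshold else "allow"
    return Decision(action, top_modality, top_score, threshold)


if __name__ == "__main__":
    scores = {"text": 0.72, "image": 0.10}  # made-up detector outputs
    print(decide(scores, "gaming_chat"))
    print(decide(scores, "health_forum"))
```

The same scores pass in the gaming chat and get blocked in the health forum, which is the point of context-specific thresholds. Keeping them in one table also gives you a single place to document the rationale.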

Here’s the thing: latency is a product feature. If a system takes three seconds to decide, users will notice and bail. Moonbounce claims sub-200ms scoring on common workloads, which would put it in the competitive pack alongside providers like Hive and Spectrum Labs.
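
If you want to hold a vendor (or your own model) to a number like that, measure it yourself. Here is a rough sketch, assuming a scorer you can call as a plain Python function. The `score_with_budget` and `p95` helpers are hypothetical names, and the 200 ms budget simply mirrors the figure quoted above. Note that this only measures elapsed time; it does not cancel a slow call.

```python
import time
from typing import Callable, Dict, List

BUDGET_MS = 200.0  # mirrors the sub-200ms claim in the text; tune per surface


def score_with_budget(
    scorer: Callable[[str], float], content: str, budget_ms: float = BUDGET_MS
) -> Dict[str, object]:
    """Run a scorer once and report whether it stayed inside the latency budget."""
    start = time.perf_counter()
    score = scorer(content)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return {
        "score": score,
        "elapsed_ms": elapsed_ms,
        "within_budget": elapsed_ms <= budget_ms,
    }


def p95(samples_ms: List[float]) -> float:
    """Nearest-rank 95th percentile of a list of latency samples."""
    if not samples_ms:
        raise ValueError("need at least one sample")
    ordered = sorted(samples_ms)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


if __name__ == "__main__":
    def fake_scorer(text: str) -> float:
        # Stand-in for a real moderation call.
        return 0.5

    runs = [score_with_budget(fake_scorer, "hello") for _ in range(100)]
    print("p95 ms:", p95([r["elapsed_ms"] for r in runs]))
```

Tracking the 95th percentile rather than the average matters here, because the slow tail is what users actually feel.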

Buying vs Building AI Content Moderation Tools

Do you roll your own or bring in a specialist? If you already run a machine learning team, building can look cheaper on paper. But vendors deliver policy frameworks, dashboards, and support contracts that speed up audits. Ask how they handle model drift, and demand test cases that match your weirdest edge content. Speed is not worth much if you cannot see how decisions get made.
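
One way to make the drift question concrete is to keep a labeled set of your own edge cases and rerun it against every model or vendor update. The sketch below assumes a classifier you can call as a function that returns `True` for "block". The `evaluate` and `drifted` helpers, the sample cases, and the 0.05 tolerance are all illustrative assumptions.

```python
from typing import Callable, Dict, Iterable, Tuple

Case = Tuple[str, bool]  # (content, should_block)


def evaluate(classifier: Callable[[str], bool], cases: Iterable[Case]) -> Dict[str, float]:
    """Return false-positive and false-negative rates over labeled cases."""
    fp = fn = positives = negatives = 0
    for content, should_block in cases:
        blocked = bool(classifier(content))
        if should_block:
            positives += 1
            if not blocked:
                fn += 1  # harmful content slipped through
        else:
            negatives += 1
            if blocked:
                fp += 1  # benign content was blocked
    return {
        "false_positive_rate": fp / negatives if negatives else 0.0,
        "false_negative_rate": fn / positives if positives else 0.0,
    }


def drifted(baseline: Dict[str, float], current: Dict[str, float], tolerance: float = 0.05) -> bool:
    """Flag drift when any error rate worsens by more than the tolerance."""
    return any(current[key] - baseline[key] > tolerance for key in baseline)


if __name__ == "__main__":
    # Toy keyword classifier and made-up cases, purely for illustration.
    def toy_classifier(text: str) -> bool:
        return "badword" in text

    edge_cases = [
        ("badword here", True),
        ("b4dword here", True),  # obfuscated spelling the toy filter misses
        ("hello", False),
        ("nice game", False),
    ]
    print(evaluate(toy_classifier, edge_cases))
```

Run the same suite before you sign and after every update, and you have a baseline to argue from when the numbers move.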

Moonbounce leans into transparency with explainability reports, an area where many incumbents stay vague. That could sway brands wary of shadowy models making takedown calls.

Risks and Gaps That Still Need Work

Adversarial prompts evolve weekly. Synthetic voices can mimic real people and trick identity checks. Cross-language abuse slips through monolingual filters. And legal exposure grows as governments eye mandatory risk assessments. The market needs clearer benchmarks and third-party audits to keep vendor claims honest.

Honestly, I want to see Moonbounce publish red-team results and share failure modes. A platform that only brags about accuracy without showing blind spots is skating on thin ice.

What Comes Next for AI Content Moderation Tools

Expect more real-time watermark checks on video, tighter integration with trust-and-safety case tools, and on-device filters that run before upload. Platforms that master this stack will keep communities cleaner and advertisers calmer. Those that stall will face fines and churn. Ready to test your stack against the next wave of synthetic spam?