AI Doctor Second Opinions: What Patients Should Trust

You can now ask an AI for a medical second opinion in seconds. That sounds useful, especially if you are stuck waiting for a specialist, confused by test results, or unsure whether a diagnosis makes sense. But AI doctor second opinions raise a harder question. Should you treat the answer as medical guidance, or as a smarter search box with better bedside phrasing?

That matters now because companies and high-profile tech investors are pushing these tools into healthcare faster than the evidence has caught up. Patients are being told AI can help them ask better questions, spot missed issues, and prepare for appointments. Some of that is true. Some of it is sales talk. If you are going to use one, you need a clear line between support and substitution.

What stands out

- AI doctor second opinions can help organize information, but they do not replace a licensed clinician who knows your history.

- Context is the weak spot. Medical answers often turn on details that chatbots miss or misread.

- These tools are best used before or after a visit, not as the final word on diagnosis or treatment.

- Trust depends on evidence and oversight, not on who funded or promoted the product.

Why AI doctor second opinions appeal to patients

The appeal is obvious. Healthcare is slow, expensive, and often hard to parse. People leave appointments with new terms, partial explanations, and a stack of portal notes written for other clinicians.

An AI tool can turn that mess into plain language. It can summarize records, explain possible causes, and suggest questions to bring back to your doctor. For a worried patient, that feels like relief.

And sometimes relief matters.

Wired’s reporting on Reid Hoffman’s interest in AI doctor tools lands in that gap between real need and tech ambition. The pitch is not hard to understand. If AI can help people get a sharper second look at their health concerns, why not use it?

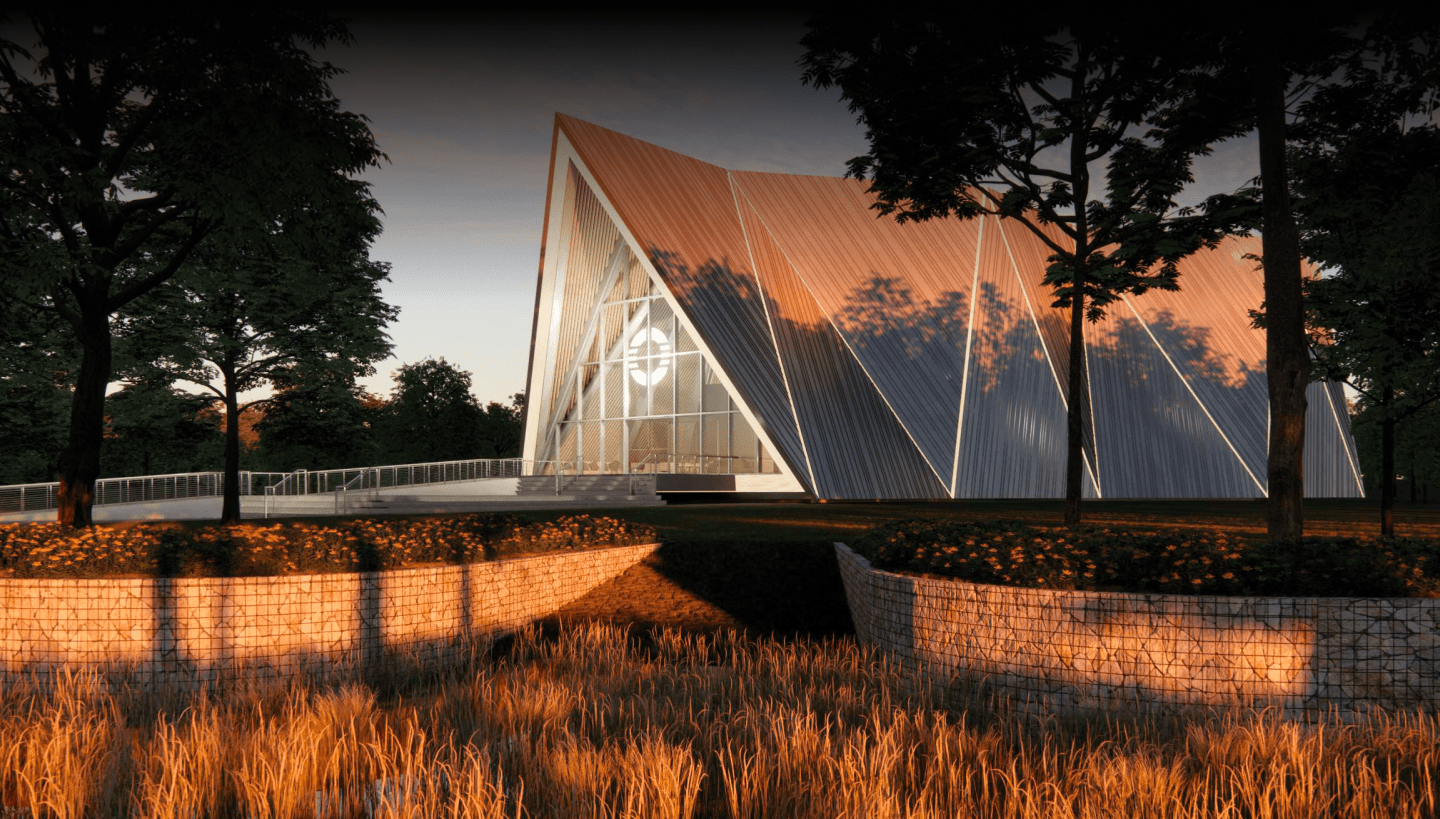

Because medicine is not customer support. It is closer to architecture. A small error in the sketch can turn into a crack in the whole building.

Where AI doctor second opinions can actually help

Look, there are practical uses here. Patients do not need purity tests. They need tools that are good at specific jobs and bad at pretending to do the rest.

1. Translating medical jargon

Lab reports, imaging summaries, and specialist notes are often dense. AI can turn them into readable language and explain terms like benign lesion, differential diagnosis, or incidental finding.

2. Preparing for appointments

A decent system can help you build a question list based on symptoms, medications, family history, and recent tests. That can make a short visit more useful.

3. Spotting missing follow-up questions

If your diagnosis depends on timing, symptom pattern, drug interactions, or prior conditions, AI may prompt you to ask about details that never came up in the room.

4. Summarizing a complex case

For patients juggling multiple specialists, AI can stitch together a timeline from records and notes. That alone can reduce confusion.

Used well, an AI second opinion tool is less like a doctor and more like a sharp medical editor. It can clean up the draft. It should not sign the chart.

Where AI doctor second opinions break down

This is the part hype tends to blur. Chatbots can sound certain even when the underlying answer is shaky, incomplete, or flat-out wrong. That style problem is not cosmetic. It can change behavior.

Why does that matter so much? Because a patient may act on a calm, polished answer that skips an urgent warning sign or overstates a low-risk theory.

Missing context

Medicine runs on context. Age, pregnancy, immune status, medication dose, timeline, race- and sex-linked risk, prior surgeries, and symptom progression can all change the answer. If the prompt is thin, the output may be thin too.

False confidence

Large language models are built to produce plausible language. They are not built to feel doubt in the way a careful clinician does. That means they may present a weak inference in a very steady voice.

Uneven evidence

Some health AI systems are tested for narrow use cases. Many are not. And general-purpose chatbots often rely on broad training data rather than validated clinical pathways. Fluency learned from broad data is not the same as performance checked against a clinical pathway.

Privacy and data handling

Patients may paste scans, diagnoses, medications, and family history into tools without knowing how the data is stored, reviewed, or used for training (if it is used at all). That is not a side issue. It is part of the trust equation.

How to use AI doctor second opinions without fooling yourself

Honestly, this is where most of the value sits. Not in asking AI to be your doctor, but in using it like a disciplined prep tool.

- Feed it documents, not vibes. Use the exact wording from test results, visit notes, and medication labels.

- Ask for explanations and question lists. Do not open by asking for a final diagnosis. Ask which findings matter and what follow-up questions could clarify them.

- Request uncertainty. Prompt the tool to rank possibilities and explain what facts would change the answer.

- Check for red flags. Ask whether any symptom pattern could require urgent care or same-day review.

- Take the output back to a clinician. Use it to sharpen the conversation, not to end it.

A simple prompt can work well: “Here are my symptoms, labs, and medications. Explain this in plain English. List the top questions I should ask my doctor. Note any urgent warning signs. Tell me what information is missing.”
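If you are comfortable with a little scripting, the same prompt can be assembled from your documents so that nothing gets paraphrased along the way. The following is a minimal Python sketch, not a tested clinical tool. The function name `build_prep_prompt` and the sample values are hypothetical placeholders, and the script only builds text. It does not call any AI service.

```python
# Minimal sketch: build an appointment-prep prompt from exact document wording.
# All names and sample values here are illustrative assumptions.

REQUESTS = [
    "Explain this in plain English.",
    "List the top questions I should ask my doctor.",
    "Rank the possibilities and say what facts would change the answer.",
    "Note any urgent warning signs.",
    "Tell me what information is missing.",
]


def build_prep_prompt(symptoms, labs, medications):
    """Return a prompt string that asks for explanations, not a diagnosis."""
    sections = {
        "Symptoms": symptoms,
        "Lab results (exact wording)": labs,
        "Medications (as written on the label)": medications,
    }
    lines = ["Here are my symptoms, labs, and medications."]
    for title, items in sections.items():
        lines.append("")
        lines.append(f"{title}:")
        if items:
            lines.extend(f"- {item}" for item in items)
        else:
            # Make gaps visible so the tool can flag missing information.
            lines.append("- (none provided)")
    lines.append("")
    lines.extend(REQUESTS)
    return "\n".join(lines)


if __name__ == "__main__":
    # Made-up placeholder values, not real patient data.
    print(
        build_prep_prompt(
            symptoms=["Fatigue for three weeks"],
            labs=["Ferritin 9 ng/mL (reference range 15-150)"],
            medications=[],
        )
    )
```

Listing empty sections as "(none provided)" is a deliberate choice. It keeps gaps in view, which fits the advice to ask the tool what information is missing.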

What trust should look like in AI doctor second opinions

Trust should be earned with evidence, limits, and accountability. Not branding. Not investor charisma. And not demos that go smoothly because the prompt was carefully staged.

If a company offers an AI medical second opinion product, ask a few blunt questions:

- Was it tested on real patient cases?

- What kind of clinician oversight exists?

- Does it cite clinical guidelines or source material?

- How does it handle uncertainty and edge cases?

- What happens to your health data after you submit it?

Those questions are non-negotiable. A trustworthy system should answer them clearly.

Wired’s piece taps into a larger shift in healthcare and tech. Big names want AI to sit closer to the patient. That may help access. It may also move fragile decisions into products that are still learning where their limits are. I have covered enough tech cycles to know this pattern well. The demo arrives first. The guardrails show up later.

The real role for AI doctor second opinions

The smartest use case is narrow and plain. Let AI help patients understand, organize, and prepare. Let clinicians diagnose, examine, weigh tradeoffs, and take responsibility.

But there is still a live question hanging over all this. If an AI tool becomes better than the average web search, better than a rushed urgent care visit, and better at explaining options than most health portals, will patients start trusting it more than they trust the system around them?

That is the next fight. And healthcare should answer it with proof, not optimism.