China Microdrama AI Backlash

Short-form video is cheap to make, fast to ship, and brutally competitive. That is why China’s microdrama industry rushed toward automation. Scripts can be generated in minutes. Faces can be cloned. Entire scenes can be assembled with a fraction of the old crew. But the China microdrama AI backlash shows what happens when speed starts to flatten quality, trust, and jobs at the same time. Viewers notice repetitive plots. Human actors push back. Regulators start paying attention. And platforms that once rewarded pure output now have to answer a harder question: what kind of entertainment market are they actually building? This is bigger than one niche format. It is a live test of how AI changes media production when volume becomes the business model.

What to watch

- Microdramas became a perfect AI target because they rely on fast turnaround, low budgets, and repeatable plot formulas.

- The backlash is not only about technology. It is about audience fatigue, creator pay, and the value of human performance.

- Platforms and regulators now face a tradeoff between cheap scale and long-term trust.

- China’s response could shape other short-video markets that are chasing the same economics.

Why the China microdrama AI backlash happened so fast

Microdramas are built for velocity. Episodes are short. Production schedules are tight. Distribution runs through mobile platforms where attention is won one swipe at a time. That setup makes AI attractive because it trims writing, editing, dubbing, and visual production costs.

But efficiency cuts both ways. If every studio uses the same tools, the output starts to look the same. You get recycled revenge plots, stiff dialogue, uncanny digital performers, and stories that feel assembled instead of written. Audiences may tolerate that for a while. Then the market turns.

That turn matters.

The backlash also reflects labor pressure. Human writers, actors, editors, and production staff see AI as a direct threat in a sector already known for thin margins. In a business like this, replacing one crew member is not like swapping a light bulb. It is more like taking salt out of restaurant cooking. The meal still arrives, but something obvious is missing.

What makes microdramas so vulnerable to AI overuse

Formula is the product

Microdramas often lean on familiar hooks, cliffhangers, and emotional spikes. That makes them easy to imitate with generative tools. If a format rewards repetition, machines will flood it with repetition even faster than humans can.

Speed beats polish

Studios in this market are often judged by output and conversion, not artistic depth. So the first question becomes: can this be produced by tomorrow? The second question, whether it should exist at all, gets ignored.

Platforms amplify the cheapest content

Recommendation systems do not care whether a scene was written by a person or assembled by software. They reward retention, clicks, and binge behavior. And that can create a feedback loop where low-cost AI content keeps multiplying until users get tired of it.

Cheap production is not the same as cheap entertainment. Audiences pay with time, and they get stingy when the product starts to feel fake.

Who loses if the China microdrama AI backlash gets worse?

The obvious losers are workers whose tasks can be automated. Writers are exposed first because plot generation and dialogue drafting are easy targets. Entry-level actors and voice talent are vulnerable too, especially if producers decide that synthetic performers are good enough for throwaway content.

Viewers lose in a different way. They get more content, but less texture. Stories begin to feel like reruns wearing a different costume. Honestly, that is a bad deal even if the videos are free.

Platforms are not safe either. If users stop trusting what they watch, engagement falls. Advertisers notice. Regulators notice faster.

What the backlash says about AI in media production

The China microdrama AI backlash is a useful warning for every media company chasing lower costs. AI works best when it removes drudge work, not when it becomes an excuse to flood the market with hollow output. There is a difference between helping an editor cut faster and replacing creative judgment altogether.

Here is the real tension. Media executives love scale because scale looks like margin. But audiences still respond to specificity, surprise, and human messiness. Those things are harder to automate. A machine can copy the rhythm of a hit. It struggles with the odd little choices that make a scene worth remembering.

So what should producers take from this?

- Use AI for pre-production support, such as organizing notes, drafting rough translations, and building schedules.

- Keep humans in the creative loop for casting, script revision, pacing, and final edits.

- Label synthetic elements clearly when digital actors, cloned voices, or AI-generated scenes are used.

- Measure repeat viewing and audience complaints, not just cheap acquisition metrics.

- Protect original talent pipelines because the industry still needs writers and performers who can create fresh material.

How regulation and platform policy could change next

China has already shown a willingness to regulate online content and generative AI, including rules introduced in 2023 that cover deep synthesis and generative AI services. That means microdrama platforms are unlikely to get a long leash if synthetic content starts triggering fraud concerns, copyright fights, or public anger. Expect tighter disclosure rules, more content review, and pressure on companies to verify consent around digital likeness and voice use.

And there is a business reason for that pressure. If viewers cannot tell what is real, trust erodes across the whole category. Platforms may decide that stricter labeling is cheaper than cleaning up a wider credibility crisis later (and that would be the sensible call).

Studios should also watch copyright disputes. AI systems trained on scripts, performances, or visual assets can raise ownership questions that are still unsettled in many markets. Microdramas move fast, but legal systems do not.

What creators and publishers should do now

If you run a studio, publisher, or creator business, this story should hit close to home. The temptation is obvious. AI cuts time and trims payroll. But if your audience starts feeling that every show has the same pulse, you are training them to leave.

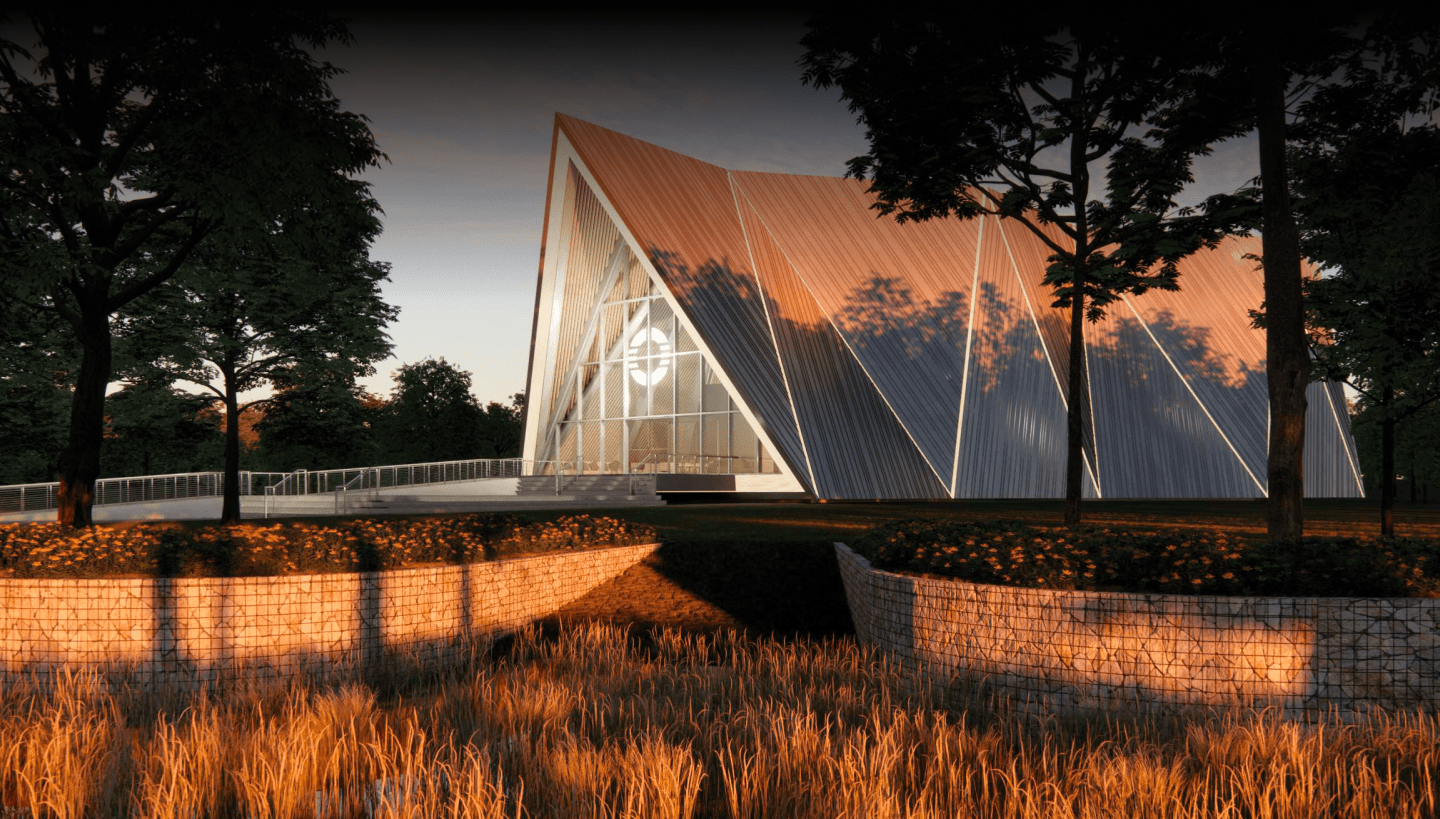

A smarter approach is selective use. Let AI handle repetitive production tasks. Keep people on the parts that shape tone, tension, and performance. Think of it like architecture. Software can speed up drafts and measurements, but you still want a human deciding whether the building is worth entering.

- Audit where AI actually saves money versus where it lowers quality.

- Set internal rules for disclosure and consent.

- Track audience sentiment, not just completion rate.

- Invest in a few distinctive titles instead of endless copycat volume.

The next test for short-form entertainment

The fight over microdramas in China is really a fight over creative standards in an algorithm-driven market. If platforms reward pure quantity, AI will keep pumping out disposable stories. If they reward trust and originality, producers will adapt.

The rest of the industry should pay attention. Short-form video companies everywhere are staring at the same spreadsheet, and many will make the same mistake unless the backlash gets loud enough. The next few years will show whether AI becomes a solid production assistant or just a faster way to make forgettable television. Which side do you think most platforms will choose when growth slows?