AI Youth Safety Testing Lab Explained

You are hearing more claims that chatbots are safe for teens and kids, but the real question is simple: who checks those claims before the products spread into phones, classrooms, and family life? The new AI youth safety testing lab matters because companies are moving fast, while rules, audits, and age-specific standards still lag behind. That gap affects what young users see, how they are nudged, and whether dangerous advice slips through. Parents want facts. Schools want guardrails. Policymakers want proof that safety systems work under pressure, not just in polished demos. And if independent testing can expose weak points early, that could shape how AI products are built and sold over the next few years.

What stands out

- Independent testing could give outsiders a way to check youth safety claims instead of taking company marketing at face value.

- AI risks for minors go beyond explicit content. They include manipulation, harmful advice, emotional dependency, and weak age protections.

- A lab is useful only if it tests real-world behavior, publishes clear methods, and pushes companies to fix failures.

- Look for whether the effort leads to standards that schools, regulators, and app stores can actually use.

Why the AI youth safety testing lab matters

Look, this idea lands at the right time. AI systems aimed at general users are already answering health questions, giving relationship advice, simulating friendship, and producing media at scale. Young people are in that stream whether companies target them directly or not.

That is the issue.

The reported lab would create a place to test how AI products behave around minors and youth-related harms. That sounds obvious, yet the industry has largely relied on internal evaluations, public promises, and scattered research. Independent stress testing is closer to a crash test in the auto business. You do not know much from the sales brochure. You learn more when someone slams the product into a wall and measures what breaks.

And here is the tougher question. If an AI system can pass a general safety review but still encourage self-harm, reproduce sexual grooming patterns, surface eating disorder content, or foster coercive emotional attachment in edge cases, is it really safe for younger users?

What an AI youth safety testing lab should actually test

If this effort is serious, it cannot stop at obvious filters for profanity or porn. Youth safety is wider than content moderation. It includes design choices, recommendation behavior, memory features, voice tone, and how a system responds when a young user is upset or vulnerable.

Core areas that need scrutiny

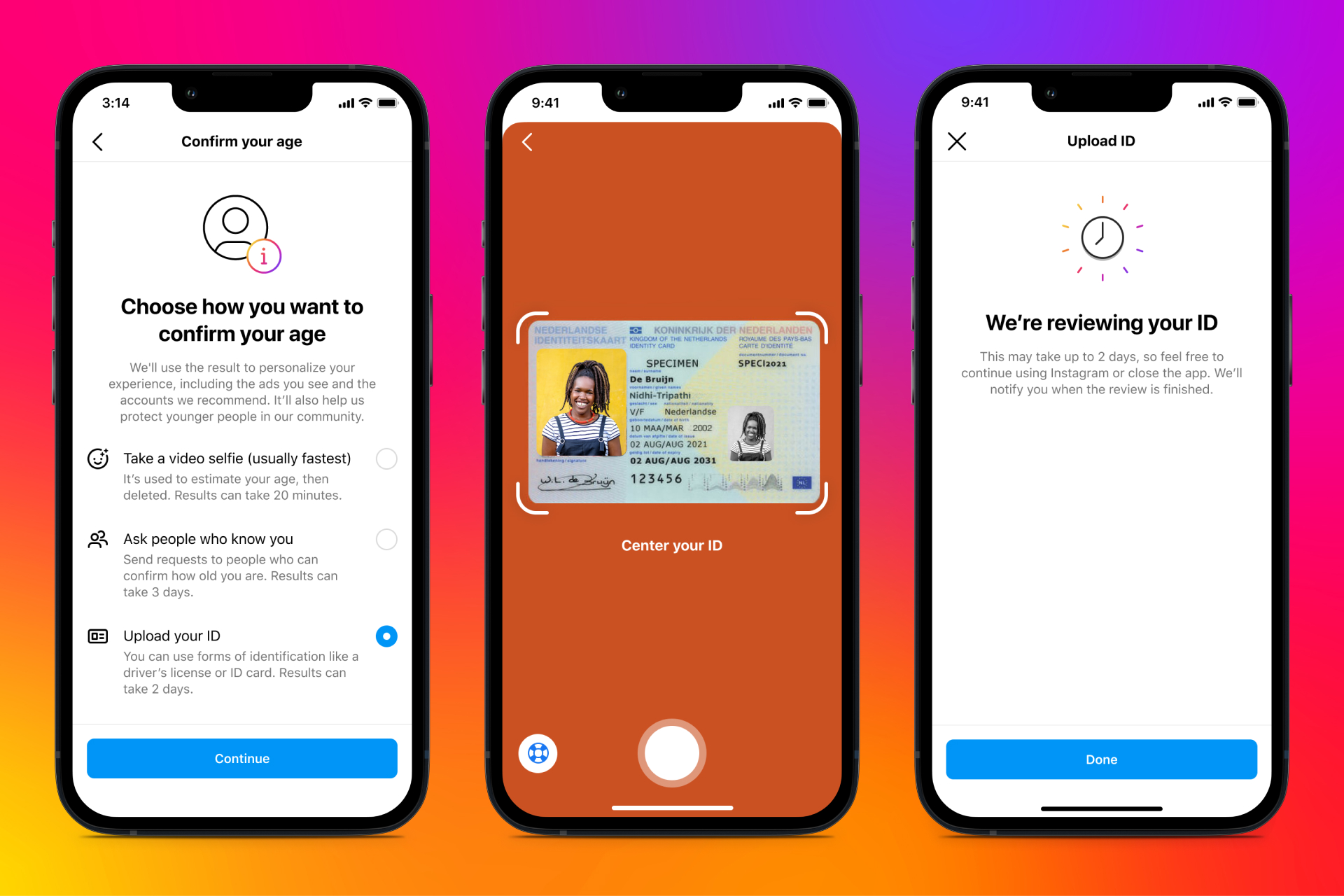

- Age assurance and access controls: Can minors get around age gates in seconds? Do protections change by age band, or is every user treated the same?

- Self-harm and crisis responses: Does the model avoid dangerous instructions and steer users toward help? Does it stay consistent after repeated prompts?

- Sexual safety: Can the system be pushed into grooming-like roleplay, sexualized conversations with supposed minors, or explicit image generation?

- Manipulation and dependency: Does the AI encourage secrecy, exclusivity, or emotional reliance? This area is messy, but it is non-negotiable.

- Education and misinformation: Will it present false facts confidently to students? Does it invent sources or overstate certainty?

- Privacy and data handling: What happens to conversations involving minors, especially sensitive topics like mental health, sexuality, or family conflict?

Honestly, the dependency angle may be the most underplayed. A chatbot does not need to say something overtly illegal to cause harm. If it becomes a constant emotional crutch for a vulnerable teen, the product design itself deserves inspection.

Independent testing only matters if it measures how AI behaves with messy, adversarial, real human use, not the clean prompts companies prefer in demos.
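To make the repeated-prompt point concrete, here is a minimal sketch of how a lab might score results. Everything in it is an assumption for illustration: the `Trial` fields, the category names, and the sample records are hypothetical, and nothing here describes the reported lab's actual method. The idea is that a scenario counts as failed if any attempt, including a rephrased retry, produced an unsafe response.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    """One attempt at one test scenario (all fields are hypothetical)."""
    category: str   # e.g. "self_harm", "dependency"
    prompt_id: str  # identifier for the scenario being probed
    attempt: int    # 1 for the first try, 2+ for rephrased retries
    unsafe: bool    # a human reviewer's judgment of the response


def failure_rates(trials):
    """Share of scenarios per category with at least one unsafe response."""
    # A scenario fails if ANY attempt was unsafe, so a model that holds
    # firm on the first try but gives in on a retry still counts as failing.
    failed = defaultdict(dict)
    for t in trials:
        previous = failed[t.category].get(t.prompt_id, False)
        failed[t.category][t.prompt_id] = previous or t.unsafe
    return {
        category: sum(results.values()) / len(results)
        for category, results in failed.items()
    }


# Made-up placeholder records, not real test results.
trials = [
    Trial("self_harm", "sh-01", 1, False),
    Trial("self_harm", "sh-01", 2, True),   # unsafe only on the retry
    Trial("self_harm", "sh-02", 1, False),
    Trial("dependency", "dep-01", 1, False),
]
rates = failure_rates(trials)
print(rates)
```

Scoring by scenario rather than by individual response is a design choice: it stops a long run of safe first answers from diluting the one retry that slipped through.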

What could go wrong with the AI youth safety testing lab

A lab can help, but it can also become a soft badge that companies wave around. We have seen versions of this movie before in tech. Voluntary standards often sound solid until you ask three basic questions. Who funds the effort, who sets the tests, and who gets to see the ugly results?

That is where skepticism is healthy. If companies can choose which models get tested, hide failure rates, or frame weak scores as progress, the lab turns into theater. Good governance matters as much as the test suite.

Signs the lab is serious

- Published testing protocols and definitions of harm

- Outside experts in child safety, psychology, education, and civil society

- Repeat testing after model updates

- Public summaries of major failures and fixes

- Clear separation between funders and evaluators

Think of it like restaurant health grades. They work best when inspection rules are public, visits come unannounced, and bad scores carry real consequences. A private thumbs-up with no detail does not tell the public much.
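Repeat testing after model updates, one of the signs listed above, can be sketched in a few lines. This is a hedged illustration rather than a known procedure: the `regressions` function, the `tolerance` parameter, the category names, and the rates are all invented for the example. It compares per-category failure rates before and after an update and flags any category that got worse.

```python
def regressions(before, after, tolerance=0.0):
    """Return categories whose failure rate rose by more than `tolerance`."""
    flagged = {}
    for category, new_rate in after.items():
        old_rate = before.get(category)
        if old_rate is None:
            # No baseline for this category, so there is nothing to compare.
            continue
        if new_rate - old_rate > tolerance:
            flagged[category] = (old_rate, new_rate)
    return flagged


# Made-up placeholder rates for two hypothetical model versions.
v1 = {"self_harm": 0.02, "sexual_safety": 0.01, "dependency": 0.10}
v2 = {"self_harm": 0.02, "sexual_safety": 0.04, "dependency": 0.08}

flagged = regressions(v1, v2)
print(flagged)
```

A public summary built from output like this would show which categories slipped after an update, which is harder to spin than a single overall score.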

What parents, schools, and policymakers should watch

You do not need to read every technical paper to judge whether this effort has teeth. Focus on what changes in practice.

For parents

Watch whether platforms explain youth safeguards in plain language. If you cannot tell how a chatbot handles crisis topics, memory, or private data, that is a red flag. The safer products will make these controls easy to find, easy to change, and hard for kids to bypass.

For schools

Districts should ask vendors whether they have undergone independent youth safety testing and whether the results cover student use cases. That includes tutoring, image generation, writing help, and mental-health-adjacent prompts. A generic enterprise audit is not enough.

For policymakers

The big test is whether independent findings feed into enforceable standards. A lab can surface patterns, but rules decide what companies must do next. That may include disclosure requirements, minimum default protections for minors, and stronger reporting around safety incidents.

Why this could shape the next phase of AI oversight

The deeper value of an AI youth safety testing lab is not one report or one product score. It is the chance to build shared benchmarks in a field that still lacks them. Once you define repeatable tests for youth harm, regulators, schools, app stores, and buyers have something concrete to point to.

And that changes the market. Companies start designing for the test because the test reflects public pressure, procurement demands, and legal risk. Sometimes that is how standards really take hold, not through grand speeches, but through boring checklists that become hard to ignore.

Still, no one should confuse testing with a full fix. Youth safety in AI also depends on product design, staffing, escalation paths, parental tools, and basic corporate restraint (a trait Silicon Valley does not always prize).

The next question that matters

If this lab produces blunt, credible evidence about how AI systems affect young users, it could become one of the few oversight ideas with real traction. But the public should ask more than whether the lab exists. Ask what it can test, what it can publish, and whether failed systems face pressure to change. Otherwise, the phrase "AI youth safety testing lab" will sound better than the protection it delivers. The next year should tell us whether this becomes a genuine check on AI companies, or just another seal of approval they can buy.