Inside the Amazon Lab Building an Alternative to Nvidia’s AI Chips

Nvidia dominates the AI chip market, but Amazon’s Trainium is emerging as a serious competitor. Built by a team that traces back to Amazon’s 2015 acquisition of Israeli chip designer Annapurna Labs, Trainium now powers Project Rainier, one of the world’s largest AI compute clusters, whose 500,000 chips are used by Anthropic. AWS claims Trainium3, running on new Trn3 UltraServers, costs up to 50% less than comparable cloud servers for similar AI performance. That cost advantage has attracted both OpenAI and Apple as customers.

Why Trainium Is Gaining Ground Against Nvidia

- Trainium3 is a liquid-cooled, 3-nanometer chip manufactured by TSMC

- AWS says it delivers up to 50% cost savings compared to traditional cloud servers

- Custom Neuron switches create mesh networking that reduces latency between chips

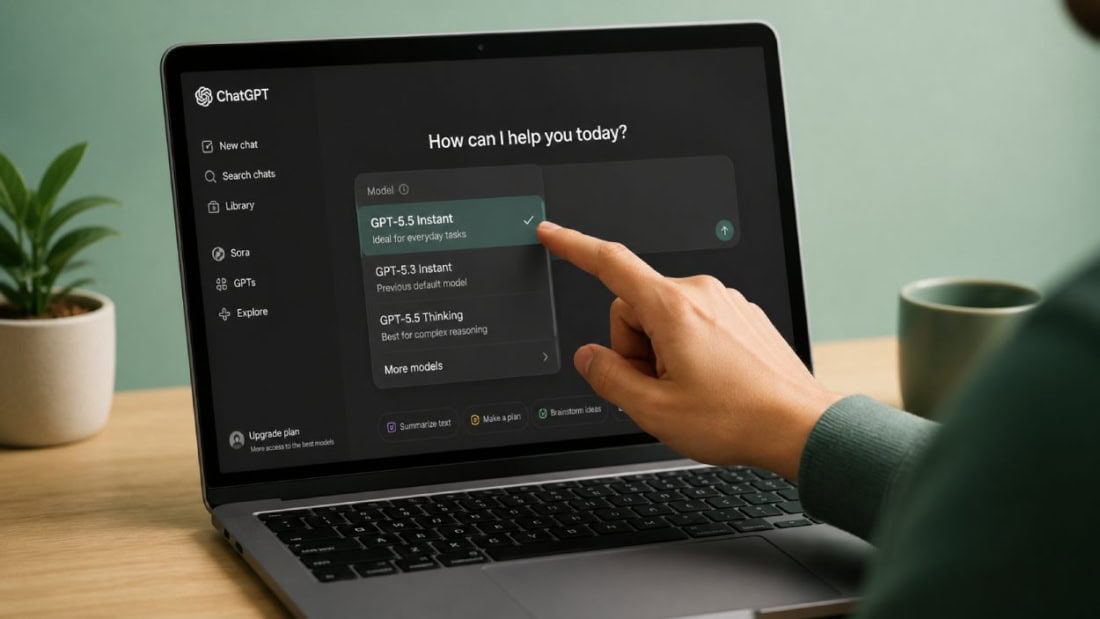

- PyTorch support means switching requires “basically a one-line change and a recompile”

- Amazon committed 2 gigawatts of Trainium capacity to OpenAI as part of a $50 billion investment
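
To make the "up to 50%" figure concrete, here is a minimal sketch of the arithmetic. An "up to" claim sets an upper bound on savings, so it implies a range of possible costs rather than a single number. The function names and the dollar amounts are hypothetical and used only for illustration; they are not AWS pricing.

```python
def best_case_cost(baseline_cost: float, max_saving: float = 0.50) -> float:
    """Lowest cost implied by an 'up to max_saving' claim.

    baseline_cost: what the workload costs on the comparison servers.
    max_saving: the advertised ceiling on savings, as a fraction (0.50 = 50%).
    """
    if baseline_cost < 0:
        raise ValueError("baseline_cost must be non-negative")
    if not 0.0 <= max_saving <= 1.0:
        raise ValueError("max_saving must be between 0 and 1")
    return baseline_cost * (1.0 - max_saving)


def cost_range(baseline_cost: float, max_saving: float = 0.50) -> tuple[float, float]:
    """Return (best case, worst case) cost under an 'up to' savings claim.

    The worst case is no saving at all, i.e. the baseline cost itself.
    """
    return (best_case_cost(baseline_cost, max_saving), baseline_cost)


if __name__ == "__main__":
    # Hypothetical workload costing 1,000,000 on the comparison servers.
    low, high = cost_range(1_000_000.0)
    print(f"Implied cost range: {low:,.0f} to {high:,.0f}")
```

The actual saving for a given workload would fall somewhere in that range, depending on how well the workload maps onto the hardware.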

The Switching Cost Problem Nvidia Relies On

Nvidia’s market position depends partly on switching costs. Applications built for Nvidia’s CUDA stack need to be re-architected to run on other hardware. That has kept developers loyal even when cheaper alternatives exist.

Amazon is attacking this barrier directly. Trainium now supports PyTorch, the most popular open-source framework for AI model development. According to Mark Carroll, AWS director of engineering, the transition requires a one-line code change and a recompile. That claim, if accurate at scale, would remove the biggest obstacle to adoption.
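
As a rough illustration of what a "one-line change and a recompile" can look like, the sketch below keeps the hardware choice in a single constant and flags a recompile whenever the target changes. The device labels, the `TARGET_DEVICE` constant, and the `resolve_device` helper are hypothetical; the real identifier and setup steps depend on the PyTorch backend AWS provides for Trainium, which is not specified here. The sketch uses only the standard library, so it runs without PyTorch or an accelerator.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical device labels for illustration only; real backend names may differ.
KNOWN_DEVICES = {"cpu", "cuda", "trainium"}

# The single line a team would edit when moving between hardware targets.
TARGET_DEVICE = "cuda"


@dataclass(frozen=True)
class RunConfig:
    """Where a training script runs and whether it must be rebuilt first."""
    device: str
    needs_recompile: bool


def resolve_device(requested: str, previous: Optional[str] = None) -> RunConfig:
    """Validate the requested device and flag whether a recompile is needed.

    A recompile is flagged only when a previous target is given and it
    differs from the requested one.
    """
    if requested not in KNOWN_DEVICES:
        raise ValueError(f"unknown device: {requested!r}")
    changed = previous is not None and previous != requested
    return RunConfig(device=requested, needs_recompile=changed)


if __name__ == "__main__":
    # Same script, different target: only the requested device changes.
    print(resolve_device(TARGET_DEVICE))
    print(resolve_device("trainium", previous=TARGET_DEVICE))
```

Whether a real migration stays this small depends on how much of the codebase calls hardware-specific kernels directly rather than going through the framework.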

Apple publicly praised the chip team’s work at AWS re:Invent in 2024. In a rare moment of openness, Apple’s director of AI described how Apple used Graviton, Inferentia, and Trainium across its infrastructure.

How Trainium3 Gets Built

The Trainium3 chip is designed at a lab in Austin, Texas, and manufactured by TSMC on a 3-nanometer process. The lab hosts a process called the “bring-up,” where engineers activate a new chip for the first time after 18 months of design work. Teams work 24/7 for three to four weeks, fixing issues so chips can be mass-produced.

The chip is liquid-cooled, a significant engineering upgrade from earlier air-cooled versions. During the Trainium3 bring-up, the prototype’s heat sink dimensions were off, so engineers grabbed a grinder and modified it on the spot, working in a conference room so as not to disrupt the team’s pizza-party atmosphere.

The Competitive Picture

Amazon has also partnered with Cerebras Systems, integrating its inference chip on servers running Trainium for faster AI performance. Combined with custom Nitro virtualization hardware and proprietary liquid cooling, Amazon controls the full stack from chip to server to networking.

Trainium is already a multibillion-dollar business for AWS, according to CEO Andy Jassy, and the team is currently designing Trainium4. Whether Trainium can capture significant market share from Nvidia depends on how quickly large AI labs like OpenAI adopt it at production scale. The $50 billion deal suggests that adoption is accelerating.