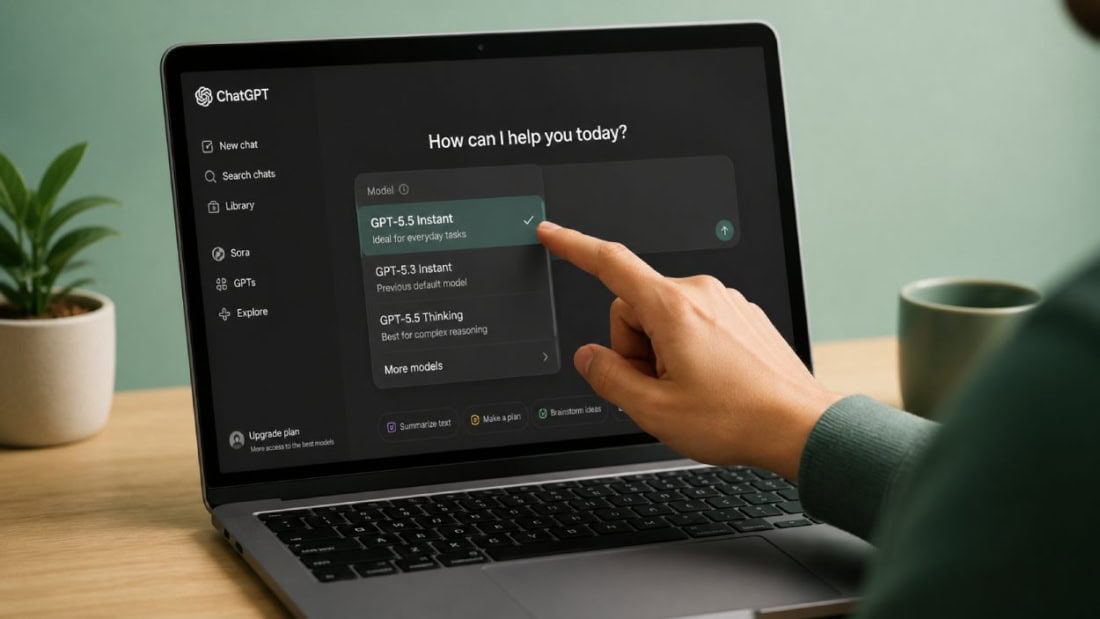

OpenAI Makes GPT-5.5 Instant the Default in ChatGPT

If ChatGPT has felt a little different lately, you are not imagining it. OpenAI has started making GPT-5.5 Instant the default in ChatGPT for more users, a shift first reported by The Verge. That matters because the default model shapes nearly every casual query, work draft, coding prompt, and quick fact check you run through the app. Most people never switch models manually. They use what is in front of them.

So what changes when the default gets faster and lighter? Usually, you trade some depth for speed, cost control, and smoother everyday use. For OpenAI, this is a product decision as much as a model decision. And for you, it raises a simple question. Is the faster default actually the better assistant for daily work?

What stands out

- Making GPT-5.5 Instant the default in ChatGPT signals that OpenAI wants quicker responses for routine use.

- The move fits a familiar pattern in AI products. The default model is often tuned for speed, scale, and lower operating cost.

- Power users may still prefer heavier models for reasoning, coding, and nuanced writing tasks.

- This change matters most because many ChatGPT users never touch the model picker.

Why the GPT-5.5 Instant default in ChatGPT matters

Default settings quietly run the show. People talk about frontier models, benchmark scores, and reasoning gains, but the default model is what actually shapes user behavior at scale. It is the front door.

OpenAI is making a bet that a faster model will satisfy more people more of the time. That is not a wild idea. For a large slice of ChatGPT use, speed wins. You ask for a summary, a rewrite, a meal plan, a quick code fix, or an email draft. Waiting longer for a slightly sharper answer often feels like bad product design.

The real product is not just the smartest model. It is the one people will keep using because it feels fast, stable, and easy.

Look, this is how consumer software usually matures. Early on, companies chase raw capability. Later, they optimize the experience people actually come back for.

What OpenAI appears to be optimizing for

Speed first

The "Instant" in the name is not subtle. OpenAI seems to want ChatGPT to feel more responsive in the same way Google Search feels immediate. That matters in chat interfaces because lag kills momentum. A two-second wait can feel minor on paper and annoying in practice.

Lower cost at larger scale

Running a top-tier model for every user prompt is expensive. A faster, lighter default likely helps OpenAI manage compute demand while keeping the product responsive during peak usage. That is not glamorous, but it is non-negotiable if you serve millions of people every day.

Better fit for routine tasks

Most prompts are not graduate seminars. They are quick asks. Rewrite this note. Explain this term. Turn these bullets into an email. If that is your workflow, a fast model can feel like the right tool.

One model cannot be best at everything.

Where the GPT-5.5 Instant default in ChatGPT may fall short

There is always a tradeoff. Faster models often do well on common tasks, but they can wobble when prompts get layered, ambiguous, or technically dense. Think of it like cooking on high heat. Great for a quick stir-fry. Less ideal for a slow braise.

If you rely on ChatGPT for serious coding help, legal-adjacent drafting, technical research synthesis, or careful step-by-step reasoning, you may notice the limits sooner. The answer may arrive faster, but speed alone does not make it reliable.

Honestly, this is where the hype around defaults can get sloppy. A default model is not a declaration that it is the best model in every context. It is a decision about what works for the median user at scale.

How to tell if the new default works for your needs

You do not need benchmark charts to test this. Run the same prompts you use every week and compare output quality, follow-up burden, and response time. Keep it practical.

- Pick 5 common prompts from your real workflow.

- Test quick tasks like summaries, rewrites, and short research questions.

- Test one hard prompt that needs reasoning or structured output.

- Check whether you need more follow-up prompts to get the result you want.

- Decide based on time saved, not marketing claims.

And ask yourself one blunt question. Do you want the fastest decent answer, or the best possible answer on the first try?
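If you want to keep score while running that comparison, a small script can help. The sketch below is one possible way to do it, not an official tool. It makes no calls to ChatGPT; you time the responses and rate the results yourself, then log them. The `Trial` fields, the 1-5 quality scale, and the 30-second cost assumed for writing each follow-up prompt are all illustrative assumptions, and the numbers in the sample run are made up.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Trial:
    model: str               # label you choose, e.g. "default" or "heavier"
    prompt: str              # short name for the prompt you tested
    response_seconds: float  # wait for one response, timed by hand
    followups: int           # extra prompts needed to get a usable result
    quality: int             # your own 1-5 rating of the final result


# Assumed time spent writing one follow-up prompt; adjust to your own pace.
FOLLOWUP_OVERHEAD_SECONDS = 30.0


def total_seconds(trial: Trial) -> float:
    """Estimate time to a usable result.

    Each follow-up costs one more response wait plus the time to write it.
    """
    waits = trial.response_seconds * (1 + trial.followups)
    writing = FOLLOWUP_OVERHEAD_SECONDS * trial.followups
    return waits + writing


def summarize(trials: list[Trial]) -> dict[str, dict[str, float]]:
    """Group trials by model label and average the three measures."""
    by_model: dict[str, list[Trial]] = {}
    for trial in trials:
        by_model.setdefault(trial.model, []).append(trial)

    return {
        model: {
            "avg_total_seconds": mean([total_seconds(t) for t in group]),
            "avg_followups": mean([t.followups for t in group]),
            "avg_quality": mean([t.quality for t in group]),
        }
        for model, group in by_model.items()
    }


if __name__ == "__main__":
    # Made-up numbers for illustration only; replace with your own log.
    log = [
        Trial("default", "weekly summary", 2.0, 1, 4),
        Trial("heavier", "weekly summary", 8.0, 0, 5),
    ]
    for model, stats in summarize(log).items():
        print(model, stats)
```

The point of `total_seconds` is that a quick first reply is only a saving if it does not trigger extra rounds. If the faster model's average total time is lower and its quality rating is close, the default is probably serving you well.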

What this says about the ChatGPT product strategy

This move lines up with a broader trend in AI products. Companies are separating models by job instead of pushing one giant model into every task. That is sensible. You do not use a race bike for grocery runs, and you do not need heavyweight reasoning for every email edit.

For OpenAI, setting GPT-5.5 Instant as the default in ChatGPT suggests a layered strategy:

- Use a fast general model for everyday chat.

- Reserve more advanced models for premium tiers or specialized tasks.

- Reduce friction for mainstream users who care more about responsiveness than model taxonomy.

That last point matters more than it sounds. Most users do not want to study a menu of model names. They want the app to work.

How this compares to the wider AI market

OpenAI is hardly alone here. Google, Anthropic, and other AI vendors have all wrestled with the same product tension. Should the default model aim for maximum intelligence, or should it aim for the best everyday experience? Those are not always the same thing.

The answer usually depends on audience. Consumer chat products reward speed and simplicity. Enterprise buyers often care more about consistency, controls, and task-specific performance. If OpenAI sees ChatGPT as both a mass consumer app and a serious work tool, it has to juggle both.

That balancing act is getting harder, not easier (especially as users expect one chat box to handle everything from travel plans to SQL debugging).

What you should do next

If you use ChatGPT casually, this change will probably feel fine, maybe even better. Faster replies make the product feel lighter on its feet. For quick work, that counts.

If you use ChatGPT for deeper analysis, do not assume the default is your best option. Test model switching when accuracy, reasoning, or nuance matters. A few extra seconds may save you several rounds of corrections.

The bigger question is where AI assistants go from here. Will defaults keep drifting toward speed and cost efficiency, while the best reasoning sits behind extra clicks and premium tiers? That seems likely. Watch the default model closely. It tells you what the company thinks most users are willing to trade away.