Big Tech AI Capital Spending Is Racing Toward $1 Trillion

You are watching one of the biggest spending waves in tech history unfold in real time. The problem is that raw headline numbers can blur what actually matters. If Big Tech AI capital spending tops $1 trillion by 2027, as analysts cited by CNBC now expect, you need to know where that money is going, why it keeps climbing, and what it says about the AI market’s real shape.

This matters now because capital expenditure is the hard proof behind all the AI talk. Companies can make flashy product demos all day. Writing checks for data centers, GPUs, networking gear, and power infrastructure is different. That is commitment. And it gives you a clearer read on which parts of the AI economy are real, which parts are overheated, and which bottlenecks could slow everyone down.

What jumps out

- Big Tech AI capital spending is shifting from experiment mode to full industrial buildout.

- The money is flowing into data centers, Nvidia-class accelerators, networking, and power capacity.

- Cloud giants are spending because demand for AI services appears durable, not temporary.

- The biggest risk is not weak interest in AI. It is whether infrastructure can keep up.

Why Big Tech AI capital spending keeps climbing

The short answer is simple. AI is expensive to build at scale. Training frontier models burns through huge clusters of chips. Serving those models to millions of users adds another layer of cost. Then you need data center shells, cooling systems, switches, fiber, and electricity.

Look, this is no longer a software-only story. It is an infrastructure story. Think of it like building a port city, not launching an app. The glamorous part gets attention, but the docks, roads, and power lines decide whether the whole thing works.

CNBC reported that analysts now see Big Tech capital expenditures topping $1 trillion in 2027, driven by the AI boom. That figure matters because capex is one of the few metrics executives cannot easily dress up. If firms like Microsoft, Alphabet, Amazon, and Meta keep lifting spending guidance, they are signaling that customer demand and competitive pressure are strong enough to justify the burn.

Capex is where AI hype meets the balance sheet.

Where the money is actually going

If you hear “AI spending” and picture only GPUs, you are missing half the bill. The full stack is wider and more stubbornly expensive than many bulls admit.

- Accelerators and chips

High-end AI chips remain the centerpiece. Nvidia still sits at the center of this market, while AMD and custom silicon efforts from the hyperscalers keep gaining attention.

- Data center expansion

New AI workloads demand more space, denser racks, and upgraded cooling. Older facilities often cannot handle the thermal and power requirements.

- Networking gear

Moving data fast between clusters is non-negotiable. Ethernet, InfiniBand, optical systems, and advanced switching all become mission-critical.

- Power infrastructure

Utilities, transformers, substations, and backup systems are becoming central parts of the AI story. Honestly, this may be the least flashy and most decisive line item.

- Custom silicon and software layers

Big Tech is also spending to reduce long-run dependence on outside suppliers and improve efficiency per workload.

That power piece deserves more attention.

Investors love to talk about chips because chips are easy to count and compare. Power is messier. But if the grid, local permitting, or equipment lead times break down, AI deployment slows no matter how many accelerators a company orders.

What Big Tech AI capital spending says about demand

Here is the real question. Are these companies overbuilding, or are they reacting to genuine demand that still outruns supply?

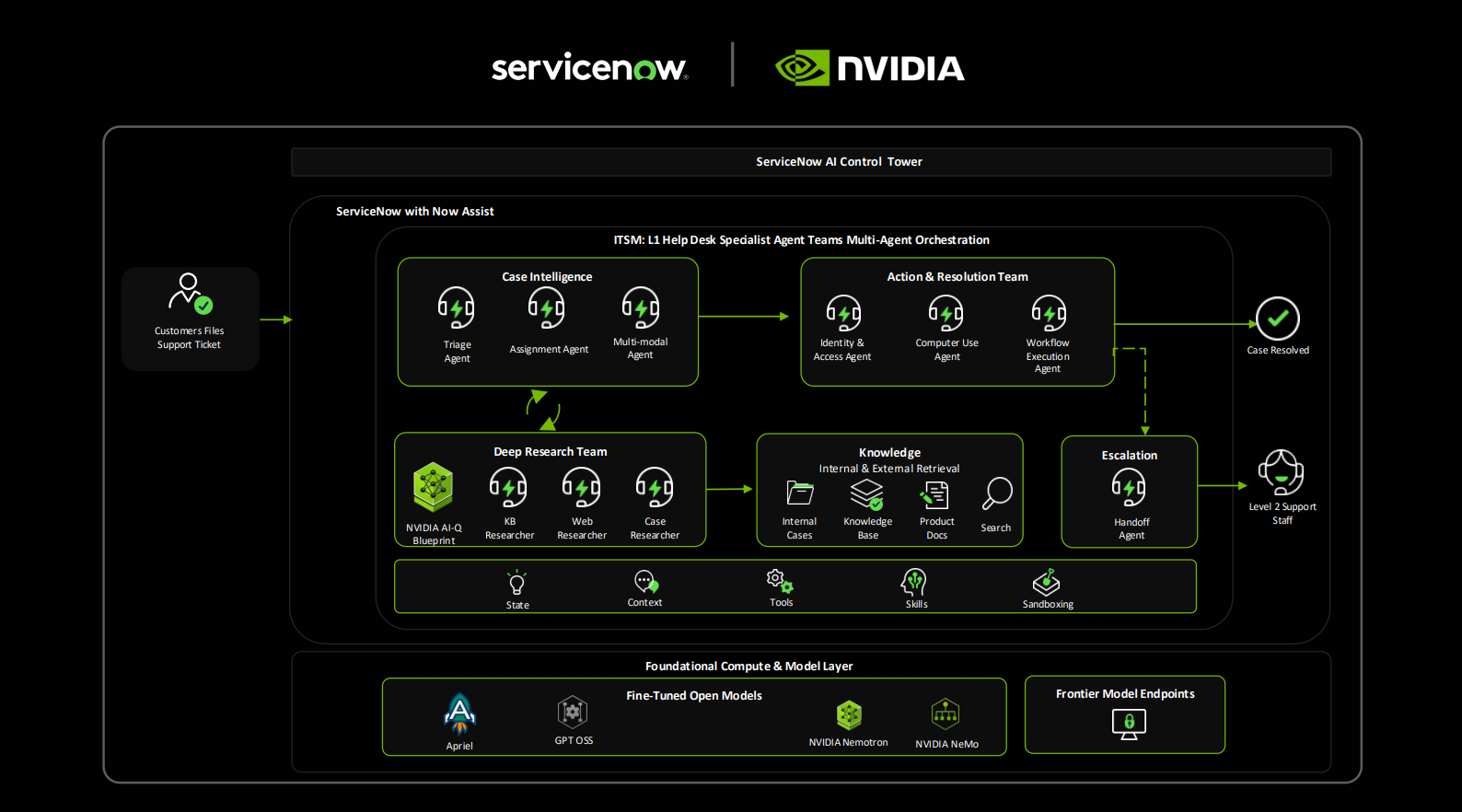

Right now, the evidence leans toward real demand. Cloud providers have repeatedly pointed to strong AI workload growth, and enterprise customers keep moving from pilot projects to production use cases. Those include coding tools, search, customer service, document processing, ad targeting, and internal knowledge systems.

But demand is uneven. Some AI products will earn real revenue. Others will be expensive features in search of a business model. A veteran reporter’s rule applies here: follow the buyers who renew, not the demos that trend on social media.

And Big Tech is spending because no major platform company wants to be caught short. If your rival can offer faster inference, better model access, or cheaper enterprise AI services, you risk losing both cloud share and developer loyalty. This is an arms race, yes, but it is also basic defense.

The risks behind the $1 trillion buildout

The bullish case is obvious. AI becomes a long-cycle infrastructure market, and the largest platforms gain even more scale advantages. The bear case is less dramatic but still serious.

1. Returns could lag the spending

Capex can rise faster than revenue for a while. That is manageable if demand compounds. It gets ugly if pricing falls before utilization improves.

2. Supply bottlenecks may shift

Last year it was chips. Next year it could be power, cooling gear, or construction timelines. Infrastructure constraints move around.

3. Regulators may pay closer attention

If a handful of giant firms control the capital base needed to train and serve leading models, competition questions get sharper. That is especially true in cloud and model distribution.

4. Enterprises may become more selective

Early AI budgets often chase curiosity. Later budgets demand hard returns. That shift tends to expose weak products fast.

But the core thesis still looks solid. Even if some spending gets ahead of near-term monetization, the broad direction seems hard to reverse because AI capability now ties directly to platform strength.
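The first risk above, returns lagging the spending, comes down to simple arithmetic. Here is a rough Python sketch of it. Every figure is hypothetical and chosen only for illustration; none comes from the CNBC report or any company filing. The function name `years_to_recover` and its parameters are made up for this example. It assumes revenue each year is capacity times utilization times price, and counts the years until cumulative revenue covers the upfront capex.

```python
from typing import Optional


def years_to_recover(
    capex: float,
    capacity: float,
    start_util: float,
    util_gain: float,
    price: float,
    price_decline: float,
    horizon: int = 20,
) -> Optional[int]:
    """Return the first year in which cumulative revenue covers capex.

    All inputs are hypothetical. Revenue in a year is modeled as
    capacity * utilization * price. Utilization rises by `util_gain`
    each year (capped at 100%), and price falls by the fraction
    `price_decline` each year. Returns None if capex is not
    recovered within `horizon` years.
    """
    cumulative = 0.0
    util = start_util
    for year in range(1, horizon + 1):
        cumulative += capacity * util * price
        if cumulative >= capex:
            return year
        util = min(1.0, util + util_gain)
        price *= 1.0 - price_decline
    return None


# Illustrative scenario A: utilization improves and pricing holds.
stable_pricing = years_to_recover(
    capex=100.0, capacity=100.0, start_util=0.4,
    util_gain=0.1, price=0.5, price_decline=0.0,
)

# Illustrative scenario B: same buildout, but pricing falls 30% a year.
falling_pricing = years_to_recover(
    capex=100.0, capacity=100.0, start_util=0.4,
    util_gain=0.1, price=0.5, price_decline=0.3,
)

print("Stable pricing, years to recover:", stable_pricing)
print("Falling pricing, years to recover:", falling_pricing)
```

With these made-up inputs, the stable-pricing case recovers its capex in year 4, while the falling-pricing case takes far longer even though utilization climbs at the same rate. That is the "gets ugly" scenario in miniature: price erosion can outrun utilization gains.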

Who benefits if Big Tech AI capital spending keeps rising

The obvious winners are hyperscalers and chip suppliers. Still, the second-order winners may be just as interesting.

- Semiconductor firms that supply accelerators, memory, packaging, and interconnects

- Networking vendors building high-bandwidth, low-latency systems

- Data center operators with access to land, power, and fast deployment cycles

- Utilities and power equipment companies that can support dense AI facilities

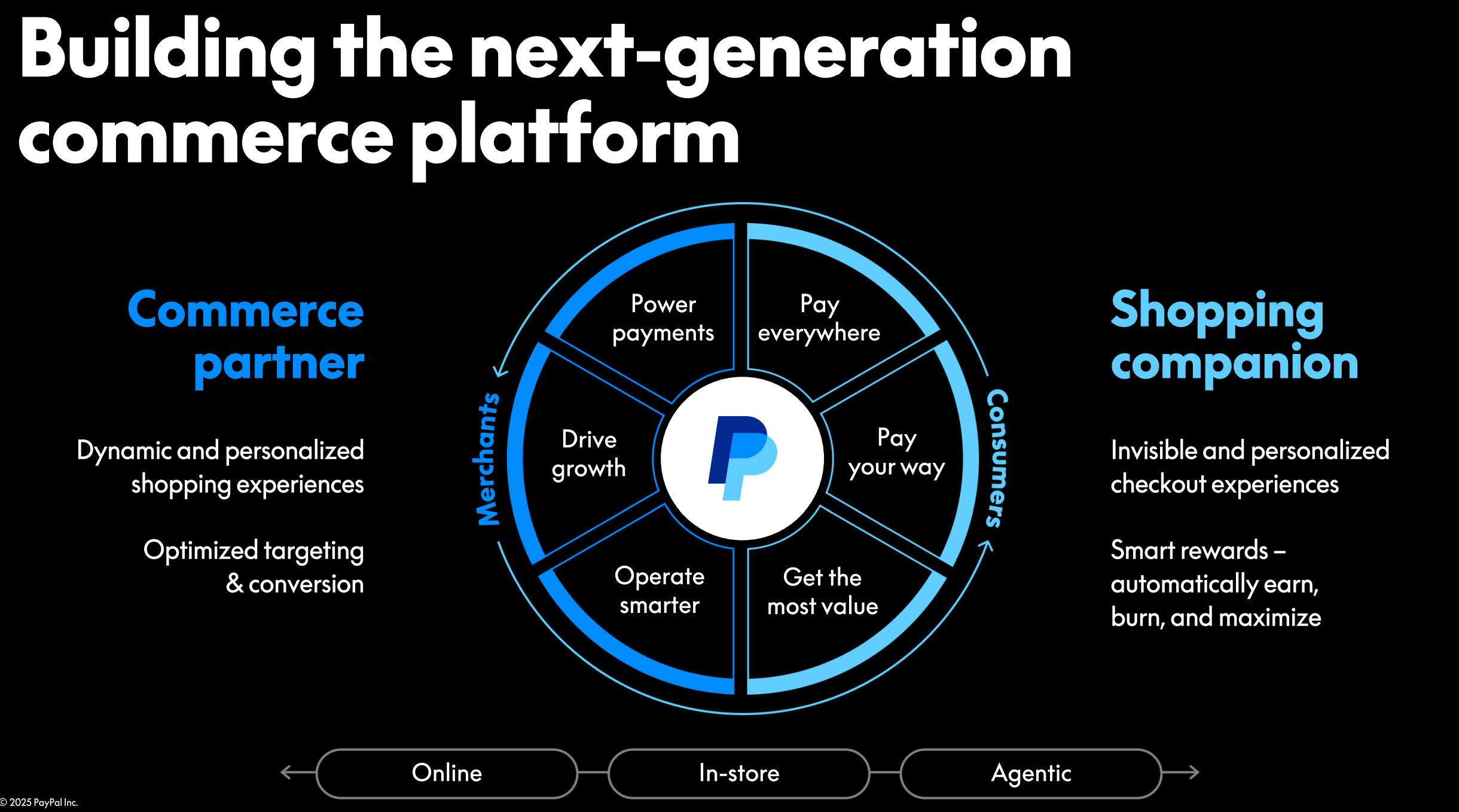

- Enterprise software firms that turn expensive AI infrastructure into recurring business value

Here’s the thing. The winners are not always the loudest brands. In many booms, the steady suppliers make the cleanest money. Think less red-carpet product launch, more cement and steel.

What you should watch next

If you want to judge whether this buildout remains healthy, track a few specific signals instead of every AI headline.

- Quarterly capex guidance from Microsoft, Amazon, Alphabet, and Meta

- Cloud revenue tied to AI services and model consumption

- Nvidia and AMD supply commentary

- Data center vacancy, leasing, and construction timelines

- Power availability in key data center markets

- Enterprise reports on AI project deployment and spending discipline

One question is worth asking here: if spending really crosses the trillion-dollar line, who besides the largest tech firms can still compete at the frontier?

That is where this story gets sharper. Frontier AI may start to look less like the open web era and more like a capital-heavy utility market, where only a few players can fund the base layer and everyone else builds on top.

The next pressure point

The CNBC report is a marker, not the finish line. A forecast of more than $1 trillion in Big Tech AI capital spending by 2027 tells you the industry has moved past curiosity and into heavy construction. It also tells you the next battles will be fought over economics, access, and infrastructure control. Watch the companies that can turn spending into durable services. Then watch the bottlenecks. They usually tell the truth before executives do.