ChatGPT’s Product Picks Keep Missing the Mark

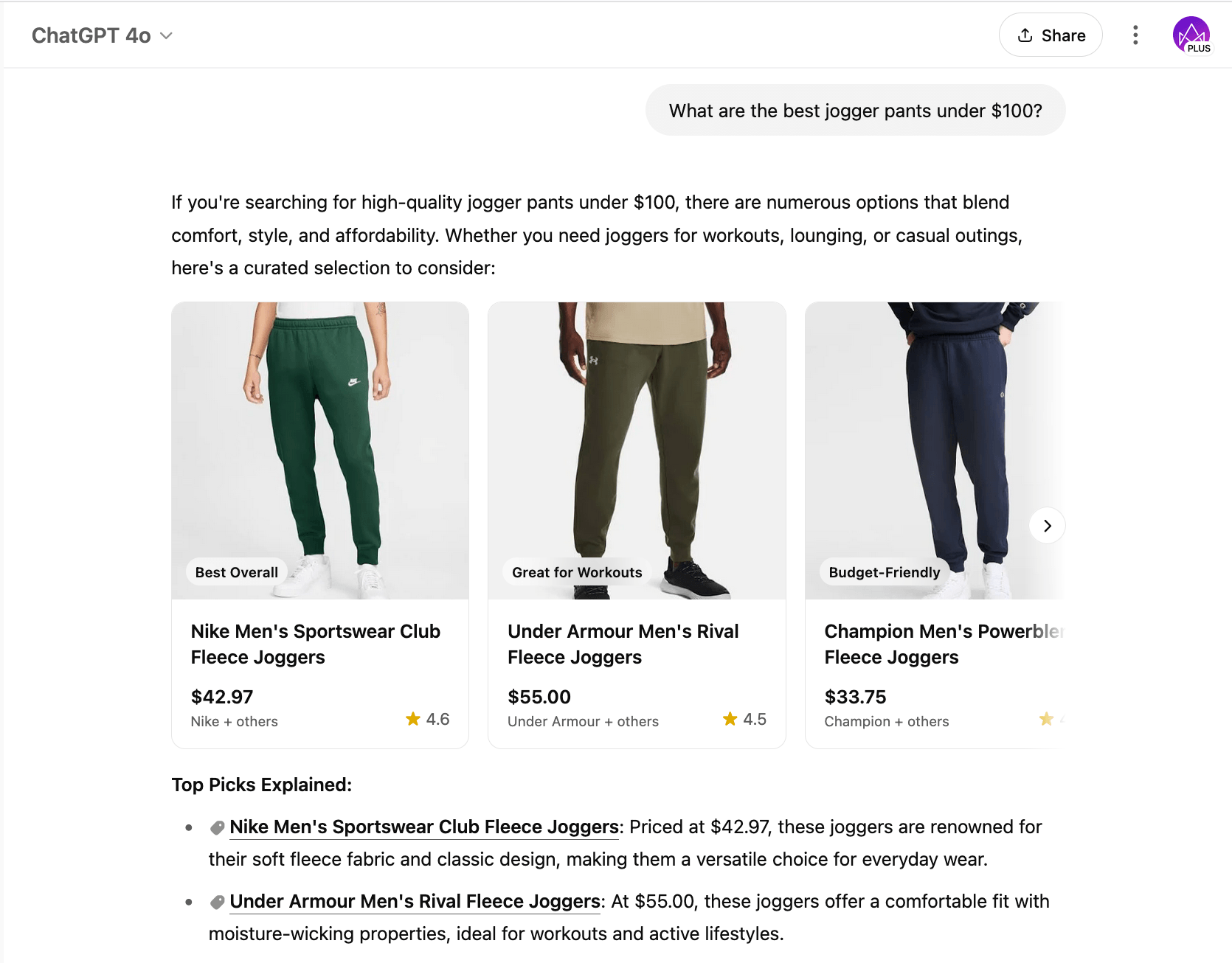

You want quick buying advice, not a guessing game. Yet ChatGPT product recommendations still trip over basic facts. I saw this when I asked for WIRED’s top picks and got nonexistent gadgets. That matters now because more shoppers lean on chatbots instead of trusted reviewers, and bad tips waste money. The model sounds confident even when it hallucinates, and many people won’t cross-check. The stakes rise as retailers bolt these bots into their storefronts. So how do you get value without being misled?

Fast takeaways

- ChatGPT mixes real reviews with invented products, even when asked about a specific outlet.

- Confident wording can mask gaps in its training data.

- Clear prompts and cross-checks lower your risk but never eliminate it.

- Human-curated lists remain more reliable for now.

Where ChatGPT product recommendations stumble

When I asked ChatGPT what WIRED recommends, it served titles and devices WIRED never reviewed. The bot stitched together brands and adjectives like a fridge-magnet poet, confident yet off target. Who wants to trust a review bot that cannot name a real product?

The core issue is retrieval. Without live links to vetted review databases, the model falls back on statistical guesses. Think of it like asking a point guard to play goalie; the skills do not match the role. You may get a flashy save, or you may get an own goal.

“ChatGPT sounds sure of itself even when the shelves are empty.”

That one line tells the story: hallucinations cost you time and money.

How to make ChatGPT product recommendations safer

You cannot fix the model, but you can reduce your exposure. Start with specific context: the outlet, the category, and the year. Then ask for links so you can verify the sources. If you see vague language, stop and check.

- Use brand and model names in your query, not just “best laptop.”

- Ask for publication dates and compare them against what is actually in stock today.

- Cross-check against a known review site before buying.

- Ask the bot to cite at least two real-world sources, then open them to confirm they exist and say what the bot claims.

Here’s the thing: retail chatbots built on the same stack will likely repeat these quirks unless they connect to verified, up-to-date product data.

Better options than raw ChatGPT product recommendations

Rely on curated lists from reviewers who publish their names and testing methods. For prices, browser extensions that pull from live retailer data will beat a text model trained on older information. And if you still want a quick read, ask the bot to summarize a specific URL rather than invent a list.

Sometimes a single call to a human editor saves an hour of returns.

Where the industry goes next

Model makers are racing to add retrieval systems and fact filters. Retailers will need to prove to shoppers that these fixes work, by disclosing their data sources and validating recommendations in real time. Until then, trust but verify. Will you hand your wallet to a bot that still hallucinates brands?