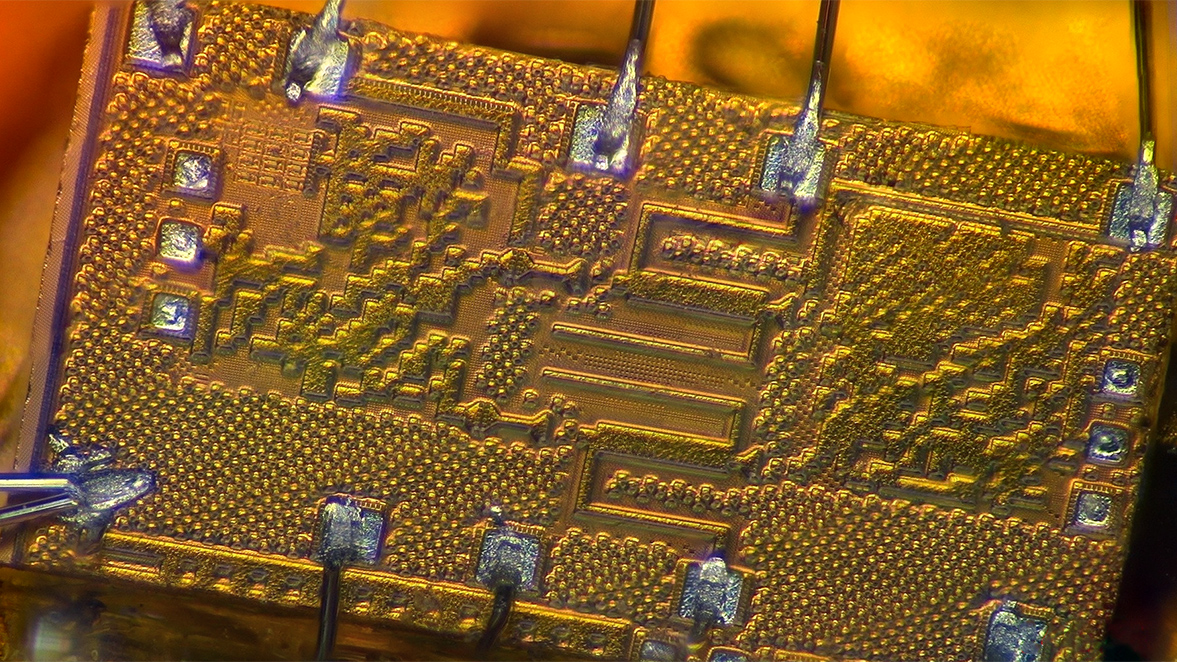

Cognichip bets on AI chip design to shrink hardware risk

AI hardware teams hit a wall when tape-out costs balloon and schedules slip. Cognichip claims AI-driven chip design can cut that drag by automating floorplanning, verification, and power tuning, and it has just raised $60 million to prove it. The company is pitching toolchains that learn from prior layouts, flag timing traps, and suggest fixes faster than sleep-deprived engineers. That matters now because every extra spin can wipe out months of model revenue and burn cash you would rather spend on training data. If you are scaling accelerators, you need a path to predictable delivery that does not sacrifice reliability. The pitch is simple to grasp: fewer respins, cleaner power budgets, and a shot at staying ahead while Nvidia, Intel, and AMD dominate headlines.

What matters now

- Cognichip raised $60M to ship AI-first design automation tools for silicon teams.

- The company says it can cut weeks from layout closure and improve power targets.

- Its stack leans on reinforcement learning and historical tape-out data to propose floorplans.

- Investors are betting chip houses want fewer respins as AI workloads surge.

Cognichip wants AI to design the chips that power AI, and just raised $60M to try.

AI chip design playbook

I have seen EDA cycles chew up startups before, so a fresh take is overdue. The Cognichip stack tries to simplify floorplanning like a basketball coach making mid-game substitutions: keep the best five on the court and adjust when the defense shifts. It mines historical netlists, spots congestion, and proposes alternate placements. Who trusts a model to pick floorplans better than a veteran engineer? That skepticism is healthy, but the allure is clear when the alternative is another quarter lost to routing fixes.

- Start with critical path profiling to feed the model accurate timing edges.

- Run the AI-assisted placer to surface floorplans that honor power domains.

- Validate with static timing analysis and manual spot checks (for example, on congested routing layers).

- Lock in a candidate and push automated verification to catch corner cases early.

- Track each iteration, because data from misses improves the next spin.
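The steps above can be sketched as a small selection loop. This is a minimal illustration, not Cognichip's implementation: the `Candidate` fields, the `IterationLog` class, and the `select_candidate` function are all hypothetical names, and the metrics stand in for whatever a real static timing and power flow would report.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Candidate:
    """One proposed floorplan with its reported metrics (hypothetical fields)."""
    name: str
    worst_slack_ns: float  # worst slack from static timing analysis; negative means a violation
    power_mw: float        # estimated total power
    domains_ok: bool       # True if the placement honors the declared power domains


@dataclass
class IterationLog:
    """Keeps every attempt, including rejects, so misses can inform the next spin."""
    entries: list = field(default_factory=list)

    def record(self, candidate, accepted, reason):
        self.entries.append(
            {"name": candidate.name, "accepted": accepted, "reason": reason}
        )


def select_candidate(candidates, log, power_budget_mw):
    """Return the lowest-power candidate that passes every check, or None."""
    passing = []
    for c in candidates:
        if not c.domains_ok:
            log.record(c, False, "power domain violation")
        elif c.worst_slack_ns < 0:
            log.record(c, False, "timing violation")
        elif c.power_mw > power_budget_mw:
            log.record(c, False, "over power budget")
        else:
            passing.append(c)
    if not passing:
        return None
    best = min(passing, key=lambda c: c.power_mw)
    for c in passing:
        reason = "selected" if c is best else "passed but not lowest power"
        log.record(c, c is best, reason)
    return best


# Made-up numbers, purely for illustration.
candidates = [
    Candidate("fp_a", -0.05, 410.0, True),
    Candidate("fp_b", 0.02, 395.0, True),
    Candidate("fp_c", 0.10, 430.0, True),
    Candidate("fp_d", 0.04, 380.0, False),
]
log = IterationLog()
best = select_candidate(candidates, log, power_budget_mw=420.0)
print(best.name)  # fp_b
```

Rejected candidates stay in the log with a reason attached, which is the part that matters if data from misses is supposed to improve the next spin.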

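That feedback loop is the inflection point: if misses really do improve later runs, each spin should start from a better guess than the last.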

AI chip design risks and reality

The bold claim is fewer respins, yet tape-out risk never goes to zero. Models trained on prior blocks may choke on novel accelerator topologies. Power intent files can drift, and a stray constraint can undo gains. The product will need tight guardrails and transparent logs so engineers can audit every suggestion. Like a chef balancing salt and acid, you still need taste to know when an overconfident model has over-seasoned the layout.

- Data fit: If training data skews toward older nodes, results on 3 nm designs are likely to lag.

- Toolchain friction: Integration with Synopsys and Cadence flows must be painless or teams will bail.

- Accountability: Clear diffs on every auto-placement keep blame traceable when timing breaks.
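The accountability point is easy to picture in code. Below is a rough sketch of what a placement diff could look like, assuming a placement is just a mapping from block name to an (x, y) origin. The `diff_placements` function and the block names are hypothetical and do not reflect any vendor's format.

```python
def diff_placements(before, after):
    """List every block that was added, removed, or moved between two placements.

    Each placement is a dict mapping a block name to its (x, y) origin.
    Returns tuples of (block, kind, old_position, new_position), sorted by block name.
    """
    changes = []
    for block in sorted(set(before) | set(after)):
        old = before.get(block)
        new = after.get(block)
        if old == new:
            continue  # unchanged blocks are left out of the audit trail
        if old is None:
            kind = "added"
        elif new is None:
            kind = "removed"
        else:
            kind = "moved"
        changes.append((block, kind, old, new))
    return changes


# Made-up coordinates, purely for illustration.
before = {"alu": (0, 0), "cache": (10, 0), "noc": (5, 5)}
after = {"alu": (0, 0), "cache": (12, 0), "dma": (3, 8)}

for block, kind, old, new in diff_placements(before, after):
    print(f"{block}: {kind} {old} -> {new}")
```

Storing a diff like this next to each timing report would let an engineer trace a broken path back to the specific auto-placement that changed it.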

Where this heads next

Investors are betting the next efficiency wave comes from design, not bigger fabs. If Cognichip can prove repeatable wins on live tape-outs, incumbents will respond fast. The upside for you is obvious: fewer midnight ECOs and more time tuning models. The open question: will engineers accept AI partners once the first silicon lands on time?