The performance gap between open-source and proprietary large language models has narrowed dramatically in early 2026. Models like Qwen 3.5, Llama 4, and Mistral Small 4 now match or exceed last year’s proprietary frontier models on standard benchmarks. For many production workloads, the choice between open-source and proprietary is no longer about capability but about deployment preferences, support, and ecosystem.

Where Open-Source Models Excel in 2026

- Qwen 3.5 9B matches 70B-class reasoning performance at a fraction of the compute cost

- Llama 4 Scout (17B active parameters) scores within 2% of GPT-4 Turbo on MMLU and HumanEval

- Mistral Small 4 ships 119B parameters with Apache 2.0 licensing

- DeepSeek R1 offers specialized reasoning capabilities that compete with o1-class models

- Open-source fine-tuning allows adaptation to specific domains without API dependency

Why the Gap Closed So Quickly

Three factors accelerated open-source progress. First, training data quality improved as organizations like Hugging Face, EleutherAI, and the Allen Institute for AI released curated, high-quality training sets. Second, distillation techniques let smaller open-source models learn from the outputs of larger proprietary ones. Third, hardware became more accessible as cloud GPU pricing dropped and consumer GPUs gained enough memory to run meaningful models.

Open-source LLMs now compete with proprietary models not because they scaled to the same size, but because better data, training techniques, and distillation methods closed the quality gap at smaller parameter counts.

The most important shift is philosophical. In 2024, open-source was seen as a budget alternative to proprietary APIs. In 2026, open-source is a strategic choice. Companies use open-source models to maintain control over their AI stack, avoid vendor lock-in, and fine-tune for specific use cases that general-purpose APIs handle poorly.

The Remaining Advantages of Proprietary Models

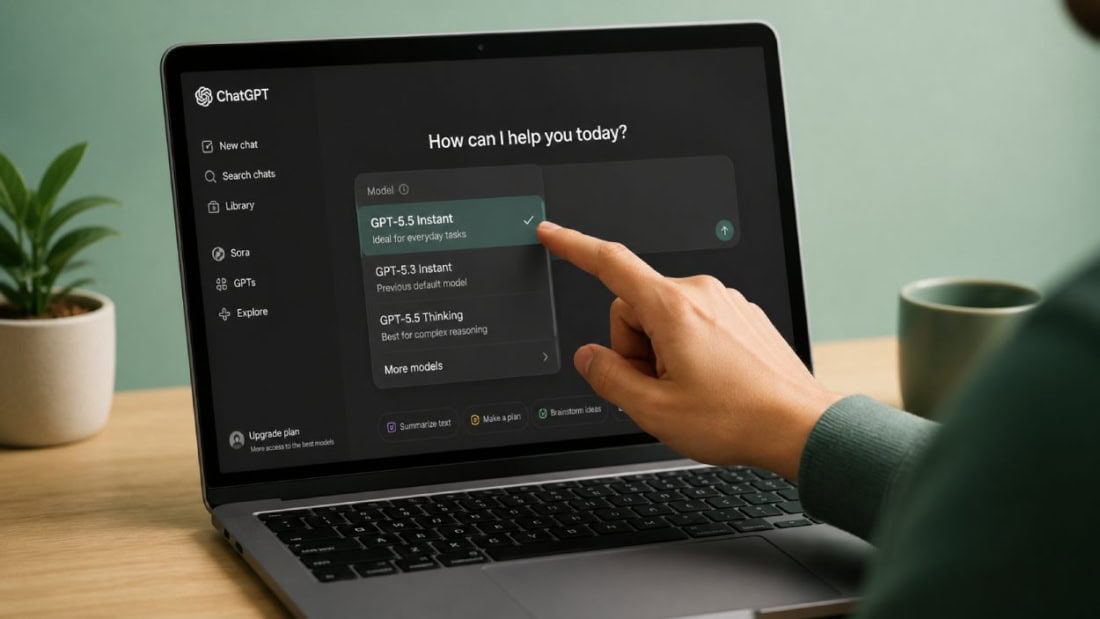

Proprietary models still lead in three areas. First, maximum context window size: models like GPT-5.4 and Claude Opus 4.6 offer 1 million tokens, versus the 128K-256K typical of open-source models. Second, multimodal capabilities, which remain more polished in proprietary offerings. Third, managed API infrastructure: guaranteed uptime, rate limiting, and enterprise support contracts simplify deployment for non-technical teams.

For organizations with ML engineering capacity, the open-source option delivers comparable quality at lower ongoing cost. For organizations that prefer managed services, proprietary APIs still offer convenience that justifies their premium.
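Whether self-hosting actually costs less depends on token volume, because GPU and engineering costs are roughly fixed while API costs grow with usage. The sketch below is one rough way to frame that comparison. Every price and volume in it is a hypothetical placeholder rather than a quote from any provider, and the cost model (flat GPU rental plus a monthly engineering share) is a simplifying assumption.

```python
from dataclasses import dataclass

HOURS_PER_MONTH = 730  # average number of hours in a month


@dataclass
class ApiPricing:
    usd_per_million_input_tokens: float
    usd_per_million_output_tokens: float


@dataclass
class SelfHostedPricing:
    gpu_usd_per_hour: float            # rental or amortized hardware cost per GPU
    gpus: int                          # GPUs kept running around the clock
    engineering_usd_per_month: float   # share of ML engineering time


def api_monthly_cost(p: ApiPricing, input_tokens: float, output_tokens: float) -> float:
    """Usage-based cost: scales linearly with monthly token volume."""
    return (
        (input_tokens / 1e6) * p.usd_per_million_input_tokens
        + (output_tokens / 1e6) * p.usd_per_million_output_tokens
    )


def self_hosted_monthly_cost(p: SelfHostedPricing) -> float:
    """Fixed cost: assumed independent of token volume up to GPU capacity."""
    return p.gpu_usd_per_hour * p.gpus * HOURS_PER_MONTH + p.engineering_usd_per_month


def savings_fraction(api_cost: float, hosted_cost: float) -> float:
    """Fraction saved by self-hosting; negative means the API is cheaper."""
    return 1.0 - hosted_cost / api_cost


if __name__ == "__main__":
    # Placeholder numbers only; substitute your own quotes and volumes.
    api = ApiPricing(usd_per_million_input_tokens=3.0, usd_per_million_output_tokens=15.0)
    hosted = SelfHostedPricing(gpu_usd_per_hour=2.0, gpus=2, engineering_usd_per_month=2000.0)

    for label, tokens_in, tokens_out in [
        ("low volume", 1e8, 2.5e7),
        ("high volume", 2e9, 5e8),
    ]:
        api_cost = api_monthly_cost(api, tokens_in, tokens_out)
        hosted_cost = self_hosted_monthly_cost(hosted)
        print(label, round(api_cost), round(hosted_cost),
              round(savings_fraction(api_cost, hosted_cost), 2))
```

With these placeholder inputs the API is cheaper at the low volume and self-hosting is cheaper at the high volume, which is the general shape to expect: the fixed cost only pays off once usage is large enough.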

What This Means for Developers Choosing Models

Evaluate open-source models on your specific workload before defaulting to a proprietary API. Run a representative sample of your production prompts through Qwen 3.5 or Llama 4, score the outputs with the same criteria you apply to your current model, and compare. If quality is comparable, the total cost of ownership for self-hosted open-source models is typically 60-80% lower than API-based alternatives at scale.
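A side-by-side evaluation like this can be sketched in a few lines. The harness below is a minimal illustration, not a full evaluation framework. `compare_models`, the stub generators, and the keyword-based scorer are all hypothetical names invented for this example. In practice each generator would wrap whichever client you use to call the model, and the scorer would encode your own quality criteria (exact match, a rubric, human ratings, and so on).

```python
from statistics import mean
from typing import Callable, Dict, List

Generate = Callable[[str], str]       # prompt -> model output
Score = Callable[[str, str], float]   # (prompt, output) -> score, higher is better


def compare_models(
    prompts: List[str],
    models: Dict[str, Generate],
    score: Score,
) -> Dict[str, Dict[str, float]]:
    """Run every prompt through every model and summarize the scores."""
    if not prompts:
        raise ValueError("need at least one prompt")

    scores: Dict[str, List[float]] = {name: [] for name in models}
    wins: Dict[str, int] = {name: 0 for name in models}

    for prompt in prompts:
        row = {name: score(prompt, generate(prompt)) for name, generate in models.items()}
        for name, value in row.items():
            scores[name].append(value)
        # Count a win only when one model is strictly best on this prompt.
        best = max(row.values())
        winners = [name for name, value in row.items() if value == best]
        if len(winners) == 1:
            wins[winners[0]] += 1

    return {
        name: {"mean_score": mean(scores[name]), "wins": wins[name]}
        for name in models
    }


if __name__ == "__main__":
    # Stub generators stand in for real model calls (assumption: you would
    # replace these with functions that call your self-hosted and API models).
    def self_hosted_stub(prompt: str) -> str:
        return f"Refund policy: {prompt}"

    def api_stub(prompt: str) -> str:
        return f"Answer: {prompt}"

    # Toy scorer: 1.0 if the output mentions "refund", else 0.0.
    def keyword_score(prompt: str, output: str) -> float:
        return 1.0 if "refund" in output.lower() else 0.0

    sample_prompts = ["How do I return an item?", "Can I get a refund?"]
    report = compare_models(
        sample_prompts,
        {"self_hosted": self_hosted_stub, "api": api_stub},
        keyword_score,
    )
    for name, summary in report.items():
        print(name, summary)
```

Keeping the generators and the scorer as plain callables means the same harness works regardless of how each model is served, so switching the comparison to a different model changes only one dictionary entry.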