OpenAI Trial Expert Warns of an AGI Arms Race

You are hearing a lot of noise around the OpenAI lawsuit, Elon Musk, and the future of artificial general intelligence. The hard part is sorting legal theater from the issue that may matter more: an AGI arms race. That phrase sits at the center of the latest testimony tied to the OpenAI trial, where Musk’s only expert witness argued that the push to build ever more capable AI systems could turn into a speed contest with weak guardrails. Why should you care? Because if top labs and their backers treat AGI like a winner-take-all prize, safety, governance, and public accountability can slip fast. And once that logic takes hold, it is hard to reverse. The courtroom drama gets clicks. The strategic warning deserves more attention.

What stands out here

- The expert witness tied the OpenAI dispute to a broader AGI arms race, not just a corporate fight.

- The core fear is incentive pressure. Labs may move faster because rivals are moving faster.

- This argument matters beyond the lawsuit because it points to weak governance across the AI sector.

- You should watch whether courts, regulators, and major AI labs start treating AGI competition as a public risk issue.

Why the AGI arms race warning matters

The phrase AGI arms race is not random branding. It frames advanced AI development as a contest where each player feels forced to accelerate, even if everyone admits the risks are real. Think of it like a defensive bidding war in pro sports. One team overpays for talent, the next team panics, and soon the whole market is distorted.

That is the real concern behind the expert testimony reported by TechCrunch. If AI labs believe the first company to reach AGI gets the biggest upside, then restraint starts to look like surrender. And that is a bad setup for safety work, independent oversight, and honest disclosure about model limits.

Look, once executives and investors start reading caution as weakness, the safety conversation can get pushed to the edge of the table.

The OpenAI trial is about more than OpenAI

The legal fight may focus on governance, corporate structure, and alleged departures from OpenAI’s original mission. But the testimony pushes the story into bigger territory. It asks whether the incentives around frontier AI are now so intense that no lab can slow down without fearing strategic loss.

That is the part policymakers should pay attention to. Courts can resolve pieces of a business dispute. They are far less equipped to manage an industry where the main players may be racing each other toward systems they only partly understand.

And yes, that should make you uneasy.

What the expert witness is really saying

Based on the TechCrunch report, Musk’s expert witness is raising a classic strategic problem. Even if each company says it cares about safe deployment, the market can still reward speed over caution. In plain English, good intentions do not cancel bad incentives.

This is not a new concern in AI policy circles. Researchers and governance groups have warned for years that frontier model development can create competitive pressure, especially when companies are chasing investment, cloud capacity, talent, and public prestige. But hearing that concern aired in a high-profile OpenAI trial gives it fresh weight.

Three practical implications

- Safety promises may weaken under pressure. Voluntary pledges sound solid until a rival releases a stronger model first.

- Transparency can shrink. Labs may share less about training methods, capability jumps, or failure modes if openness helps competitors.

- Regulators may arrive late. By the time formal rules appear, the race dynamic may already be baked into the market.

What to watch next in the AGI arms race debate

If you want to judge whether the AGI arms race concern is real, skip the slogans and watch behavior. What do the big labs actually do when capability progress speeds up?

- Do they delay launches when safety tests raise red flags?

- Do they publish meaningful evaluation results?

- Do they accept outside audits or only internal review?

- Do investors reward caution, or punish it?

- Do governments set thresholds for frontier models before the next big leap?

That last point matters most. Without clear policy triggers, every company can claim to support safety while acting as if someone else should slow down first.

Why this could reshape AI governance

The most interesting part of this story is not Musk. It is the possibility that courts and regulators begin to treat frontier AI competition as a structural risk, much like financial concentration or national security exposure. That shift would change the conversation from “Can this company be trusted?” to “Are the incentives for all companies broken?”

That is a tougher question, but it is the right one. A system built on speed, secrecy, and enormous capital requirements does not become safer because one executive gives a thoughtful interview. It becomes safer when the costs of reckless behavior are real and immediate.

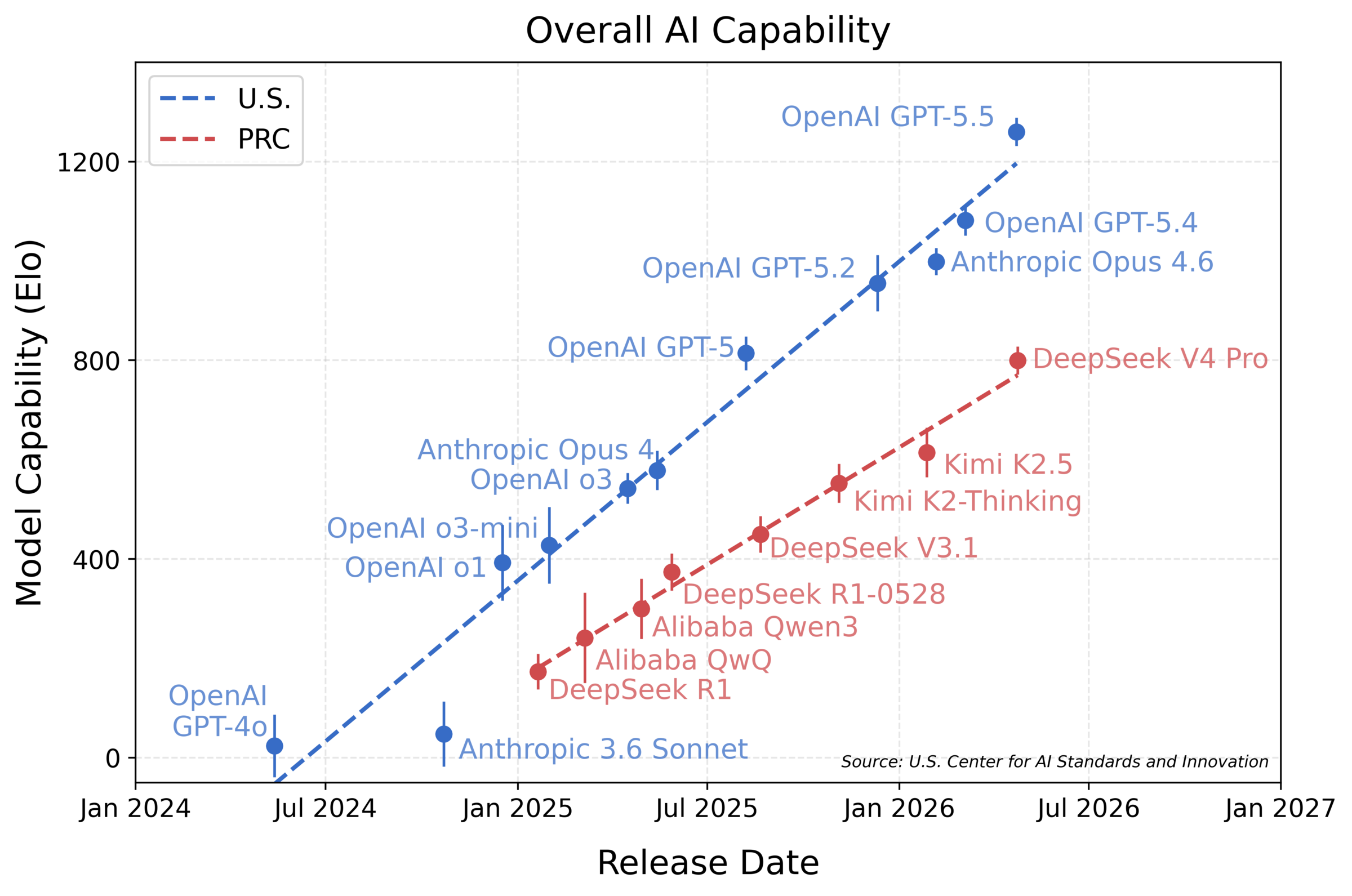

There is also a global angle here, and it is easy to miss. If U.S. firms argue they cannot slow down because foreign rivals might pull ahead, domestic regulation becomes politically harder to pass. That is how arms-race logic spreads. Fast.

What smart readers should do with this story

You do not need to pick a side in the OpenAI lawsuit to learn something useful from it. Treat this case as a signal flare. The testimony highlights a pressure point that runs through the whole AI sector.

If you work in AI, ask whether your company’s incentives reward caution or punish it. If you invest in AI, ask how safety claims are tested. If you write policy, focus less on mission statements and more on enforceable rules for compute, evaluations, deployment, and incident reporting.

The big question is not whether companies say they fear an AGI arms race. It is whether they will accept limits that make the race slower.

Where this heads from here

The OpenAI trial may end with a narrow legal result. The broader issue will not end with it. As frontier AI models improve, the pressure to move first could get stronger, not weaker. That is why this expert testimony matters.

The next phase of the AI fight will turn on incentives. Who gets rewarded for slowing down when caution is warranted? Until there is a clear answer, the AGI arms race warning will hang over every major lab release.