Qodo’s $70M Bet on AI Code Verification and Reliable Autonomy

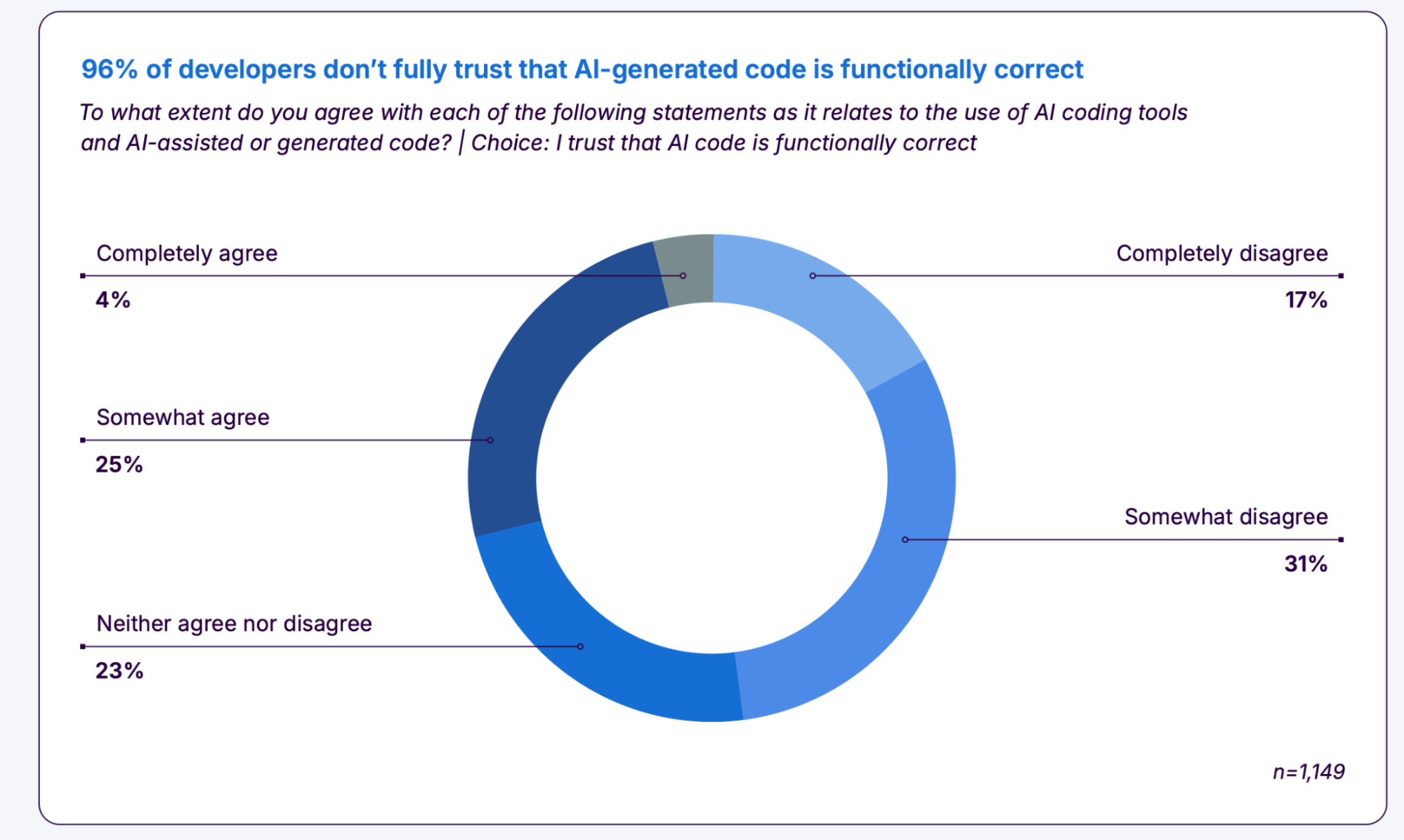

Developers love the speed of AI pair programmers, but you still own the bugs when code fails. Qodo just raised $70 million to scale AI code verification, pitching a safety layer that keeps automated commits shippable. The pitch lands because production outages cost real money, regulators are circling, and customers expect uptime that your current test suite may not guarantee. AI code verification promises to catch logic slips, supply chain risks, and compliance gaps before they land in prod. If the copilot writes ten patches an hour, who is checking every edge case? Qodo claims its platform answers that and turns verification into a first-class job instead of a frantic afterthought.

Quick Signals to Watch

- Qodo targets enterprise teams that already use AI coding tools and face audit pressure.

- Funding suggests buyers want verification as a service, not another linter.

- Platform maps tests to requirements to prove coverage for regulators.

- Claims include automatic remediation suggestions tied to source context.

AI Code Verification: Why It Matters Now

Shipping faster is easy; shipping safely is not. Qodo positions AI code verification as a defensive line that blocks bad plays before they reach production. Breach costs keep climbing, and regulators now ask for proof of control, not promises. That shifts verification from a nice-to-have to a budgeted line item. And the timing is sharp because AI-generated pull requests keep rising while reviewer bandwidth stays flat.

“The AI that writes your code should help prove it is safe, or you will drown in review debt,” one security lead told me.

How Qodo Says It Works

Look, every vendor claims magic. Qodo’s story hinges on mapping source changes to formal checks. The platform ingests code, test results, dependency metadata, and policy rules. It then scores each change for safety, suggesting missing test cases and flagging unverified behaviors. The company argues this beats a simple static scan because it tracks requirement lineage. That matters when auditors ask which tests back a payment flow or a privacy rule. The workflow mirrors a building inspection: AI builders raise the walls quickly, and Qodo signs off on the structural integrity before anyone moves in.
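To make requirement lineage concrete, here is a minimal sketch of the idea, not Qodo's implementation or API. The `Change` record, the requirement IDs, and the scoring rule (the share of touched requirements backed by at least one passing test) are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Change:
    """Hypothetical record for one pull request (not a Qodo data type)."""
    touched_requirements: set
    # Maps a requirement ID to the names of passing tests that exercise it.
    passing_tests: dict = field(default_factory=dict)


def unverified_requirements(change: Change) -> list:
    """Return touched requirement IDs that no passing test backs."""
    return sorted(
        req for req in change.touched_requirements
        if not change.passing_tests.get(req)
    )


def coverage_score(change: Change) -> float:
    """Fraction of touched requirements backed by at least one passing test."""
    if not change.touched_requirements:
        return 1.0  # Nothing to verify, so nothing is unverified.
    missing = len(unverified_requirements(change))
    return 1 - missing / len(change.touched_requirements)


# Illustrative data: the payment refund rule has no test behind it.
change = Change(
    touched_requirements={"PAY-001", "PAY-002", "PRIV-007"},
    passing_tests={
        "PAY-001": ["test_charge_card"],
        "PRIV-007": ["test_redact_email"],
    },
)
print(unverified_requirements(change))   # ['PAY-002']
print(round(coverage_score(change), 2))  # 0.67
```

The list of unverified IDs is the part an auditor would care about: it answers "which requirement has no test behind it" directly, while the score only summarizes it.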

Technical Moves That Stand Out

- Context-linked verification. Reports tie each flagged issue to the exact source line and requirement ID.

- Incremental analysis. Only changed components get rechecked, keeping pipeline time predictable.

- Remediation hints. Suggestions reference prior approved patterns, reducing guesswork.

- Supply chain checks. Dependency provenance sits beside test status to catch tampering.
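The incremental analysis item above can be illustrated with a generic content-hash comparison. This is a sketch of one common approach, assuming each component is identified by name and compared by a SHA-256 fingerprint of its source. How Qodo actually detects changed components is not described in the announcement.

```python
import hashlib


def fingerprint(source: str) -> str:
    """Return a stable content hash for one component's source text."""
    return hashlib.sha256(source.encode("utf-8")).hexdigest()


def components_to_recheck(verified: dict, current: dict) -> list:
    """List components whose source is new or differs from the last verified run.

    `verified` maps component name to the fingerprint recorded at the last
    passing run; `current` maps component name to its source text now.
    """
    return sorted(
        name for name, source in current.items()
        if verified.get(name) != fingerprint(source)
    )


# Hypothetical component names and sources.
verified = {
    "billing": fingerprint("def charge(): ..."),
    "auth": fingerprint("def login(): ..."),
}
current = {
    "billing": "def charge(): ...",   # unchanged since the last run
    "auth": "def login(user): ...",   # edited
    "export": "def dump(): ...",      # new component
}
print(components_to_recheck(verified, current))  # ['auth', 'export']
```

A production tool would also need to recheck components that depend on a changed one; this sketch leaves dependency tracking out.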

Where This Fits in Your Stack

Teams already running CI can slot in AI code verification as a gate before merge. You can keep current linters and SAST tools; Qodo aims to orchestrate them and add requirement coverage proof on top. That means fewer manual checklists and less spreadsheet tracking. But how will you measure success? Start with two metrics: reduction in escaped defects and time to approve AI-generated pull requests. If both move in the right direction within a quarter, the platform earns its budget.

And yes, there is still a trust question.

Risks, Limits, and What to Test

Automated verification can mis-prioritize issues if the requirement graph is wrong. Humans still need to declare what “good” looks like. There is also model drift risk when AI suggestions rely on patterns learned from prior merges. Treat this like a playbook: run the tool in parallel with human review for a sprint, compare the findings, and tune the rules. Can the tool handle services off the happy path, like legacy SOAP or real-time embedded code? Ask for proof before betting production on it.
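One way to run that comparison is to treat each sprint's findings as two sets and look at where they disagree. The sketch below assumes findings can be normalized to a shared ID; the `file:line:rule` format is a hypothetical convention, not something the tool is known to emit.

```python
def compare_findings(tool: set, human: set) -> dict:
    """Split findings into agreed, tool-only, and human-only groups."""
    return {
        "agreed": sorted(tool & human),
        "tool_only": sorted(tool - human),    # possible false positives, or real catches
        "human_only": sorted(human - tool),   # issues the tool missed
    }


# Hypothetical finding IDs in "file:line:rule" form.
tool = {"pay.py:42:missing-test", "auth.py:10:unpinned-dep", "api.py:7:null-check"}
human = {"pay.py:42:missing-test", "api.py:7:null-check", "soap.py:88:timeout"}

report = compare_findings(tool, human)
print(report["agreed"])      # ['api.py:7:null-check', 'pay.py:42:missing-test']
print(report["tool_only"])   # ['auth.py:10:unpinned-dep']
print(report["human_only"])  # ['soap.py:88:timeout']
```

The `human_only` group is the one to study first when tuning rules, since it shows what the tool would have let through.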

How to Pilot AI Code Verification

Here’s the thing: pilots fail when scope is vague. Pick a service with high change velocity and clear SLAs. Wire the tool into CI as a non-blocking check for two weeks. Track:

- Number of AI-generated pull requests flagged for missing tests.

- Mean time to approve those pull requests after verification feedback.

- Escaped defects tied to the pilot service.

Once you have baseline data, turn on blocking mode for critical paths only. Keep humans in the loop on severity triage. Rotate reviewers so knowledge spreads.
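The pilot bookkeeping can be as simple as a small script. The sketch below is illustrative only: the `PullRequest` fields, the hours-based approval time, and the `should_block` rule are assumptions about how a team might encode the steps above, not features of any specific product.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PullRequest:
    """Hypothetical pilot record for one AI-generated pull request."""
    flagged_missing_tests: bool
    hours_to_approve: float
    touches_critical_path: bool


def pilot_summary(prs: list, escaped_defects: int) -> dict:
    """Compute the three pilot metrics for one reporting period."""
    flagged = [pr for pr in prs if pr.flagged_missing_tests]
    return {
        "flagged_prs": len(flagged),
        # None when nothing was flagged, so an empty period is not read as zero hours.
        "mean_hours_to_approve_flagged": (
            mean(pr.hours_to_approve for pr in flagged) if flagged else None
        ),
        "escaped_defects": escaped_defects,
    }


def should_block(pr: PullRequest, blocking_enabled: bool) -> bool:
    """Block only flagged changes on critical paths, once blocking mode is on."""
    return blocking_enabled and pr.touches_critical_path and pr.flagged_missing_tests


prs = [
    PullRequest(flagged_missing_tests=True, hours_to_approve=4.0, touches_critical_path=True),
    PullRequest(flagged_missing_tests=True, hours_to_approve=6.0, touches_critical_path=False),
    PullRequest(flagged_missing_tests=False, hours_to_approve=1.5, touches_critical_path=True),
]
print(pilot_summary(prs, escaped_defects=1))
print(should_block(prs[0], blocking_enabled=False))  # False during the baseline weeks
print(should_block(prs[0], blocking_enabled=True))   # True once blocking is on
```

Keeping `blocking_enabled` as an explicit switch mirrors the two-phase rollout: the same check runs throughout, and only its consequence changes.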

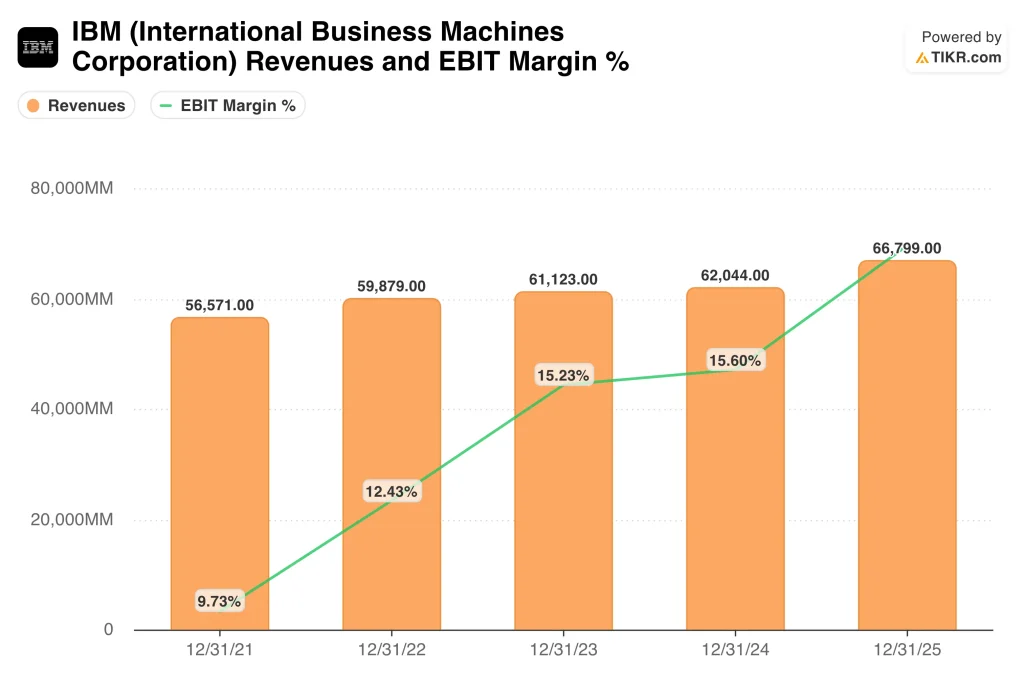

Pricing and Market Context

Qodo did not disclose pricing, but enterprise deals usually anchor to seat counts or service volume. The $70 million round signals investors expect a durable market for AI code verification, not a fad. Competitors such as established CI vendors and security platforms will respond, so expect integrations and packaging wars. Think of it like cloud bills a decade ago: verification spend will become a line item finance teams watch.

What Comes Next

Generative coding is speeding up releases, and verification is the next bottleneck. If Qodo proves its coverage claims under audit, competitors will follow. If not, engineers will revert to manual gates and slow everything down. Do you want speed with safety, or a race to roll back every Friday night?