AI Without the Cloud: How On-Device LLMs Are Becoming Practical

Most people use AI through cloud services. You type a prompt, it travels to a data center, and the response comes back over the internet. But a growing number of companies are betting that on-device LLMs, models that run directly on your phone or laptop, will become the preferred option for users who care about privacy, speed, and reliability. Multiverse Computing’s CompactifAI app runs a compressed model called Gilda locally on mobile devices. Nothing CEO Carl Pei is building an AI-first smartphone that processes intent locally. The push toward running AI offline is accelerating.

Where On-Device AI Stands Today

- Multiverse Computing’s CompactifAI runs on smartphones without internet using quantum-inspired model compression

- Nothing is developing an AI-first phone that learns user intent locally

- Apple Intelligence processes many AI features on-device for privacy

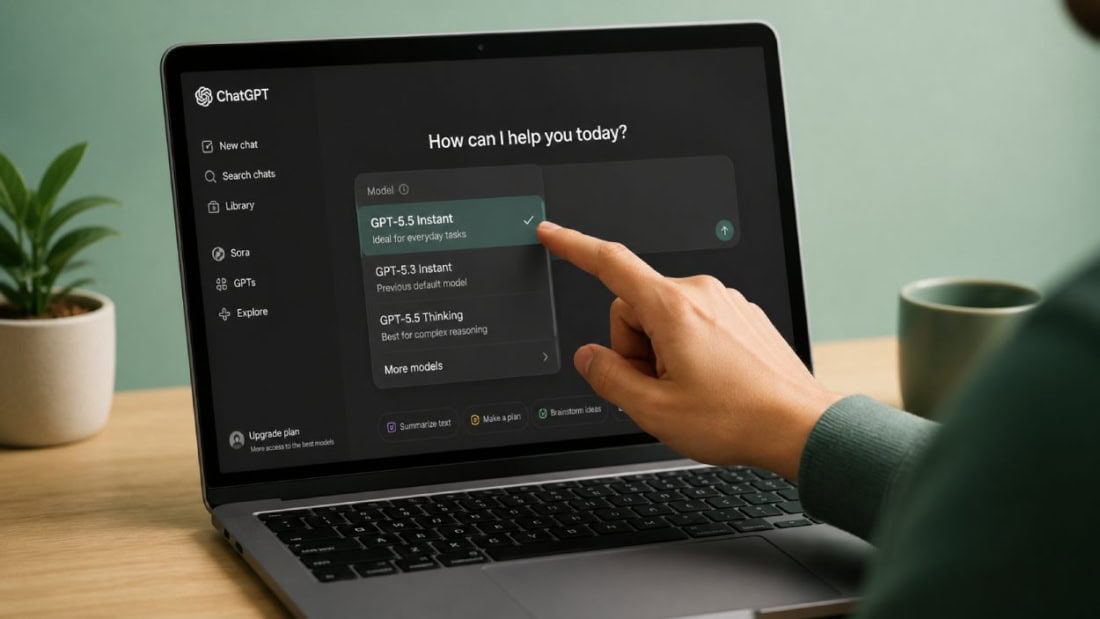

- Compressed models from OpenAI, Meta, DeepSeek, and Mistral AI are available through Multiverse’s API

- The main limitation is device hardware: older phones lack the RAM to run models locally

Why On-Device AI Matters for Privacy

When you use a cloud-based chatbot, your data leaves your device. Your prompts, context, and any files you upload travel to servers owned by the AI provider. For personal use, this might be acceptable. For healthcare providers, financial firms, legal teams, and anyone handling sensitive information, it is a real concern.

On-device models keep data local. As long as the model runs entirely on the device, what you type stays on your phone and no third-party server ever sees your queries. For regulated industries, this is not just a preference; it may become a compliance requirement.

Multiverse Computing’s compression technology uses quantum-inspired techniques to shrink models from major AI labs to sizes that fit on mobile devices while preserving useful performance.
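To see why model size decides whether a model fits on a phone, a rough back-of-the-envelope estimate helps. The sketch below is not a description of how CompactifAI works. It only applies the general rule that the memory needed to hold a model's weights is roughly the parameter count times the bits stored per weight. The model sizes and bit widths are hypothetical, chosen for illustration.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed to hold the weights alone, in GB (10^9 bytes).

    Ignores activations, caches, and runtime overhead, so real usage is higher.
    """
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical configurations, for illustration only.
examples = [
    ("7B parameters at 16 bits", 7e9, 16),
    ("7B parameters at 4 bits", 7e9, 4),
    ("3B parameters at 4 bits", 3e9, 4),
]

for label, params, bits in examples:
    print(f"{label}: ~{weight_memory_gb(params, bits):.1f} GB")
```

Under these assumptions, the first configuration needs about 14 GB for weights alone, while the smaller ones need about 3.5 GB and 1.5 GB. Reducing either the parameter count or the bits per weight is what brings a model within reach of a phone's memory.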

The Hardware Barrier

Running a useful AI model requires significant RAM and processing power. Multiverse’s CompactifAI works on newer smartphones with enough memory, but older iPhones and budget Android devices cannot handle it. When the hardware falls short, the app automatically switches to cloud-based processing, which removes the privacy advantage.
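The fallback behavior described above can be sketched as a simple routing decision. This is a minimal illustration, not the app's actual logic: the `Device` fields, the `choose_backend` function, and the memory figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Device:
    total_ram_gb: float
    reserved_gb: float  # assumed headroom for the OS and other apps


def choose_backend(device: Device, model_ram_gb: float) -> str:
    """Return "local" if the model fits in the device's spare memory, else "cloud"."""
    available = device.total_ram_gb - device.reserved_gb
    return "local" if available >= model_ram_gb else "cloud"


# Hypothetical devices and a hypothetical 3.5 GB model.
newer_phone = Device(total_ram_gb=12, reserved_gb=4)
older_phone = Device(total_ram_gb=4, reserved_gb=3)

print(choose_backend(newer_phone, 3.5))  # fits in spare memory
print(choose_backend(older_phone, 3.5))  # does not fit, falls back
```

Because the "cloud" branch sends prompts off the device, an app built this way could tell the user which path a request took, so the loss of the privacy advantage is not silent.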

This is likely a temporary problem. Phone hardware improves every year. Apple’s latest chips already support on-device AI processing for features like text summarization and image recognition. As chips get faster and more memory-efficient, the range of AI tasks that can run locally will expand.

The Vision: Phones That Think Without Asking

Nothing CEO Carl Pei described a three-stage evolution during his SXSW talk. First, AI that executes commands. Second, AI that learns long-term user goals and proactively suggests actions. Third, AI that acts autonomously on the user’s behalf without being asked. All of this would run on-device, using an interface designed for the AI agent rather than for human fingers.

Pei’s vision is ambitious, but the trends it depends on are moving in its favor. Model compression is improving. Phone hardware is getting more capable. Privacy regulations are tightening. The conditions for on-device AI are better now than ever. The remaining question is not whether it will happen, but how quickly it replaces the cloud-first model that dominates today.