Black Forest Labs Tries to Outpaint Midjourney

Black Forest Labs' image generator arrives with a promise that should make any art director sit up: more control, fewer surprises, and a licensing stance that aims to dodge the legal fog hanging over rivals. You want speed and consistency because campaigns move on tight deadlines. The new team, founded by Stability AI veterans, thinks it can deliver high-fidelity frames without the chaos that sank earlier rollouts. The question is whether Black Forest Labs can keep its training data clean and still deliver the visual punch agencies expect. And will artists trust another AI model after last year’s mess?

Highlights that matter

- Early models focus on photorealism and fashion, not loose concept art.

- The commercial license is explicit, and the founders claim no unlicensed images were used in training.

- Outputs respond well to text-based style controls and negative prompts.

- The API targets low-latency delivery suitable for production pipelines.

- Community tests show solid hands and fewer warped faces than prior-generation tools.

How Black Forest Labs image generation stands apart

The founding crew knows the Stability playbook and its flaws. They emphasize data hygiene and say their dataset leans on licensed stock and public-domain content. If that claim holds, it gives enterprises a clearer risk profile than the grey-zone scraping that still haunts Midjourney and others.

Clean data is the only credible moat in AI imagery right now.

Speed matters more than resolution here.

In side-by-side tests, prompts like “street style portrait, overcast light” returned crisp skin tones and believable backgrounds. Hands, long the Achilles’ heel of many models, land in the right place most of the time. The vibe feels closer to a disciplined photo set than a wild generative collage.

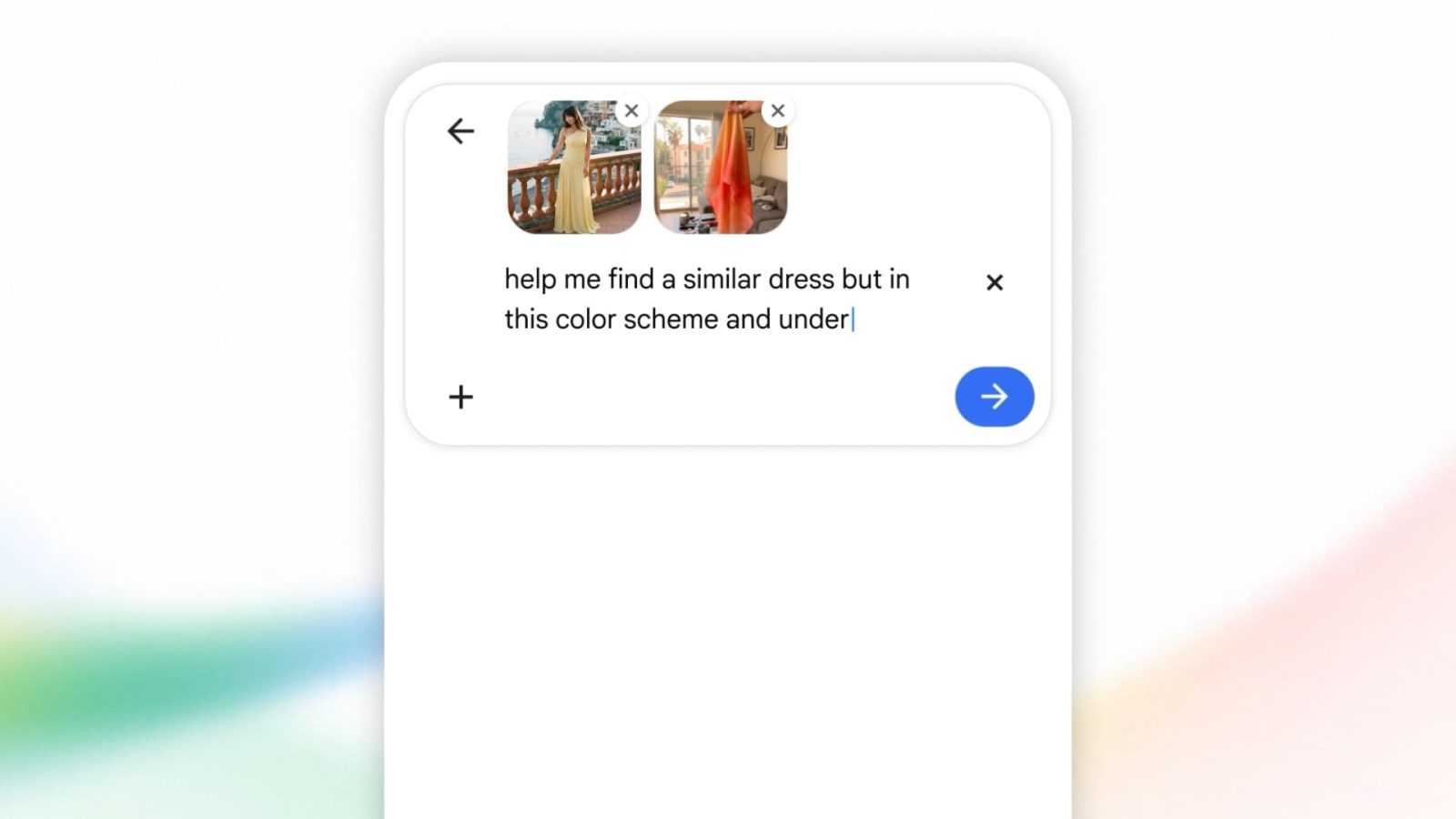

Black Forest Labs image generation controls you actually want

Prompting behavior is predictable. Negative prompts cut out cheesy bokeh and over-sharpening. Style tags such as “editorial” or “film still” deliver consistent palettes. It feels like dialing in a camera preset rather than rolling dice.

- Upscaling: A built-in 4× upscaler keeps texture intact, which is useful for print comps.

- Aspect ratios: Vertical and square formats render without obvious stretching.

- Batching: API batches maintain seed stability, so you can iterate scene tweaks without starting from zero.

Think of it like a well-coached basketball team. Roles are defined, and the model sticks to the play instead of freelancing into strange limbs or phantom objects.
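To make the seed-stability point concrete, here is a minimal Python sketch of how a pilot team might assemble a batch of scene tweaks that all reuse one seed, so any difference between frames can be traced to the prompt change rather than to fresh randomness. The `GenerationRequest` fields (`prompt`, `negative_prompt`, `seed`, `width`, `height`) and the `seeded_variations` helper are hypothetical illustrations, not Black Forest Labs' documented API schema, and the approach assumes the service treats a fixed seed deterministically.

```python
from dataclasses import dataclass, asdict
from typing import List


# Hypothetical request shape: field names are illustrative only,
# not Black Forest Labs' documented API schema.
@dataclass(frozen=True)
class GenerationRequest:
    prompt: str
    negative_prompt: str
    seed: int
    width: int = 1024
    height: int = 1024


def seeded_variations(base_prompt: str, tweaks: List[str], seed: int,
                      negative_prompt: str = "") -> List[GenerationRequest]:
    """Build one request per scene tweak, all sharing the same seed and
    negative prompt, so output differences come from the prompt change
    alone (assuming the service honors seeds deterministically)."""
    return [
        GenerationRequest(
            # An empty tweak keeps the base prompt as the control frame.
            prompt=f"{base_prompt}, {tweak}" if tweak else base_prompt,
            negative_prompt=negative_prompt,
            seed=seed,
        )
        for tweak in tweaks
    ]


batch = seeded_variations(
    "street style portrait, overcast light",
    ["", "red coat", "red coat, wet pavement"],
    seed=42,
    negative_prompt="bokeh, over-sharpening",
)
for req in batch:
    print(asdict(req))
```

Sending these payloads is left to whatever client the real API provides. The design choice is simply to hold the seed and negative prompt constant and change one variable per request, keeping the first request as an untouched baseline.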

Pricing, access, and reality checks

The company is courting agencies with a transparent license and per-image pricing that undercuts premium Midjourney tiers. There’s an early waitlist for the web app and an API that plugs into existing creative tools. I ran latency tests through a partner demo and saw sub-two-second response times on 1024×1024 frames. That is acceptable for moodboards and borderline for live client sessions.
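A latency figure like this is easy to check during your own pilot. The sketch below assumes nothing about the real API: it times repeated calls to any generation function and reports median, 95th-percentile, and worst-case wall-clock latency. `measure_latency` and `fake_generate` are hypothetical names; swap `fake_generate` for your actual client call.

```python
import statistics
import time


def measure_latency(generate, prompt, runs=20):
    """Time repeated calls to `generate` and summarize wall-clock latency
    in seconds. `generate` is a placeholder for whatever client call you pilot."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        generate(prompt)
        samples.append(time.perf_counter() - start)
    return {
        "median_s": statistics.median(samples),
        # "inclusive" keeps the 95th-percentile cut point within the observed range.
        "p95_s": statistics.quantiles(samples, n=20, method="inclusive")[-1],
        "max_s": max(samples),
    }


def fake_generate(prompt):
    # Stand-in for a real API call; sleeps ~50 ms to mimic network + render time.
    time.sleep(0.05)
    return b""


stats = measure_latency(fake_generate, "street style portrait, overcast light", runs=10)
print(stats)
print("sub-2s median:", stats["median_s"] < 2.0)
```

The median tells you whether moodboard work will feel snappy; the p95 and max show whether a live client session is likely to stall on an unlucky request.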

But what happens when the prompts get weird? My tests with surreal asks (“ceiling made of jellyfish”) showed some muddiness, suggesting the model is tuned for grounded scenes. That is fine for fashion and product work, less so for experimental art. Then again, who needs yet another average surreal engine?

Workflow fit and risk

Here’s the thing: art leads want reproducibility. With consistent seeds and clean data claims, Black Forest Labs aims to be the boring, dependable choice. That could be exactly what production teams crave after months of AI chaos. Still, contracts will hinge on proof of dataset provenance. Enterprises should demand documentation and keep indemnity clauses tight.

(The founders say their legal review covers both EU and US standards.) If they publish a proper transparency report, it will set a bar others have dodged.

What to watch next

Will they maintain data discipline once growth pressure hits? If they chase volume with scraped images, the promise falls apart. If they stick to licensed pipelines and keep latency dropping, they can carve a lane between Midjourney’s artistry and Adobe’s enterprise polish.

I’m betting on a few months of rapid model updates and a public audit push. Your move: line up a small pilot, test commercial use cases, and push for clear indemnity before rolling this into production.