
David Silver on Reinforcement Learning’s Next AI Bet

Most AI coverage right now fixates on chatbots, larger context windows, and the race to ship new model features. But that misses a harder question.

Bumble Ditches the Swipe

If dating apps feel stale, you are not imagining it. The swipe mechanic that once felt fast and addictive now looks dated, and for many users it feels more like sorting than c

AI Cancer Detection in Radiology: What Doctors Still Do Better

You have probably seen the pitch by now. AI can scan medical images faster than a human, flag hidden tumors, and help fix radiology backl

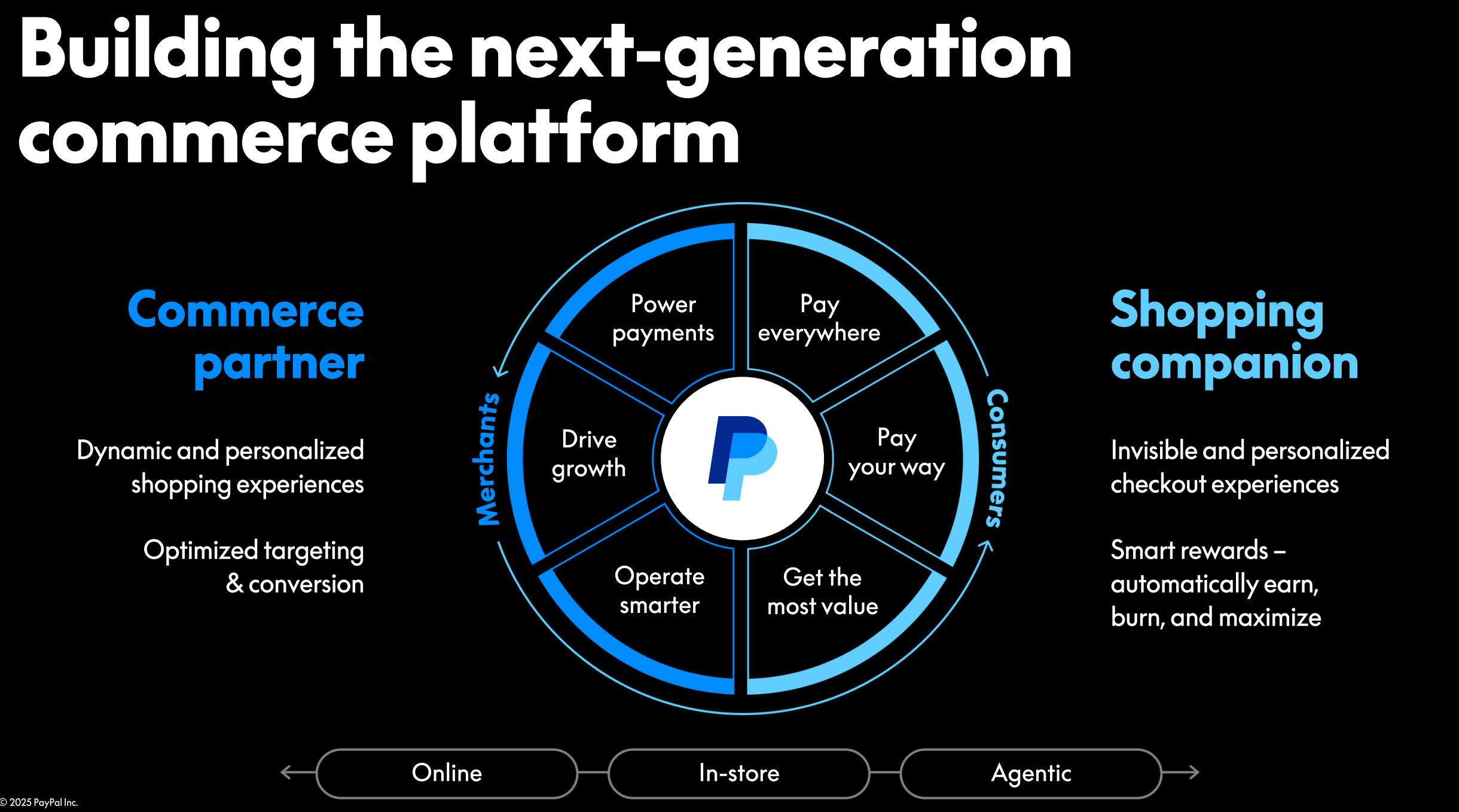

PayPal AI Strategy: What Becoming a Tech Company Again Really Means

PayPal has a perception problem. For years, many people saw it as a payments utility, not a company setting the pace in tech. Now th

AI Analysis of the Holbein Anne Boleyn Portrait

Art history rarely suffers from a lack of debate, but the fight over Anne Boleyn’s image has a special charge. If you are trying to understand the lates

Apple Sold Out Mac Mini and the OpenCore Legacy Patcher Catch

If you have been eyeing a small desktop for local AI work, home lab jobs, or a quiet office setup, the Apple sold out Mac mini story proba

BioticsAI Founder on FDA Approval and Healthcare Fundraising

Building a healthcare startup looks exciting from the outside. Then reality hits. Regulation moves slowly, hospital sales cycles drag on, a

China AI Jobs Are Reshaping Tech Work

If you work in tech, the rise of AI is no longer an abstract story about future disruption. It is a hiring story, a salary story, and for many people, a job secur

AI-Driven Mac Demand Catches Apple Off Guard

If you have been trying to read the PC market lately, you have probably seen two stories at once. One says computers are a mature business with modest upgr

Waymo Emergency Response Problems Are Getting Harder to Ignore

If you live in a city where robotaxis are spreading fast, you probably care about one question more than any product demo or investor pit

Where the Goblins Came From Explained

If you read Where the Goblins Came From and felt half-intrigued, half-unsure what to do with it, you are not alone. The piece matters because it points at a stubb

Pentagon AI Partnerships With OpenAI, Google, and SpaceX

The Pentagon is moving faster with commercial tech firms, and that should get your attention if you follow AI, defense, or both. The new wave o

xAI vs OpenAI Trial: What Model Distillation Could Change

AI companies copy, borrow, license, and compete at a pace that leaves most readers squinting at the fine print. That is why the xAI OpenAI tri

Scout AI Raises $100M for Military AI

Defense tech is back in force, and that puts a company like Scout AI under a bright light. If you track military startups, procurement, or AI policy, this matters

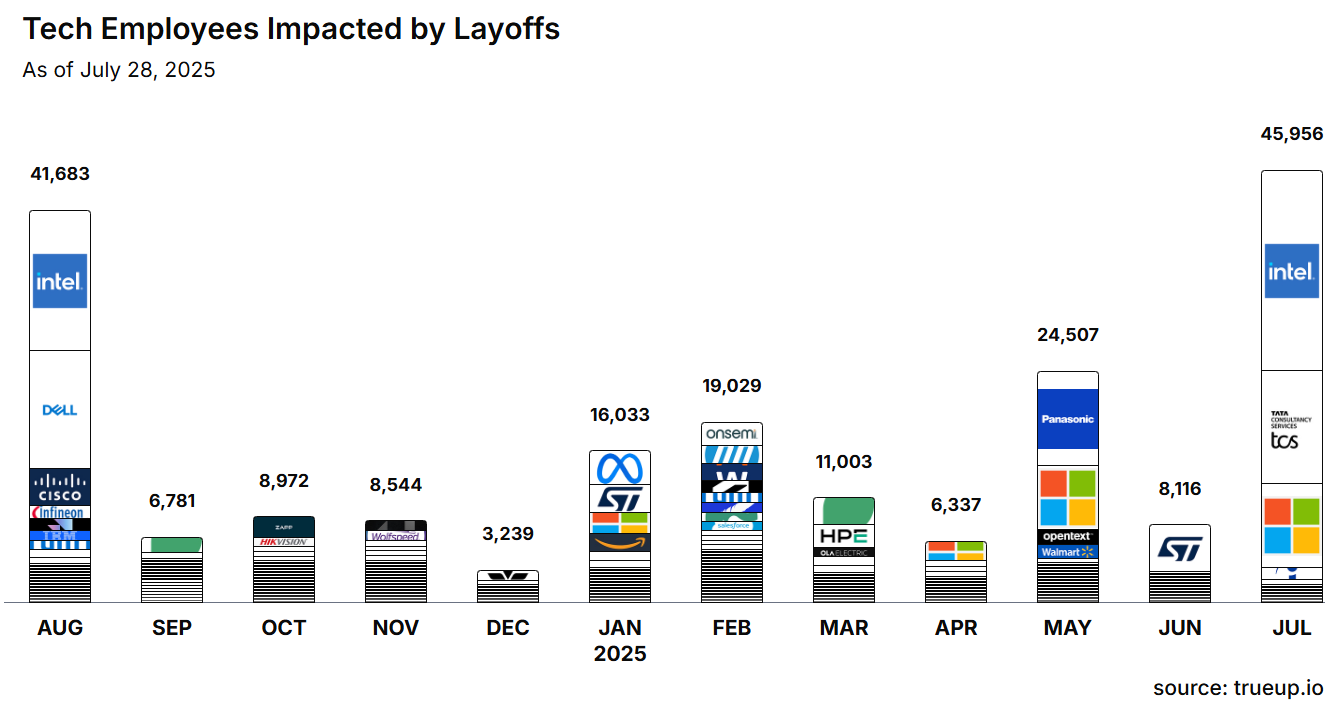

Tech Layoffs and the AI Push

Tech workers are trying to read a messy job market. Companies are still talking about growth, yet more teams keep shrinking. The phrase tech layoffs AI push sums up the te

Meta Layoffs 2025: What 10% Staff Cuts Mean

If you follow big tech, you have seen this pattern before. A company talks up efficiency, doubles down on AI, and then trims headcount hard. The latest Meta

AI-Powered Table Tennis Robot Marks a Robotics Breakthrough

The AI-powered table tennis robot is more than a party trick. It is a hard test of perception, timing, and control, and this new milestone s

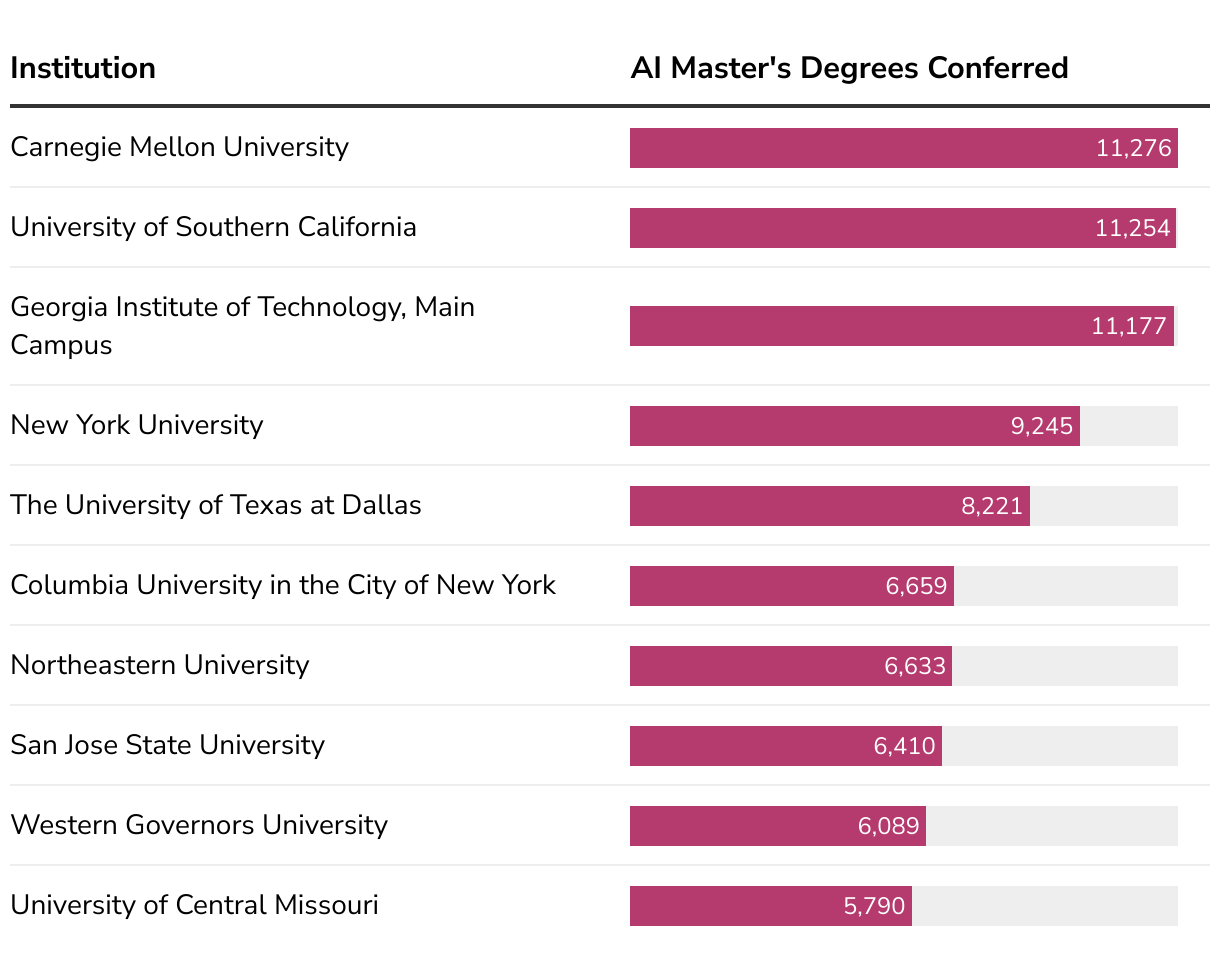

AI College Majors: What Actually Pays Off

Students keep asking whether AI college majors will still pay the bills by the time they graduate. Employers now hunt for practical model-building skills, not

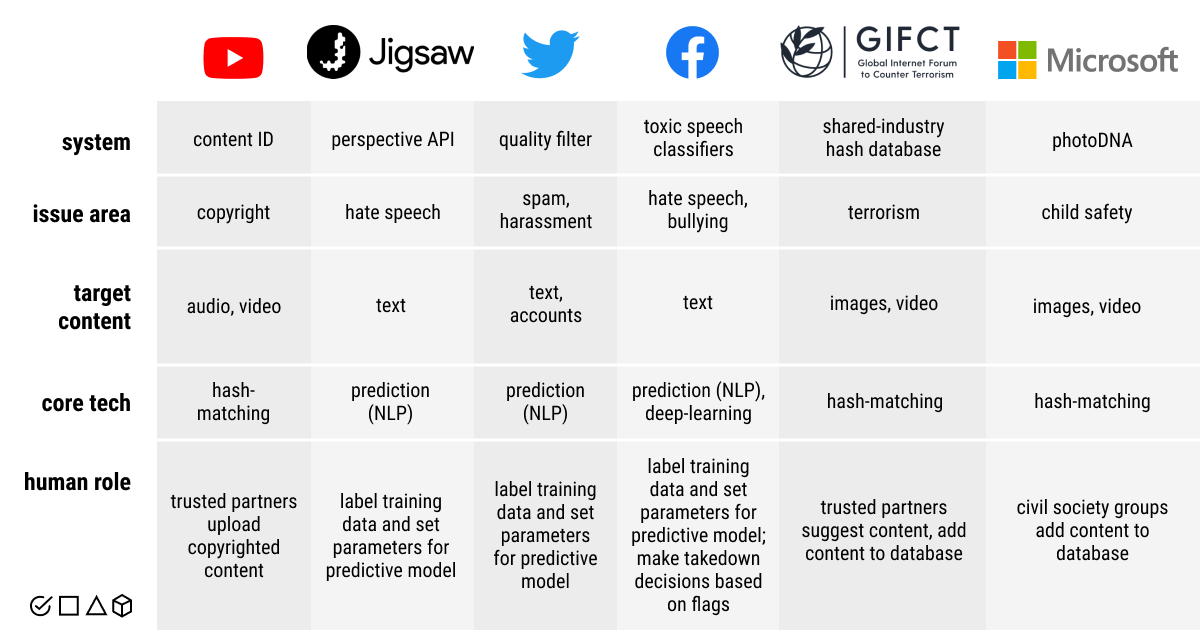

AI Content Moderation Tools: Moonbounce Bets on Safer Generative Media

Your feeds are already filling with AI-written posts and synthetic video, and you know the trolls will follow. Companies are scra

AI Weather Apps: What Matters Now

You open your phone expecting a clear read on the day, but AI weather apps keep serving conflicting alerts. That matters when you are timing a flight or protecting a

The Complete Guide to AI Model Quantization in 2026

Running a 72-billion-parameter model requires about 144GB of GPU memory for its weights at 16-bit precision. Most practitioners do not have that hardware. LLM quantization

How to Reduce LLM Hallucinations: 7 Techniques That Work in Production

LLMs generate confident, fluent text that is sometimes completely wrong. This tendency to hallucinate is the single biggest barri

Meta Releases Llama 4 Fine-Tuning Toolkit: What’s New

Meta shipped a major update to its Llama 4 fine-tuning toolkit in April 2026. The release includes a unified CLI tool that handles LoRA, QLoRA, an

AI Chip Wars: AMD MI400 vs NVIDIA Blackwell vs Intel Gaudi 3

The AI chip comparison 2026 landscape has three serious contenders for data center AI workloads: AMD’s MI400, NVIDIA’s Blackwell B200, and

RAG Is Not Dead: How Retrieval-Augmented Generation Evolved in 2026

When GPT-5.4 launched with a 1 million token context window, a common reaction was: “RAG is dead. Just stuff everything in the conte

Large Genome Model: Open Weights for Trillions of Bases

Labs keep hitting the same wall: translating raw sequencing data into answers without burning budgets or months. An open Large Genome Model trai

Mixture of Experts in 2026: How Modern LLMs Route Tokens Efficiently

The largest language models in 2026 share a common architectural pattern: mixture of experts (MoE). GPT-5.4, Qwen 3.5, DeepSeek-V4,

Why AI Stumbles on Certain Games and How to Fix It

Developers want game agents that win, learn, and entertain, yet AI game playing limitations keep showing up in titles that reward improvisation or hi

How to Fine-Tune Qwen 3.5 on Your Own Data

Alibaba’s Qwen 3.5 is the strongest open-weight multimodal model available today. But a base model trained on general data will not match the performance you

NVIDIA Rubin Platform Explained: What the Next-Gen GPU Means for AI Training

NVIDIA announced the Rubin platform at CES in January 2026 and has been rolling out technical details since. The platform r

Alibaba’s Qwen 3.5 Is the Strongest Open-Weight Multimodal Model Yet

Alibaba released Qwen 3.5 in March 2026 and it immediately set new benchmarks for open-weight multimodal AI. The model processes te

The AI Energy Bottleneck: How Grid-Scale Batteries Fit In

AI Cannot Scale Without Solving the Power Problem First. AI runs on electricity, and there is not enough of it. Goldman Sachs projects that AI data center power consumption will rise 175% by 2030. Sigh

What Trainium3’s Neuron Switches Mean for AI Infrastructure

Amazon’s Neuron Switches Are Changing How AI Chips Communicate. Individual AI chips are fast. But in a data center, what matters most is how thousands of chips work together. Amazon’s custom Neuron swi

AI Data Collection at Scale: From Delivery Routes to Training Sets

Gig Workers Are Becoming AI’s Data Collection Network. Training AI systems that interact with the physical world requires real-world data that cannot be scraped from the internet. DoorDash and Uber hav

Compressing Large AI Models Without Losing Performance

How Quantum-Inspired Compression Shrinks AI Models. Large AI models like GPT-4 and Llama require massive computational resources. They run on GPU clusters in data centers, consuming significant power a

How Amazon Builds and Tests AI Chips from Scratch

Inside Amazon’s Chip Lab: Where Trainium Gets Built. Amazon’s custom AI chip program started in 2015 when the company acquired Israeli chip designer Annapurna Labs for $350 million. More than 10 years

Gemini 3.1 Flash-Lite Cuts Inference Costs While Doubling Speed

Google shipped Gemini 3.1 Flash-Lite in March 2026 as a purpose-built model for high-volume inference workloads. The model delivers throughput roughly double that of Gemini 3.1 Flash while maintaining

MangroveGS Predicts Cancer Spread with 80% Accuracy Using Gene Patterns

A research team from Johns Hopkins University and the National Cancer Institute published results in March 2026 demonstrating MangroveGS, a machine learning framework that predicts cancer metastasis w

NVIDIA Nemotron 3 Super Targets Multi-Agent Enterprise Coding

NVIDIA launched Nemotron 3 Super in March 2026, a 253-billion-parameter language model purpose-built for enterprise software engineering. The model is trained on a curated dataset of production-grade

Qwen 3.5 9B Outperforms Larger Models on Graduate-Level Reasoning

Alibaba Cloud released Qwen 3.5 in March 2026, and its 9-billion-parameter variant is turning heads. On the GPQA Diamond benchmark, which tests graduate-level science and reasoning, Qwen 3.5 9B scores

AI Framework Accelerates Alloy Discovery Through Expert Knowledge Fusion

Researchers at MIT and the Max Planck Institute for Iron Research published a paper in March 2026 describing an AI framework that accelerates alloy discovery by fusing expert metallurgist knowledge wi

Dynamic Sparse Training Cuts AI Energy Use by Up to 90%

A team of researchers from the University of Edinburgh and Google DeepMind published results in March 2026 showing that dynamic sparse training can reduce AI training energy consumption by up to 90% w

On-Device AI Gets Real with Qualcomm Dragonwing Q-8750

Qualcomm announced the Dragonwing Q-8750 processor in March 2026, delivering 100 TOPS of AI compute in a chip designed for edge devices. The processor can run 7-billion-parameter language models entir